Google March 2026 core update: What you need to know and how to adapt

The Google March 2026 core update began rolling out March 27. Learn what changed, which sites are affected, how AI Overviews...

Generative design has moved from experiment to everyday workflow. Marketers and designers use models to create images, layouts, and concepts at a pace human teams cannot match on their own.

The risk is that this new volume quietly changes your visual standards in ways no one intended. This article shows how to build AI-ready brand systems, guardrails, and measurement so generative design helps you scale while protecting your identity.

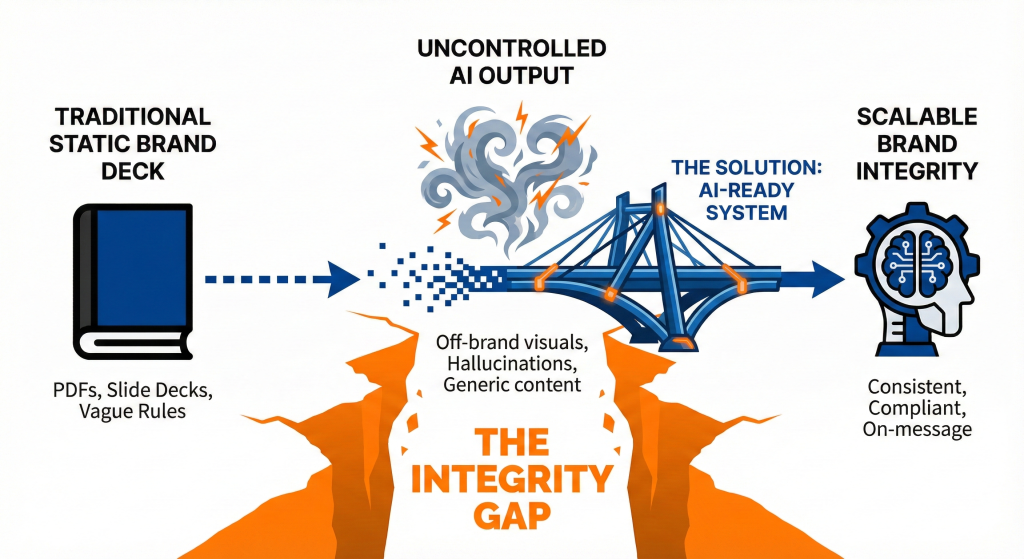

Generative AI threatens brand integrity when teams plug tools into old guidelines, skip governance, and let models improvise without clear constraints. To keep control, you need to understand where AI breaks visual and verbal patterns, then design systems, training data, and review workflows that keep every new asset recognizable, compliant, and on message.

When teams adopt generative design quickly, they often do it on top of static brand decks. In one survey, up to 96 percent of marketing departments had deployed AI for at least one project, yet traditional brand guidelines were not designed for AI use. Monigle notes that these decks lack the structure models need to preserve identity at scale.

The scale of the risk is high. A 2023 study found that 73 percent of US marketers had already used generative AI tools in their work, and about 36 percent of organizations use generative AI to produce images. As more content comes from models, the chance of off-brand visuals, biased imagery, or generic output rises.

Consumers notice. Research cited by Averi shows that 77 percent of people say they can identify AI-generated content and 68 percent trust it less than human-created content. If your AI visuals feel cheap or random, that drop in trust lands on your brand, not on the tool.

You can see these risks in public examples. A Business Insider review of Coca-Cola’s 2025 AI-generated holiday ad highlighted glitches and inconsistent visuals. Commentators questioned whether these quality issues could harm brand trust.

Adobe’s Digital Trends 2025 report shows the other side. Among practitioners who already see ROI from generative AI, 50 percent expect better communication consistency in the next two years. That suggests AI can strengthen brand integrity when it runs inside strong systems instead of scattered experiments.

Generative design is not the problem on its own. The problem is generative design without clear guardrails, AI-ready brand rules, and defined thresholds for what “on brand” means at scale.

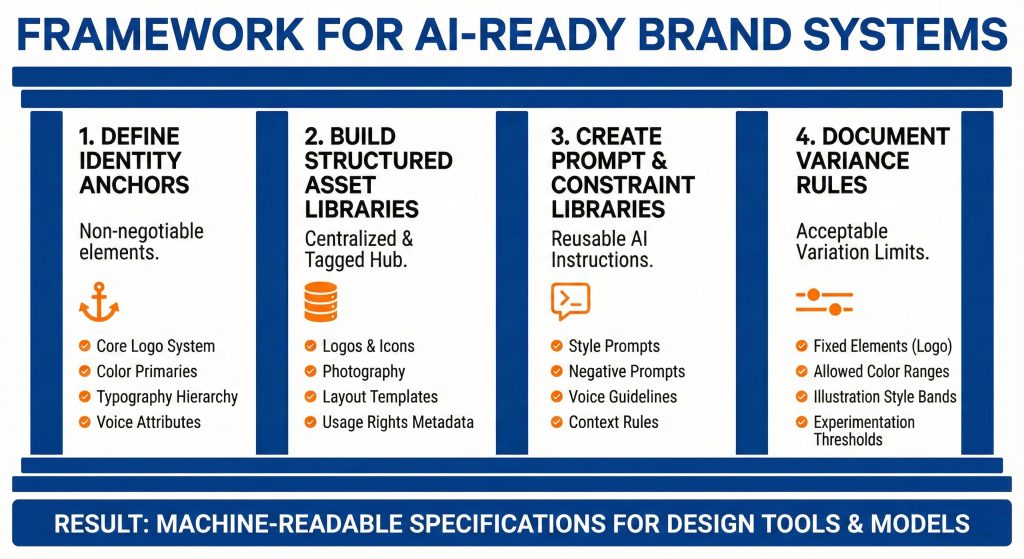

You cannot maintain brand integrity with generative design if your only source of truth is a static brand book. To guide AI tools, you need an AI-ready brand system: structured assets, tokens, and examples that models can use as training data, prompts, and constraints across every workflow.

Most brands still rely on a long PDF or slide deck with colors, logos, and a few dos and don'ts. That format helps humans, but models need structure. Monigle argues that brands must convert guidelines into machine-readable systems. This means turning colors into tokens, mapping typography to clear rules, and tagging assets with usable metadata.
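As a rough illustration of what "machine-readable" can mean here, the sketch below stores colors and typefaces as tokens and checks a tagged asset against them. The names `BrandTokens`, `Asset`, and `off_brand_issues`, and every value in the example, are hypothetical placeholders, not part of any platform mentioned in this article.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class BrandTokens:
    """Hypothetical machine-readable brand rules (placeholder values only)."""
    colors: dict[str, str]           # token name -> approved hex value
    font_families: tuple[str, ...]   # approved typefaces


@dataclass
class Asset:
    """A generated asset plus the metadata a tool could inspect."""
    name: str
    colors_used: list[str]
    font_family: str
    tags: dict[str, str] = field(default_factory=dict)


def normalize_hex(value: str) -> str:
    """Compare hex colors case-insensitively and ignore stray whitespace."""
    return value.strip().lower()


def off_brand_issues(asset: Asset, tokens: BrandTokens) -> list[str]:
    """Return a human-readable issue for every rule the asset breaks."""
    approved = {normalize_hex(v) for v in tokens.colors.values()}
    issues = []
    for color in asset.colors_used:
        if normalize_hex(color) not in approved:
            issues.append(f"color {color} is not an approved token")
    if asset.font_family not in tokens.font_families:
        issues.append(f"font {asset.font_family} is not approved")
    return issues
```

An empty list means the asset passed these two checks. A real system would likely cover more rules, such as logo placement or imagery style, which this sketch leaves out.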

Platforms like Typeface and Frontify show how this works in practice. Typeface uses a Brand Hub and Brand Agent that translate guidelines into active rules for content and visuals. Frontify adds AI guardrails directly in a brand management system so teams stay within approved ranges on tone, imagery, and logo usage.

A simple way to think about this is that your brand system needs to answer three questions for AI tools.

“When we turn brand decks into AI-ready systems, the biggest shift is clarity. Designers, marketers, and models all pull from the same rules instead of guessing.”

Georgia Callahan, Executive Creative Director

You can use a four-part model to make your brand guidelines ready for generative design.

When Launchcodex builds AI systems for clients, this is often the first step. The brand work becomes a technical specification that design tools, generative models, and content pipelines can use directly.

| Approach | Who it fits | Key strength | Watch out for |
|---|---|---|---|
| Static brand deck | Small teams, low AI adoption | Simple to share and understand | Hard for AI tools to use, high risk of inconsistent use |
| AI-ready brand system | Growing and enterprise brands | Machine-readable rules and assets for AI tools | Higher setup effort, needs ongoing governance |

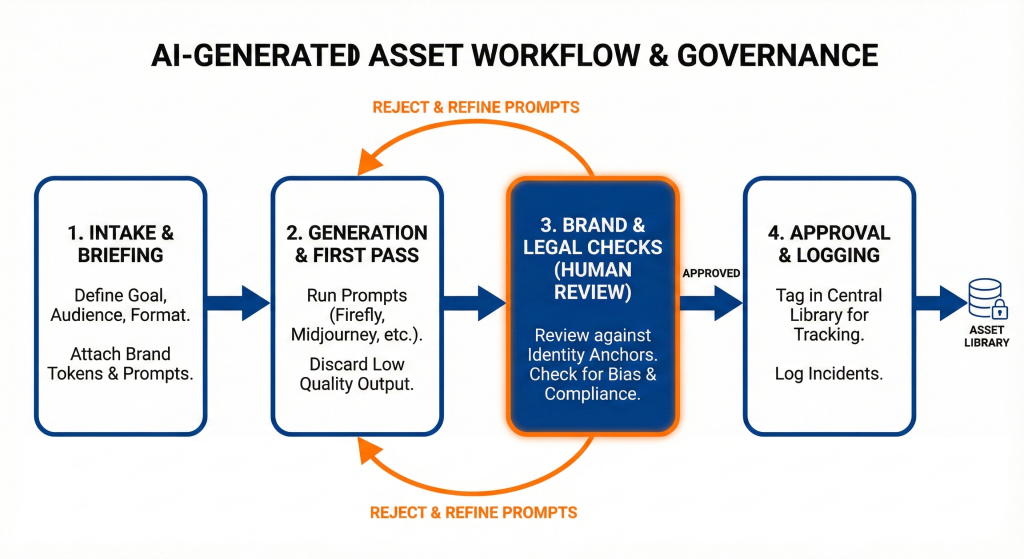

Even with strong brand systems, you will lose integrity if generative design runs outside normal governance. You need clear guardrails, approvals, and incident processes so AI outputs go through the same scrutiny as human work, with extra checks for risks like hallucinations, bias, and synthetic content misuse.

The IAB reports that over 70 percent of marketers have encountered at least one AI-related incident in advertising, such as hallucinations, bias, or off-brand content, yet fewer than 35 percent plan to increase investment in AI governance or brand integrity oversight.

Frontify frames AI for brand management as a governance-first question. They show that AI helps large organizations reduce brand risk when it automates guardrails. Aprimo also stresses that AI should amplify human judgment, not replace it. That only happens when approvals and editorial review stay in place.

Your goal is to embed generative design into existing workflows instead of letting it live in side projects.

“When we add AI to creative workflows, we treat it like any other system. If it cannot pass review, it does not ship, and we log why.”

Derick Do, Co-Founder and Chief Product Officer
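The "if it cannot pass review, it does not ship, and we log why" rule can be sketched as a small gate function. This is a minimal sketch under assumptions: the check names, the `review_gate` function, and the `ReviewResult` type are hypothetical, and the automated checks themselves are supplied by the caller as simple pass or fail flags.

```python
import logging
from dataclasses import dataclass

logger = logging.getLogger("brand_review")


@dataclass
class ReviewResult:
    approved: bool
    reasons: list[str]


def review_gate(asset_name: str, checks: dict[str, bool],
                human_approved: bool) -> ReviewResult:
    """Block an asset unless every automated check and a human reviewer pass it."""
    reasons = [f"failed check: {name}"
               for name, passed in checks.items() if not passed]
    if not human_approved:
        reasons.append("missing human approval")
    approved = not reasons
    if not approved:
        # Record why the asset was blocked so the decision can be audited later.
        logger.warning("Asset %s blocked: %s", asset_name, "; ".join(reasons))
    return ReviewResult(approved=approved, reasons=reasons)
```

For example, `review_gate("holiday-banner", {"palette": True, "bias_scan": False}, human_approved=True)` returns a result with `approved` set to `False` and a single logged reason. Keeping the human sign-off as a required input reflects the point that AI should support human judgment instead of replacing it.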

You can use a simple four-step process to keep generative design under control.

For multi-location or franchise brands, this workflow can sit inside a central portal where local teams request assets instead of generating them alone. That keeps large networks aligned while allowing local nuance.

Generative design becomes more reliable when models see real examples of your brand. By curating training sets, using brand hubs, and feeding models structured prompts, you move from random experimentation to outputs that carry your visual and verbal DNA into every new asset.

Tools like Typeface, Averi, and Corebook AI show that training AI on real brand data is often the fastest way to reduce drift. Averi learns from edits and approvals so copy stays closer to your real voice. Typeface builds an internal Brand Agent that uses your assets and rules to guide every new piece of content.

If your model only sees public internet data, it will default to generic styles. That is how you get imagery that looks like every other brand in your category.

Lucidpress research shows that brand consistency across channels can increase revenue by 10 to 33 percent. That is a strong case for training AI to reproduce your distinct patterns instead of generic ones.

You can structure a training set for generative design with four main asset types.

If you do not measure brand consistency, you cannot know whether generative design is helping or hurting you. Set up clear metrics, from recall and trust to revision rates and time to asset, and use them to adjust prompts, rules, and workflows as AI adoption grows.

Nielsen’s research on emerging media campaigns found that brand recall accounted for 38.7 percent of brand lift, more than baseline awareness. That means recognizability is tied directly to business results.

At the same time, many organizations still treat AI as a side experiment without clear metrics. HubSpot reports that 85 percent of marketers believe generative AI will transform content creation. Without measurement, transformation can include both gains and quiet damage.

You want to know not only how much faster generative design makes you, but also whether it protects or improves your brand metrics.

You can track a mix of qualitative and quantitative signals.
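Two of the quantitative signals named earlier, revision rate and time to asset, are simple to compute once each asset has a request time, an approval time, and a count of revision rounds. The sketch below assumes that record shape. `AssetRecord` and both function names are hypothetical, and the definitions (for instance, counting an asset as revised if it needed at least one round) are one reasonable choice among several.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import median


@dataclass
class AssetRecord:
    requested_at: datetime
    approved_at: datetime
    revision_rounds: int


def revision_rate(records: list[AssetRecord]) -> float:
    """Share of assets that needed at least one revision round."""
    if not records:
        return 0.0
    revised = sum(1 for r in records if r.revision_rounds > 0)
    return revised / len(records)


def median_hours_to_asset(records: list[AssetRecord]) -> float:
    """Median hours from request to approval. Expects at least one record."""
    hours = [(r.approved_at - r.requested_at).total_seconds() / 3600
             for r in records]
    return median(hours)
```

Tracking these per tool or per prompt library, and comparing AI-assisted work against a human-only baseline, is one way to see whether speed gains come with more rework.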

Maintaining brand integrity with generative design also means managing regulatory, ethical, and reputational risk. You need clear policies on data use, disclosure, and synthetic content, plus a responsible AI framework that defines how humans and models share work across your brand ecosystem.

As AI-generated visuals spread, regulators and watchdogs are paying more attention to synthetic content, privacy, and misinformation. Brands that operate in regions covered by GDPR or CCPA must treat personal data in training sets with care, especially when faces or real locations appear.

Consultancies such as Accenture note that concerns about brand integrity and job loss have pushed some global brands, including Apple and Wells Fargo, to restrict generative AI use. DAC Group highlights that this often reflects missing governance rather than a lack of potential value.

If your guidelines only live in a static PDF or slide deck, they are not ready for AI. You need structured assets, tokens, prompts, and variance rules that tools and workflows can use directly. Start by turning colors, typography, and logos into a design system, then build prompt and asset libraries on top.

Tools that sit inside your existing design and brand stack tend to be safer. Examples include Adobe Firefly inside Creative Cloud, brand-specific platforms like Typeface or Frontify, and internal systems that use your own models. What matters most is governance, training data, and integration with your brand hub.

Any AI-generated asset used in paid campaigns, core web pages, or major emails should be reviewed every time. For lower-risk channels, such as internal decks, you can apply spot checks. At a system level, review your prompts, rules, and training sets at least once a quarter.
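A review policy like this can be written down as a small rule so it is applied the same way every time. In the sketch below, the channel names, the `needs_review` function, and the spot-check rate of one in ten are all assumptions for illustration. Channels that are not listed default to a full review, which is also an assumption and a deliberately cautious one.

```python
# Channels where every AI-generated asset is reviewed (labels are illustrative).
HIGH_RISK_CHANNELS = frozenset({"paid_campaign", "core_web_page", "major_email"})
# Channels where spot checks are considered enough.
LOW_RISK_CHANNELS = frozenset({"internal_deck"})


def needs_review(channel: str, asset_index: int,
                 spot_check_every: int = 10) -> bool:
    """Decide whether the asset at this position in the queue gets a human review."""
    if channel in HIGH_RISK_CHANNELS:
        return True
    if channel in LOW_RISK_CHANNELS:
        # Spot check: review every Nth asset (N is an assumed default).
        return asset_index % spot_check_every == 0
    # Unknown channels are treated as high risk until someone classifies them.
    return True
```

The quarterly review of prompts, rules, and training sets sits outside this function and would typically be handled as a recurring calendar task.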

Yes, but the implementation will be lighter. Smaller teams can still define identity anchors, store assets in a shared folder or simple DAM, and use a short prompt library. The key is to keep AI usage intentional and measured, not random.
