Google March 2026 core update: What you need to know and how to adapt

The Google March 2026 core update began rolling out March 27. Learn what changed, which sites are affected, how AI Overviews...

AI answers now influence discovery and evaluation across search and chat experiences. Google’s CEO said AI Overviews reach 1.5 billion users per month, which makes AI visibility a real channel, not a trend to watch later.

The measurement problem is clear. Search Console does not show whether you appear in AI Overviews or whether you get cited inside AI chats, so you need purpose-built tracking and a repeatable process. You will learn what to measure, which tools to consider, and how to turn tracking into actions that support qualified leads and pipeline.

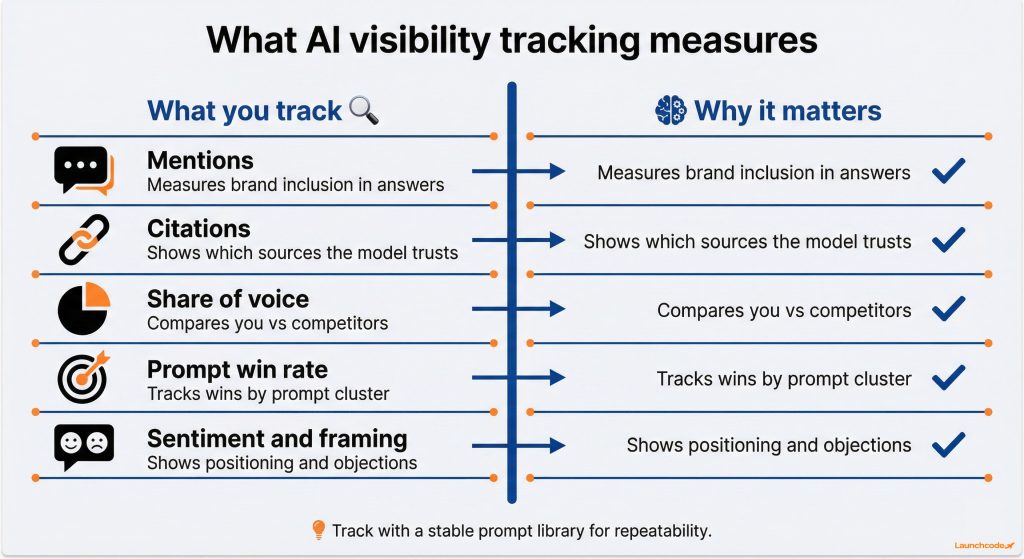

AI visibility tracking measures whether your brand and your pages appear inside AI-generated answers, how often you are cited, and how that changes over time across a consistent set of prompts tied to buyer intent. It complements keyword rankings, but it focuses on inclusion, citations, and competitive share of voice.
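The three metrics in that definition can be computed from stored answers. Below is a minimal Python sketch. It assumes each tracked prompt's answer has already been captured and reduced to the brands it names and the domains it cites. The `AnswerRecord` schema, the function names, and the example brands and domains are hypothetical, and commercial tools may define these metrics differently.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class AnswerRecord:
    """One captured AI answer for one tracked prompt (hypothetical schema)."""
    prompt: str
    brands_mentioned: set[str] = field(default_factory=set)
    cited_domains: set[str] = field(default_factory=set)


def inclusion_rate(records: list[AnswerRecord], brand: str) -> float:
    """Fraction of answers that name the brand at all."""
    if not records:
        return 0.0
    return sum(brand in r.brands_mentioned for r in records) / len(records)


def citation_rate(records: list[AnswerRecord], domain: str) -> float:
    """Fraction of answers that cite at least one source on the domain."""
    if not records:
        return 0.0
    return sum(domain in r.cited_domains for r in records) / len(records)


def share_of_voice(records: list[AnswerRecord]) -> dict[str, float]:
    """Each brand's fraction of all brand mentions across the answers."""
    counts = Counter(b for r in records for b in r.brands_mentioned)
    total = sum(counts.values())
    return {b: n / total for b, n in counts.items()} if total else {}


# Illustrative records; brands and domains are placeholders.
records = [
    AnswerRecord("best crm for startups", {"AcmeCRM", "RivalCRM"}, {"acme.example"}),
    AnswerRecord("crm pricing comparison", {"RivalCRM"}, {"rival.example"}),
    AnswerRecord("how to choose a crm", {"AcmeCRM"}),
    AnswerRecord("crm for agencies"),
]
print(inclusion_rate(records, "AcmeCRM"))        # 0.5
print(citation_rate(records, "acme.example"))    # 0.25
print(share_of_voice(records)["AcmeCRM"])        # 0.5
```

Share of voice here simply counts mentions. Some tools also weight a mention by its position in the answer or by the prompt's search demand.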

Clicks alone can miss upstream shifts. Pew’s analysis found that users clicked a traditional search result in 8% of visits to search pages with an AI summary, versus 15% of visits to pages without one, and clicked a link inside the summary itself in about 1% of visits to pages with a summary. That makes “being cited or mentioned in the answer” a measurable objective, not a soft brand metric.
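A quick worked calculation with the two click rates quoted above shows the size of the gap:

```latex
\frac{15\% - 8\%}{15\%} = \frac{7}{15} \approx 0.47
```

Searches with an AI summary produced roughly 47% fewer traditional-result clicks, or about half the click rate (8/15 ≈ 0.53).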

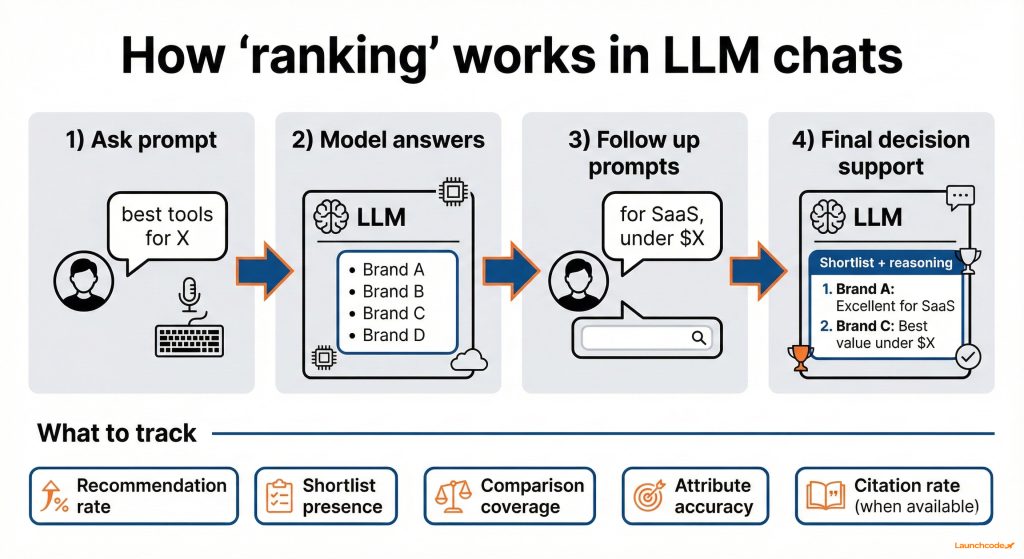

In LLM chats, you rarely “rank” as a blue link. You either get included, cited, recommended, or compared, and the model’s wording can move buyers closer to a shortlist without a click. LLM tracking tools measure that output layer across ChatGPT, Gemini, Copilot, and Perplexity by running controlled prompts and recording who gets named and which sources get referenced.
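Recording who gets named and which sources get referenced starts with parsing each answer. The following is a rough sketch that assumes you already have the answer text. It uses case-insensitive whole-word matching for brands and a simple URL regex for citations. The brand list and sample answer are hypothetical. Real tools also handle aliases, misspellings, and citations shown as cards or footnotes rather than inline URLs.

```python
import re
from urllib.parse import urlparse

# Simple inline-URL pattern; stops at whitespace, closing brackets, and quotes.
URL_PATTERN = re.compile(r"https?://[^\s)\]>\"']+")


def extract_mentions(answer: str, brands: list[str]) -> set[str]:
    """Return the tracked brands named in the answer (case-insensitive, whole words)."""
    found = set()
    for brand in brands:
        if re.search(rf"\b{re.escape(brand)}\b", answer, flags=re.IGNORECASE):
            found.add(brand)
    return found


def extract_cited_domains(answer: str) -> set[str]:
    """Return host names (without a leading www.) of URLs in the answer text."""
    domains = set()
    for url in URL_PATTERN.findall(answer):
        host = urlparse(url.rstrip(".,;")).hostname
        if host:
            domains.add(host.removeprefix("www."))
    return domains


# Hypothetical answer text captured from one prompt run.
answer = (
    "For small teams, AcmeCRM and RivalCRM are common picks "
    "(see https://www.acme.example/pricing and https://reviews.example/crm-2026)."
)
print(sorted(extract_mentions(answer, ["AcmeCRM", "RivalCRM", "OtherCRM"])))
print(sorted(extract_cited_domains(answer)))
```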

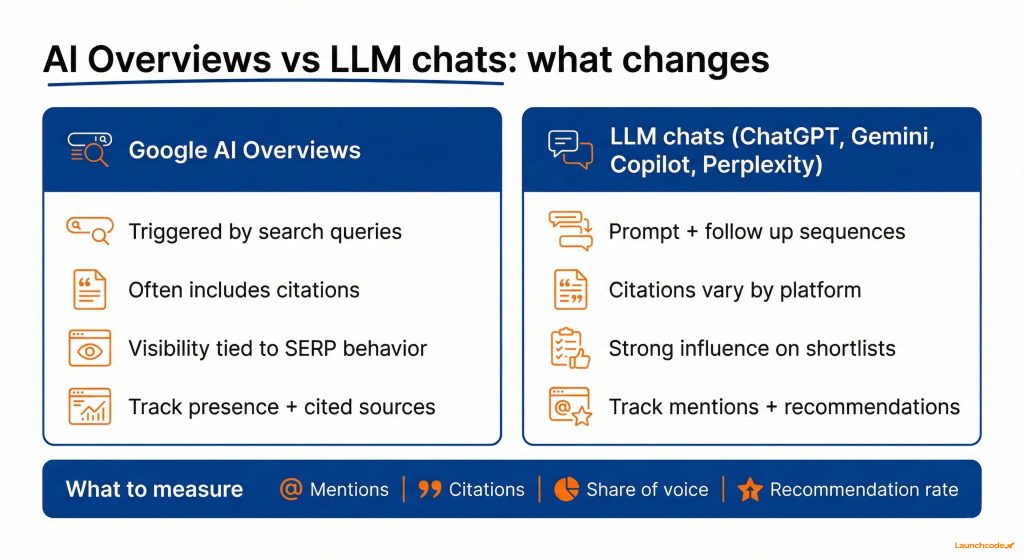

There are two practical differences versus AI Overviews:

Most teams get better results by clustering prompts by funnel stage and keeping them stable for at least four weeks.
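Clustering by funnel stage mainly changes how results roll up. The following is a minimal sketch. It assumes each tracked prompt carries one stage label and that you already know whether your brand was included in its latest answer. The stage names, prompts, and results are hypothetical.

```python
from collections import defaultdict

# Hypothetical prompt set: each tracked prompt is tagged with one funnel stage.
PROMPTS = {
    "what is a crm": "awareness",
    "crm vs spreadsheet": "consideration",
    "best crm for startups": "consideration",
    "acmecrm pricing": "decision",
}


def inclusion_by_stage(prompt_stage: dict[str, str], included: dict[str, bool]) -> dict[str, float]:
    """Average brand inclusion per funnel stage from per-prompt included/not-included results."""
    hits: dict[str, int] = defaultdict(int)
    totals: dict[str, int] = defaultdict(int)
    for prompt, stage in prompt_stage.items():
        totals[stage] += 1
        # Prompts with no recorded result count as not included.
        hits[stage] += included.get(prompt, False)
    return {stage: hits[stage] / totals[stage] for stage in totals}


results = {
    "what is a crm": False,
    "crm vs spreadsheet": True,
    "best crm for startups": False,
    "acmecrm pricing": True,
}
print(inclusion_by_stage(PROMPTS, results))
# {'awareness': 0.0, 'consideration': 0.5, 'decision': 1.0}
```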

Search Console is still essential, but it does not report AI Overview presence or citations, so it cannot answer the core question: are you showing up in AI answers for your category prompts? This is why third-party tools exist, especially for Google AI Overviews monitoring and multi-platform LLM visibility.

Search Engine Journal has called this out directly, including the point that Search Console does not provide an AI Overview presence dimension and does not track citations inside AI Overviews.

AI answers change due to model updates, prompt phrasing, location, and time. Treat tracking as directional measurement. Reduce noise with stable prompts, repeat runs, and a change log that notes major site updates and known model shifts.
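Reporting each prompt as a rate with an uncertainty band, rather than a single yes or no, puts the directional framing into numbers. This sketch uses the Wilson score interval, a standard interval for proportions, chosen here for illustration. The 6-of-10 example is made up, and z = 1.96 (roughly 95%) is an adjustable assumption.

```python
import math


def wilson_interval(successes: int, runs: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a mention rate observed over repeated runs of one prompt."""
    if runs == 0:
        return (0.0, 1.0)  # no data: the rate could be anything
    p = successes / runs
    denom = 1 + z**2 / runs
    center = (p + z**2 / (2 * runs)) / denom
    half = z * math.sqrt(p * (1 - p) / runs + z**2 / (4 * runs**2)) / denom
    return (max(0.0, center - half), min(1.0, center + half))


# Illustrative: the brand was mentioned in 6 of 10 repeat runs of the same prompt.
low, high = wilson_interval(6, 10)
print(f"mention rate 0.60, interval {low:.2f}-{high:.2f}")
```

A band this wide (about 0.31 to 0.83) is a reminder that ten runs support only directional conclusions. More runs per prompt narrow it.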

“Most teams lose trust in AI reporting when they change prompts every week. Lock the prompt set first, then measure and iterate.”

Natalie Brooks, Executive Assistant

The best LLM tracking tool matches your target platforms, produces repeatable measurements, and fits your operating rhythm so insights lead to shipped work. These five options cover most needs, from multi-platform visibility to Google AI Overviews-specific tracking.

| Tool | Who it fits | Key strength | Watch out for |
|---|---|---|---|
| Ahrefs Brand Radar | Teams that want a broad, multi-surface view | Competitive visibility reporting across AI platforms | Best fit if you already use Ahrefs workflows |
| Semrush One | Semrush users and SEO teams | AI visibility inside an SEO suite with prompt tracking | Suite depth can be heavy for small teams |
| Otterly.AI | Marketing teams that want to start monitoring fast | Straightforward brand mention and citation monitoring | Validate sampling frequency and evidence exports |
| Nightwatch AI tracking | Teams that already use rank tracking | Familiar tracker-style reporting with AI metrics | Confirm platform coverage for your buyers |
| SE Ranking AI Overviews Tracker | Teams focused on Google AI Overviews | AI Overviews citations and monitoring by keyword | Narrower focus if you need multi-platform reporting |

Ahrefs Brand Radar fits teams that want a broad view of AI visibility with strong competitive context. It works well when you want topic-level reporting, prompt coverage across multiple AI experiences, and a way to compare your brand presence versus category competitors.

What it is good at:

What to validate in your trial:

Read Ahrefs Brand Radar details on the product page for platform coverage and reporting claims.

Semrush One fits teams that already operate inside Semrush and want AI visibility reporting in the same environment as SEO and competitor research. It is useful when you want prompt tracking plus reporting that can sit next to your existing keyword, content, and competitive workflows.

What it is good at:

What to validate in your trial:

Review Semrush One documentation for feature coverage and workflow details.

Otterly.AI fits teams that want to start monitoring mentions and citations quickly across major AI platforms. It is a practical choice when you need fast signal, clear reports, and a monitoring style workflow that supports content and PR iteration.

What it is good at:

What to validate in your trial:

See the Otterly.AI site for stated platform coverage and positioning.

Nightwatch AI tracking fits teams that already use rank tracking and want an AI visibility layer presented in a familiar format. It can be a good fit when you want visibility, share of voice, and related metrics inside a tracker-style workflow.

What it is good at:

What to validate in your trial:

Nightwatch explains its AI tracking metrics and approach on its AI tracking page.

SE Ranking AI Overviews Tracker fits teams that want Google AI Overviews visibility and citation tracking tied to a keyword set. It is strongest when Google Search is the core surface you care about and you need to see which sources AI Overviews cite for priority queries.

What it is good at:

What to validate in your trial:

SE Ranking’s AI Overviews tracker page outlines the product and the reporting gap it addresses.

Choose a tool by starting with your target platforms and your business goal, then score repeatability, evidence capture, and workflow fit. If your tool cannot save outputs and rerun prompts consistently, you will struggle to build trust in the numbers.

Score each tool from 1 to 5 on platform coverage, repeatability, evidence capture, and workflow fit.

| Team type | Likely best fit | Why |
|---|---|---|
| SEO team already on Semrush | Semrush One | Keeps AI visibility inside existing workflows |
| Brand team that wants fast monitoring | Otterly.AI | Quick setup and clear mention and citation reporting |
| Team focused on Google Search shifts | SE Ranking AI Overviews Tracker | Citation tracking and monitoring focused on AI Overviews |
| Team that wants broader competitive visibility | Ahrefs Brand Radar | Broad dataset and competitive reporting |
| Team that prefers tracker-style reporting | Nightwatch AI tracking | Familiar tracking workflow with AI metrics |
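If you want the 1-to-5 trial scores to roll up into a single comparison, a weighted sum is one simple option. The criteria below come from the selection factors named in this section (platform coverage, repeatability, evidence capture, workflow fit). The weights and example scores are hypothetical placeholders, not ratings of the tools listed above.

```python
# Hypothetical weights; they sum to 1.0 so the result stays on the 1-5 scale.
WEIGHTS = {
    "platform_coverage": 0.3,
    "repeatability": 0.3,
    "evidence_capture": 0.2,
    "workflow_fit": 0.2,
}


def weighted_score(scores: dict[str, int], weights: dict[str, float] = WEIGHTS) -> float:
    """Combine 1-5 criterion scores into one weighted score, rounded to 2 decimals."""
    missing = weights.keys() - scores.keys()
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    for name in weights:
        if not 1 <= scores[name] <= 5:
            raise ValueError(f"{name} must be scored 1-5, got {scores[name]}")
    return round(sum(weights[name] * scores[name] for name in weights), 2)


# Placeholder trial scores for two unnamed tools.
trial = {
    "Tool A": {"platform_coverage": 5, "repeatability": 3, "evidence_capture": 4, "workflow_fit": 3},
    "Tool B": {"platform_coverage": 3, "repeatability": 5, "evidence_capture": 4, "workflow_fit": 4},
}
ranked = sorted(trial, key=lambda t: weighted_score(trial[t]), reverse=True)
print([(t, weighted_score(trial[t])) for t in ranked])
```

Weighting repeatability as heavily as coverage reflects the point above: a tool that cannot rerun prompts consistently produces numbers your team will not trust.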

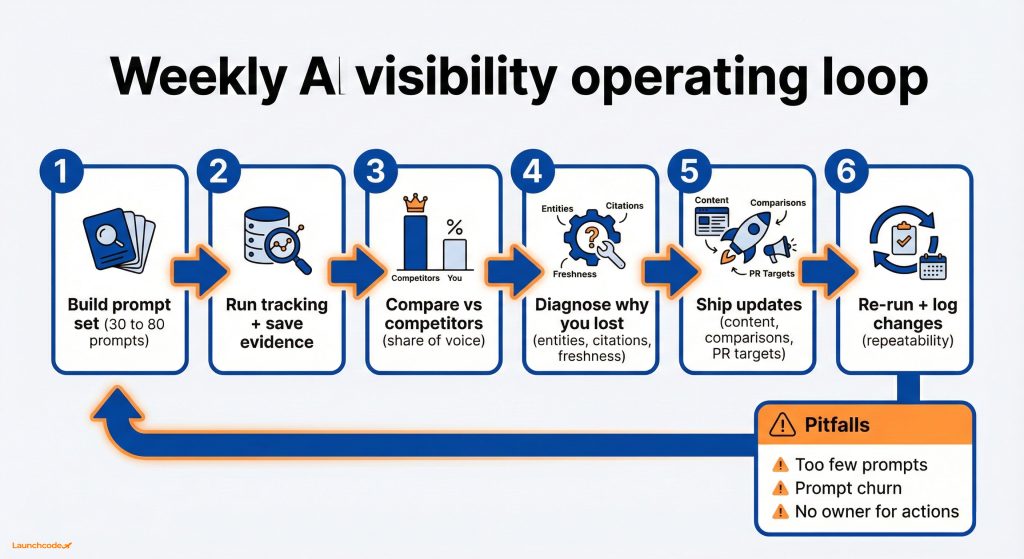

Tracking matters when it changes what you ship. Run a weekly visibility loop that ends with content updates, stronger citations, and clearer positioning for high-intent prompts. This keeps the work tied to outcomes, not dashboards.
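A minimal sketch of the review step, assuming you store one mention rate per prompt per week (for example, from repeat runs). It turns the week's numbers into a short, ranked list of prompts to act on. The 20-point drop threshold and five-item cap are hypothetical defaults; set the threshold above the run-to-run noise you actually observe.

```python
from dataclasses import dataclass


@dataclass
class PromptResult:
    """Week-over-week mention rate for one tracked prompt (hypothetical schema)."""
    prompt: str
    stage: str         # funnel stage label
    last_week: float   # mention rate across repeat runs, 0-1
    this_week: float


def weekly_action_list(results: list[PromptResult], drop_threshold: float = 0.2,
                       limit: int = 5) -> list[str]:
    """Return up to `limit` review tasks for prompts whose mention rate fell by
    at least `drop_threshold`, largest drop first."""
    drops = [r for r in results if r.last_week - r.this_week >= drop_threshold]
    drops.sort(key=lambda r: r.last_week - r.this_week, reverse=True)
    return [
        f"Review pages for '{r.prompt}' ({r.stage}): {r.last_week:.0%} -> {r.this_week:.0%}"
        for r in drops[:limit]
    ]


week = [
    PromptResult("best crm for startups", "consideration", 0.8, 0.3),
    PromptResult("crm pricing comparison", "decision", 0.7, 0.4),
    PromptResult("what is a crm", "awareness", 0.5, 0.45),
]
actions = weekly_action_list(week)
for action in actions:
    print(action)
```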

“Visibility metrics only matter if they drive action. Your weekly review should end with a short list of updates that your team ships that week.”

Tanner Medina, Co-Founder and Chief Growth Officer

Launchcodex uses a similar weekly loop in SEO and GEO programs to connect AI visibility changes to page updates, citations, and pipeline. If you want that process as a managed system, explore our SEO services.

You can get useful tracking live in two weeks by starting small, controlling variance, and focusing on high-intent prompts that map to revenue. The goal is a clean baseline, a short action list, and a second run that confirms movement.
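To check whether the second run confirms movement rather than noise, one standard statistical option, swapped in here for illustration, is a two-proportion z-test on mention counts from the baseline and follow-up runs. The sketch assumes repeat runs behave like independent samples, which AI answers satisfy only roughly, and the counts are illustrative.

```python
import math


def two_proportion_z(hits_a: int, runs_a: int, hits_b: int, runs_b: int) -> float:
    """z statistic for the change in mention rate from run A (baseline) to run B."""
    if runs_a <= 0 or runs_b <= 0:
        raise ValueError("each run needs at least one sample")
    p_a, p_b = hits_a / runs_a, hits_b / runs_b
    pooled = (hits_a + hits_b) / (runs_a + runs_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / runs_a + 1 / runs_b))
    # If the brand was mentioned in every run or in no run across both samples,
    # there is no variance to test against.
    return 0.0 if se == 0 else (p_b - p_a) / se


# Illustrative counts: baseline mentioned in 18 of 60 runs, second run in 33 of 60.
z = two_proportion_z(18, 60, 33, 60)
print(f"z = {z:.2f}")  # beyond about ±1.96 is unlikely to be noise at the 5% level
```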

For examples of shipped work and outcomes, review our case studies.

An LLM tracking tool monitors how AI systems mention your brand and cite sources for a defined set of prompts. It reports mentions, citations, share of voice, and changes over time.

Search Console does not reliably show AI Overview visibility. Industry reporting notes that it provides no AI Overview presence or citation tracking, which is why dedicated AI Overviews trackers exist.

Start with 30 to 80 prompts tied to high-intent topics. Keep them stable for at least four weeks so changes reflect real movement, not prompt edits.
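A small guard can enforce both rules: a prompt count inside the suggested range, and no edits during the four-week window. In this sketch the set is fingerprinted with SHA-256 after lowercasing, trimming, and sorting, so reordering or case changes do not count as edits. The function names and dates are hypothetical.

```python
import hashlib
from datetime import date, timedelta

MIN_PROMPTS, MAX_PROMPTS = 30, 80  # starting range suggested above
LOCK_PERIOD = timedelta(weeks=4)


def fingerprint(prompts: list[str]) -> str:
    """Stable hash of the prompt set; any reworded, added, or removed prompt changes it."""
    canonical = "\n".join(sorted(p.strip().lower() for p in prompts))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def check_prompt_set(prompts: list[str], locked_hash: str,
                     locked_on: date, today: date) -> list[str]:
    """Return warnings if the set is out of range or changed inside the lock window."""
    warnings = []
    if not MIN_PROMPTS <= len(prompts) <= MAX_PROMPTS:
        warnings.append(f"{len(prompts)} prompts; start with {MIN_PROMPTS}-{MAX_PROMPTS}")
    if fingerprint(prompts) != locked_hash and today - locked_on < LOCK_PERIOD:
        warnings.append("prompt set changed before the 4-week lock ended; trends are not comparable")
    return warnings


prompts = [f"hypothetical prompt {i}" for i in range(40)]
locked = fingerprint(prompts)
clean = check_prompt_set(prompts, locked, date(2026, 1, 5), date(2026, 1, 19))
edited = prompts[:-1] + ["a reworded prompt"]
changed = check_prompt_set(edited, locked, date(2026, 1, 5), date(2026, 1, 19))
print(clean, changed)
```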

AI outputs vary due to model updates, prompt phrasing, location, and timing. Reduce noise with repeat sampling, consistent prompts, and a change log.

Share of voice and citation rate tend to be the most actionable metrics. They show whether you appear and whether AI systems trust your sources enough to reference them.

Map prompts to funnel stages, ship targeted page updates, and monitor assisted conversions and lead quality for the topics where visibility improves.
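One way to line these up is to join topic-level visibility changes with lead metrics from your analytics or CRM, then review only the topics where visibility clearly rose. This sketch uses a hypothetical schema, a made-up 10-point minimum gain, and illustrative numbers. It only places the figures side by side and does not show that visibility caused the conversions.

```python
from dataclasses import dataclass


@dataclass
class TopicSnapshot:
    """Visibility and lead metrics for one topic over two periods (hypothetical schema)."""
    topic: str
    stage: str                 # funnel stage the topic's prompts map to
    visibility_before: float   # e.g. share of voice, 0-1
    visibility_after: float
    assisted_conversions: int  # from analytics or CRM for the later period
    qualified_leads: int


def improved_topics(snapshots: list[TopicSnapshot], min_gain: float = 0.1) -> list[TopicSnapshot]:
    """Topics whose visibility rose by at least min_gain, largest gain first."""
    gained = [s for s in snapshots if s.visibility_after - s.visibility_before >= min_gain]
    return sorted(gained, key=lambda s: s.visibility_after - s.visibility_before, reverse=True)


snapshots = [
    TopicSnapshot("crm for startups", "consideration", 0.20, 0.45, 12, 5),
    TopicSnapshot("crm pricing", "decision", 0.10, 0.40, 9, 6),
    TopicSnapshot("what is a crm", "awareness", 0.30, 0.32, 20, 1),
]
for s in improved_topics(snapshots):
    gain = s.visibility_after - s.visibility_before
    print(f"{s.topic} ({s.stage}): +{gain:.0%} visibility, "
          f"{s.assisted_conversions} assisted conversions, {s.qualified_leads} qualified leads")
```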
