How to measure creative performance beyond CTR

CTR only tells part of the story. Learn the full-funnel framework for measuring creative performance, from thumbstop rate an...

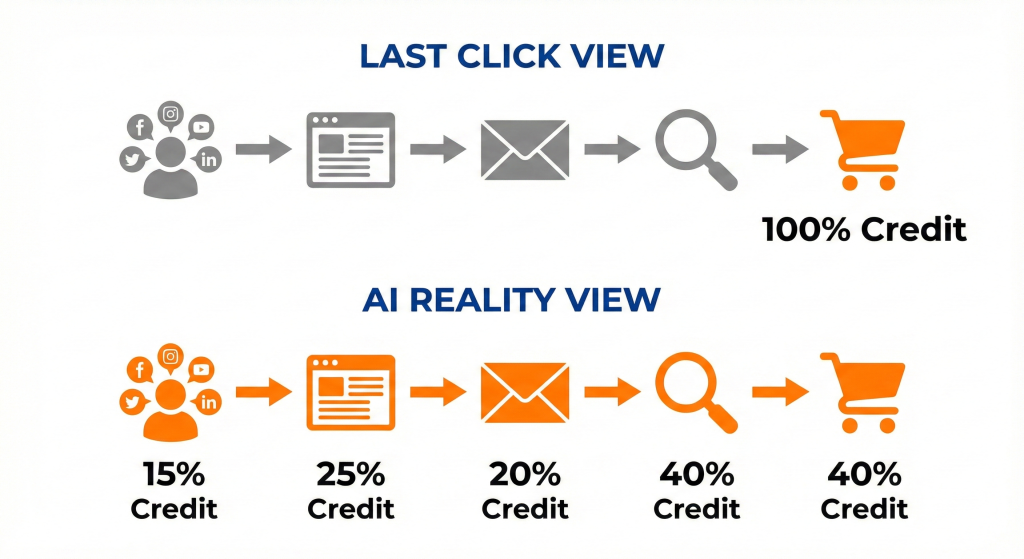

Last click attribution is simple and familiar, but it hides the impact of upper-funnel and assist channels. In a world of long journeys, fragmented devices, and AI search, that simplicity turns into distortion.

In this article, you will learn what AI-driven attribution models actually do, how they differ from classic rules-based models, how they fit alongside marketing mix modeling and experiments, and how to roll them out without blowing up trust in your numbers.

Last click attribution gives 100 percent of the credit to the final touch before a conversion. That made sense when journeys were short and tracking was simple. Today, research from Forrester and others shows that more than 70 percent of customers touch a brand multiple times before converting, so last click ignores most of what drives demand.

Recent surveys show how deep the problem goes. In a 2024 study by eMarketer and Snap, 78.4 percent of senior marketers spending over $500,000 on digital ads still reported using last click and web analytics as their main way to measure media effectiveness. At the same time, multi-touch attribution adoption has hovered at roughly 50 to 60 percent of businesses over several years, which suggests that many teams know they need more but have not fully moved away from last click.

Last click consistently over-credits branded search, direct traffic, and bottom-funnel retargeting. It under-credits channels that create demand, such as paid social, partner activity, content, and top-of-funnel search. That distortion pushes budget toward channels that harvest demand rather than create it.
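
To make the distortion concrete, here is a minimal sketch that scores the same converting journeys under last click and under a simple linear (even-split) rule. The journeys and channel names are hypothetical, and linear attribution is used only as a familiar rules-based contrast, not as a recommended model.

```python
from collections import defaultdict

# Hypothetical converting journeys: ordered channel touches, one conversion each.
journeys = [
    ["paid_social", "content", "branded_search"],
    ["paid_social", "branded_search"],
    ["content", "email", "branded_search"],
    ["partner", "direct"],
]

def last_click(paths):
    """Give 100 percent of each conversion to the final touch."""
    credit = defaultdict(float)
    for path in paths:
        credit[path[-1]] += 1.0
    return dict(credit)

def linear(paths):
    """Split each conversion evenly across every touch in the path."""
    credit = defaultdict(float)
    for path in paths:
        share = 1.0 / len(path)
        for channel in path:
            credit[channel] += share
    return dict(credit)

last = last_click(journeys)
lin = linear(journeys)
for channel in sorted(set(last) | set(lin)):
    print(f"{channel:15s} last_click={last.get(channel, 0.0):.2f} "
          f"linear={lin.get(channel, 0.0):.2f}")
```

In this toy data, last click hands three of the four conversions to branded search and none to paid social or content, even though those channels appear in most paths. Both rules still distribute exactly four conversions in total; only the split changes.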

In B2B, the effect is worse. Complex deals often involve content touches, outbound emails, partner referrals, events, and multiple decision makers. When your model only reports the final branded query or email click, it becomes almost impossible to show the value of early-stage programs.

Some practical signals include:

These patterns are strong clues that you need a richer model rather than more budget tweaks inside the same last click lens.

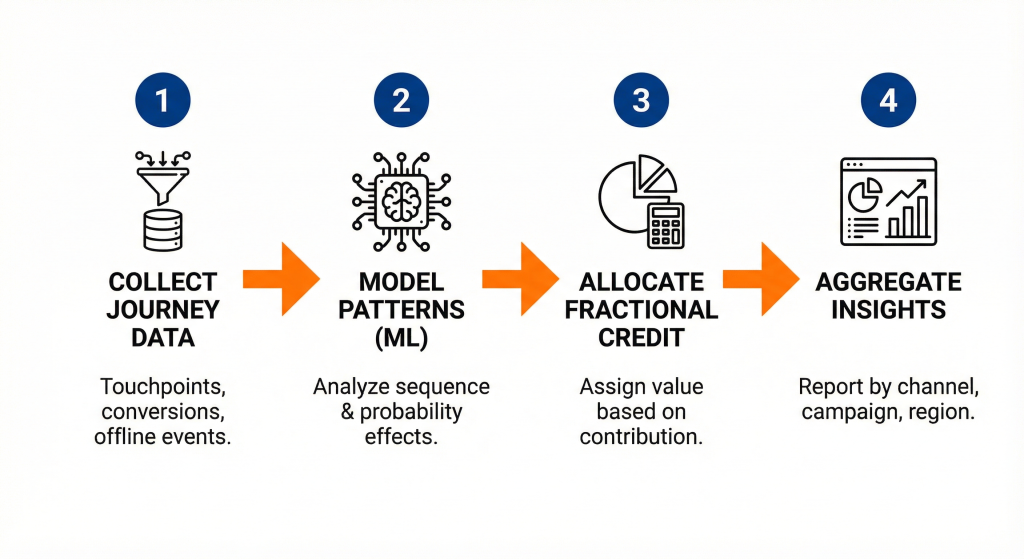

AI-driven attribution models use machine learning on your full set of touchpoints to estimate how each interaction changes the probability of conversion. Instead of splitting credit using fixed rules, the model learns from patterns in your data. That allows it to capture channel interactions, sequence effects, and diminishing returns in ways simple rule-based models cannot.

Academic work on this is no longer theoretical. A 2024 paper on intelligent attribution modeling reported that a Bayesian network model reached predictive accuracy near 0.95 for ecommerce conversion probabilities. Large platforms apply similar ideas at scale. Meta has described an AI-powered multi-touch attribution system that blends Shapley values, counterfactual modeling, and federated learning to measure incremental ad impact while protecting user privacy.
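
As an illustration of the Shapley idea mentioned above, the sketch below computes Shapley values for three channels from a table of conversion rates per channel combination. The rates are invented for the example, and this is a textbook calculation under those assumptions, not a description of how Meta or any vendor implements it.

```python
from itertools import combinations
from math import factorial

# Hypothetical conversion rate for users exposed to exactly this set of channels.
conversion_rate = {
    frozenset(): 0.00,
    frozenset({"social"}): 0.02,
    frozenset({"search"}): 0.04,
    frozenset({"email"}): 0.01,
    frozenset({"social", "search"}): 0.09,
    frozenset({"social", "email"}): 0.04,
    frozenset({"search", "email"}): 0.06,
    frozenset({"social", "search", "email"}): 0.12,
}

def shapley_values(channels, value):
    """Average each channel's marginal contribution over all coalitions."""
    n = len(channels)
    result = {}
    for channel in channels:
        others = [c for c in channels if c != channel]
        total = 0.0
        for size in range(n):
            # Standard Shapley weight for a coalition of this size.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                coalition = frozenset(subset)
                total += weight * (value[coalition | {channel}] - value[coalition])
        result[channel] = total
    return result

shap = shapley_values(["social", "search", "email"], conversion_rate)
for channel, credit in shap.items():
    print(f"{channel}: {credit:.4f}")
```

The credits always sum to the conversion rate of the full channel set minus the rate with no channels, so nothing is double counted. With real data the hard part is estimating the coalition values reliably, which is where data volume and quality matter.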

At a simple level, AI-driven attribution models:

The result is not perfect truth, but it is usually more honest than last click, especially in journeys where the last touch is rarely the main driver.

AI-driven attribution does need enough clean data to learn real patterns. That means:

For smaller B2B funnels or new products with limited data, the right move is often to start with simpler models and apply AI-driven attribution at higher aggregation levels, then validate with experiments.

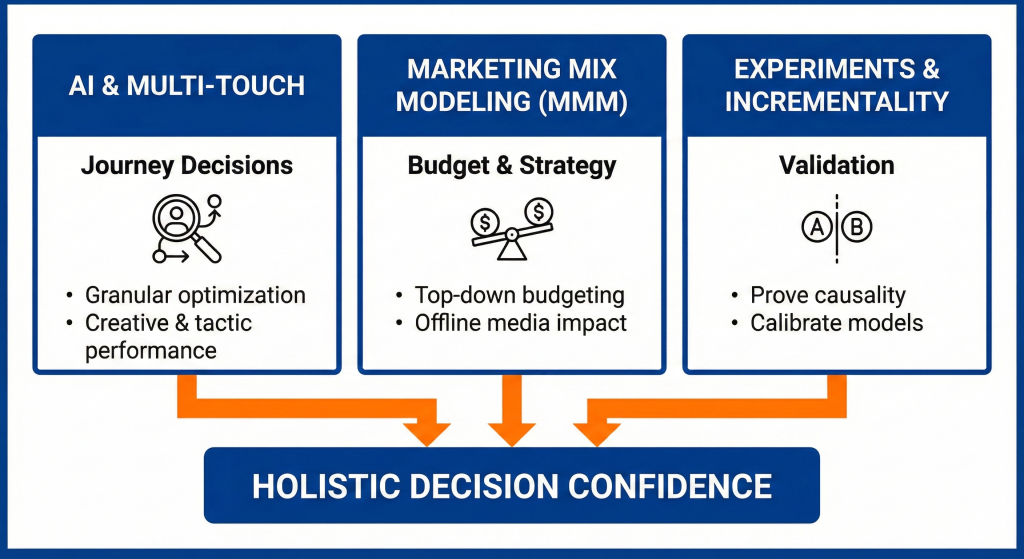

AI-driven attribution is not a replacement for every other measurement method. The strongest marketers combine user-level attribution, marketing mix modeling, and incrementality testing. Each method answers a different question, and together they give a more stable view than any single model.

Market research reflects this blended approach. Multi-touch attribution is now a market worth billions of dollars and is expected to grow at a compound annual rate above 13 percent over the next few years. At the same time, modern marketing mix tools and experimentation platforms are becoming more accessible. The opportunity is to design a measurement stack that uses each tool for what it does best.

Multi-touch and AI-driven attribution shine when you need to understand how channels and tactics work together along the journey. They help you answer questions such as:

A simple way to use them in practice:

The goal is not to find a perfect answer, but to upgrade from one narrow view to a richer, more defensible picture.

Marketing mix modeling works at an aggregate level and uses historical spend and outcome data to estimate how channels drive revenue over time. It is especially useful when user-level tracking is limited by privacy rules or technical constraints.
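
A heavily simplified sketch of the idea: transform weekly spend with a geometric adstock (carryover) and regress revenue on it. The spend figures, the decay of 0.5, and the single-channel setup are all assumptions for illustration; the revenue series is synthetic, built from a known baseline and effect so the fit can be checked. Real marketing mix models add multiple channels, saturation curves, seasonality, and uncertainty estimates.

```python
def adstock(spend, decay):
    """Geometric adstock: each week keeps a share of the previous week's effect."""
    carried = 0.0
    out = []
    for s in spend:
        carried = s + decay * carried
        out.append(carried)
    return out

def fit_line(x, y):
    """Ordinary least squares for y = intercept + slope * x (one predictor)."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return mean_y - slope * mean_x, slope

weekly_spend = [10, 0, 0, 20, 5, 0, 15, 10]  # hypothetical, in thousands
decay = 0.5                                   # assumed carryover rate

adstocked = adstock(weekly_spend, decay)
# Synthetic revenue: baseline of 100 plus 3.0 per unit of adstocked spend.
weekly_revenue = [100 + 3.0 * a for a in adstocked]

baseline, effect = fit_line(adstocked, weekly_revenue)
print(f"baseline={baseline:.2f} effect_per_adstocked_unit={effect:.2f}")
```

Because the model only needs aggregate spend and outcome series, it keeps working when user-level paths are unavailable.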

In practice:

No attribution model should be accepted without checks. Controlled experiments help validate AI-driven models.
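
One common form of such a check is a holdout test: withhold ads from a random control group and compare conversion rates. The sketch below computes the lift and a two-proportion z-test using only the standard library. The group sizes and conversion counts are made up, and the function name `lift_test` is just an illustrative helper.

```python
from math import erf, sqrt

def lift_test(conv_test, n_test, conv_ctrl, n_ctrl):
    """Compare conversion rates between an exposed group and a holdout group."""
    p_test = conv_test / n_test
    p_ctrl = conv_ctrl / n_ctrl
    # Pooled rate under the null hypothesis of no difference.
    pooled = (conv_test + conv_ctrl) / (n_test + n_ctrl)
    se = sqrt(pooled * (1 - pooled) * (1 / n_test + 1 / n_ctrl))
    z = (p_test - p_ctrl) / se
    # Two-sided p-value from the standard normal CDF.
    p_value = 2 * (1 - 0.5 * (1 + erf(abs(z) / sqrt(2))))
    return {
        "abs_lift": p_test - p_ctrl,
        "rel_lift": (p_test - p_ctrl) / p_ctrl,
        "z": z,
        "p_value": p_value,
    }

# Hypothetical experiment: 10,000 users per group.
result = lift_test(conv_test=260, n_test=10_000, conv_ctrl=200, n_ctrl=10_000)
print(result)
```

If the model credits a channel with far more conversions than the measured incremental lift supports, that gap is a signal to recalibrate the model rather than to trust it blindly.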

You can:

Practitioners like Tom Bukevicius often recommend using multiple views on performance and comparing them to blended efficiency metrics. That mindset keeps your approach pragmatic and reduces the risk of chasing model noise.
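
A small sketch of that comparison, assuming "blended efficiency" means total revenue divided by total spend (the source does not define the metric, so treat this as one possible reading). All figures and the model labels `last_click` and `data_driven` are hypothetical.

```python
spend = {"search": 40_000, "social": 30_000, "email": 5_000}
total_revenue = 300_000  # all revenue from the finance system, attributed or not

# Revenue each model assigns to each channel (hypothetical outputs).
attributed = {
    "last_click": {"search": 210_000, "social": 40_000, "email": 30_000},
    "data_driven": {"search": 150_000, "social": 100_000, "email": 30_000},
}

def roas_by_channel(revenue, channel_spend):
    """Return on ad spend per channel under one attribution view."""
    return {ch: revenue[ch] / channel_spend[ch] for ch in channel_spend}

# Blended efficiency ignores attribution entirely.
blended = total_revenue / sum(spend.values())
print(f"blended efficiency: {blended:.2f}")

for model, revenue in attributed.items():
    roas = roas_by_channel(revenue, spend)
    coverage = sum(revenue.values()) / total_revenue
    summary = ", ".join(f"{ch}={value:.2f}" for ch, value in roas.items())
    print(f"{model}: {summary} (attributes {coverage:.0%} of revenue)")
```

The blended number does not change when you switch models, so it works as a fixed reference point: if a model change makes every channel look better while the blended figure stays flat, the model is redistributing credit, not revealing new performance.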

An effective AI-driven attribution program rests on a solid data foundation, the right tools for your stage, and clear workflows for how decisions will use the new signals. Technology is important, but structure and process matter just as much.

Teams that treat attribution as a one-time tool purchase often get stuck. The gains appear for a few months, then trust erodes as numbers change and no one can explain why. A better approach is to design the stack around business questions, then plug AI into specific points in that flow.

Before you add any AI-driven attribution tool:

This work is not glamorous, but every AI model relies on it. If you skip it, the model will amplify existing tracking issues rather than fix them.

Tool choice should reflect your channel mix, data maturity, and resources.

When you already invest in AI and automation, such as systems that route leads or manage campaigns, AI-driven attribution becomes another object inside that system. For example, Launchcodex often treats model outputs as one more data layer inside a broader growth operating system rather than a disconnected report.

For B2B and SaaS:

For ecommerce:

In both cases, the important part is the workflow. Decide where in your planning and optimization process the AI model will inform decisions, and document that process.

Privacy regulations and platform changes are reshaping what data you can collect and how you can use it. At the same time, AI search and answer engines introduce zero-click journeys that rarely show up as a clean sequence of tracked clicks. AI-driven attribution must account for both trends if it is going to be useful.

Industry commentary from groups like Market Science and LeadsRx highlights a common pattern. Teams that combine strong first-party data with AI-driven models are better positioned to maintain measurement quality while staying compliant with rules such as GDPR and CCPA. Those that rely on old third-party tracking patterns will see their models degrade over time.

A privacy-aware approach focuses on:

For attribution, that means:

AI-driven models can handle noisy or incomplete data better than simple rules, but they still need a base of consistent, lawful inputs.

AI search and answer engines shift many early interactions off your site. A prospect may read an answer in a generative search result, later see your brand mentioned in a roundup, and only convert after searching your name directly. Last click only sees the branded search. Even a strong click-based multi-touch model may struggle.

To adapt, you can:

When you publish content on topics like AI SEO and AI workflows for marketing, internal links between those resources and your attribution article help both readers and AI systems understand the relationship between discovery channels and conversion measurement.

Rolling out a new attribution model changes reported performance by channel. That can disrupt relationships with finance, leadership, and channel owners who have built plans around last click numbers. The rollout plan matters as much as the model choice.

Performance leaders who navigate this well treat attribution as a decision support system rather than a single source of truth. They introduce models gradually, check them against experiments, and use multiple views on performance to build confidence.

A practical rollout plan:

By framing the model as a way to improve decisions on specific questions, you reduce the risk of it feeling like a threat to stakeholders.

Model details matter, but most stakeholders care about clarity and consistency. When you present AI-driven attribution outputs:

Tom Bukevicius describes this mindset as layering models and comparing views against blended metrics. That framing helps non-technical leaders see attribution as an input to judgement, not a replacement for it.

When moving beyond last click, avoid these traps:

A disciplined rollout and a clear communication plan turn AI-driven attribution from a risky change into a structured upgrade to your measurement system.

Last click attribution made sense when journeys were short and tracking was simple. Today, it hides the real drivers of demand and leads to poor allocation decisions. AI-driven attribution models offer a more honest view by learning from full journeys, working with modeled data, and fitting into a broader system that includes MMM and experiments.

The path forward is to treat attribution as a layered system. Strengthen your first-party data, run AI-driven models alongside existing views, validate with experiments, and use the combined insights to adjust your channel mix. If you want support building that kind of measurement system, a partner like Launchcodex can help design the data foundation, models, workflows, and case study angles so attribution becomes a daily decision tool rather than a static dashboard.

No. While very complex models need more data, many vendors now offer AI-driven attribution that works well at channel or campaign group level for mid-sized budgets. The key is to match the model’s granularity to your data volume and validate it with simple tests.

Google Ads data-driven attribution is a specific implementation inside one platform. Broader AI-driven attribution often spans multiple channels, includes offline data, and may use more advanced or custom modeling techniques. Both use machine learning, but cross-channel AI models give a wider view of your marketing mix.

You can still benefit from AI-driven attribution by modeling at higher levels, such as channel or campaign group, and focusing on qualified pipeline or revenue rather than lead volume. Combine those results with MMM and a few well-designed experiments to cross-check the story.

Privacy rules reduce the amount of raw user-level data you can collect, but they do not stop attribution. Instead, you shift toward stronger first-party data, aggregation, and modeled signals. AI models are well-suited to this environment as long as they work on consented data and respect legal constraints.

You can often see directional insights within one to three months of running an AI-driven model in parallel with last click. The key is to frame up front which decisions you want to improve, such as reallocating spend between search and social, and to track the impact of changes driven by the new view.
