LLM search volume: What it is, how to track it, and whether it's worth your attention

No AI platform publishes LLM query data. Learn what LLM search volume actually means, how to approximate it using free and p...

Every marketing team now gets the same question from leadership: how do we show up in ChatGPT? Answering it requires a new mental model because the tools and metrics built for traditional search do not apply to AI platforms. There is no keyword planner equivalent. The volume exists, but it sits inside proprietary systems that were not built to share it.

This article explains what LLM search volume is, why no direct metric exists, and how to approximate it with methods ranging from free to enterprise-grade. It gives you a clear verdict on whether tracking it belongs in your reporting stack today, and what to start doing first.

LLM search volume refers to the estimated frequency of prompts users submit to large language models on platforms such as ChatGPT, Perplexity, Google AI Overviews, and Gemini. Unlike traditional search engines, no AI platform publishes this data, so marketers have no direct equivalent to the monthly search volume figures they pull from Ahrefs or Google Keyword Planner.


Traditional search volume metrics work because search engines log every query, index the exact phrase, count the frequency, and make that data accessible to third-party tools. AI platforms do not operate this way.

LLMs are probabilistic systems, not indexed databases. Two users asking the same question in slightly different ways can receive different responses. The same user asking the same prompt twice may get a meaningfully different answer. As LLM optimization research from Search Engine Land explains, LLM interactions are conversational and variable, which makes the stable, repetitive patterns that traditional volume metrics rely on much harder to establish at small sample sizes.

There is also a structural access problem. Google introduced Keyword Planner because it served the ad business model. AI platforms have no equivalent commercial incentive to publish prompt frequency data. OpenAI, Google, and Anthropic do not surface how often any specific topic is queried.

Before you decide whether to act, consider the scale. LLM-based search accounted for approximately 5.6% of US desktop search traffic as of June 2025, up from roughly half that figure a year earlier, according to an analysis of LLM vs traditional search trajectories from TTMS. Google still processes around 373 times more queries than ChatGPT, and all AI tools combined made up less than 2% of the global search market in 2024, per SparkToro research covered in Webflow's breakdown of AI's impact on search.

Those numbers argue against overreacting. But the growth rate changes the calculation. AI-referred sessions grew 527% year-over-year between early 2024 and early 2025, according to Previsible's 2025 AI Discovery Report, which analyzed 1.96 million LLM sessions across twelve months. The absolute volume is modest. The trajectory is not.

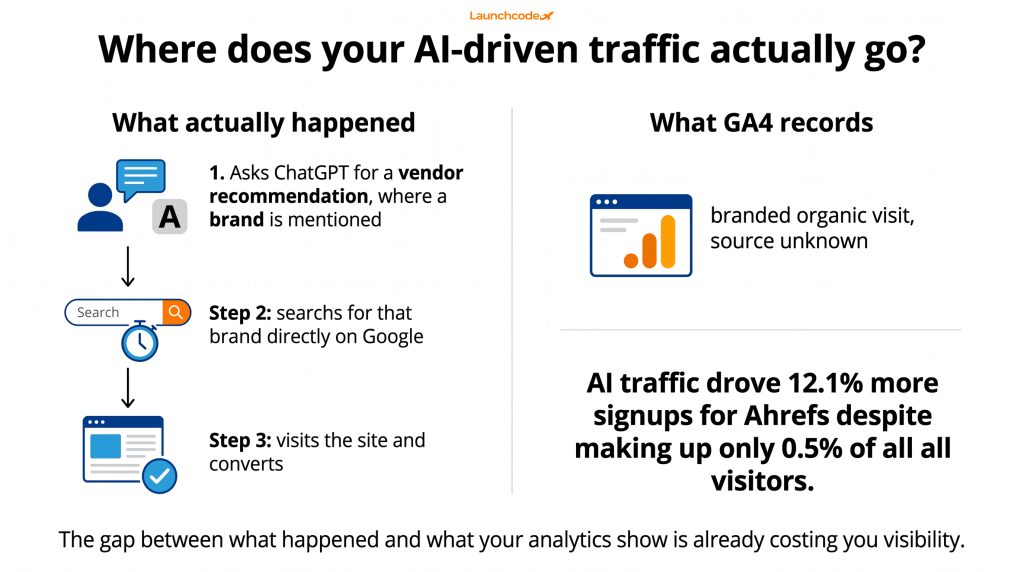

A significant share of AI-influenced visits never appears in standard LLM traffic reports. When a user discovers a brand through ChatGPT and visits the site later, that session typically registers as direct traffic in GA4. Many AI platforms also strip referrer data before sending the user to a destination page. Teams relying on default analytics are already undercounting the business impact of AI search, often by a large margin.

Ahrefs documented this directly: AI search visitors accounted for 12.1% of Ahrefs signups despite making up only 0.5% of all visitors. That gap reflects both the high-intent nature of LLM users and the attribution problems that hide their actual volume.

You can establish a meaningful baseline without any paid tool. In GA4, navigate to the Explore section, create a blank report, and set Session source/medium as the primary dimension. Add a session segment using a regex filter that matches known LLM referrers, including chatgpt.com, chat.openai.com, perplexity.ai, claude.ai, and gemini.google.com. Switch to a line graph to visualize trends over time.
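
Before pasting a pattern into GA4, you can prototype the referrer filter locally. The sketch below is a minimal example. The hostname list is an assumption built from the platforms named above plus chatgpt.com, ChatGPT's current domain, so check which sources actually show up in your own property. The pattern depends on substring matching, which is how Python's `re.search` behaves. Confirm whether the GA4 condition you choose does a full or a partial match.

```python
import re

# Assumed LLM referrer hostnames; extend this list with whatever sources
# actually appear in your own GA4 property.
LLM_REFERRER_PATTERN = re.compile(
    r"(chatgpt\.com|chat\.openai\.com|perplexity\.ai|claude\.ai|gemini\.google\.com)"
)


def is_llm_source(session_source_medium: str) -> bool:
    """Return True if a GA4 'session source / medium' value looks like an LLM referrer."""
    return bool(LLM_REFERRER_PATTERN.search(session_source_medium.lower()))


# Example values in GA4's "source / medium" format.
for value in ["chatgpt.com / referral", "google / organic", "perplexity.ai / referral"]:
    print(value, "->", is_llm_source(value))
```

The string inside `re.compile` is the part you would paste into the segment condition. GA4 uses RE2 syntax, and simple alternations like this one are compatible with it.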

This segment will not capture all LLM-driven business. Visits that arrive as direct traffic after an AI interaction will remain invisible. But it gives you a tracked baseline from today forward. A practical complement is adding "AI tool (such as ChatGPT or Perplexity)" as a source option in your lead intake forms. Many users will mention it voluntarily, and that self-reported data costs nothing to collect.

AI-driven visits often behave like brand search: the user arrives with intent already formed, but the source that created that intent is invisible in your reporting. A prospect asks ChatGPT to recommend a vendor, gets an answer, notes the brand name, and searches for it directly two days later. GA4 records a branded organic visit. The AI recommendation that started the process is not captured anywhere.

"We started tracking how clients describe their lead sources during onboarding. ChatGPT and Perplexity come up more often than most people expect, and those leads tend to move through intake faster. The attribution gap is real." Brittany Charles, SVP Client Services, Launchcodex

Gartner estimates that traditional search volume will drop 25% by 2026 as users shift queries to AI chatbots and virtual agents, as cited in Meltwater's guide to tracking LLM visibility. If that shift is already underway and your analytics do not reflect it, your understanding of where growth actually comes from is incomplete.

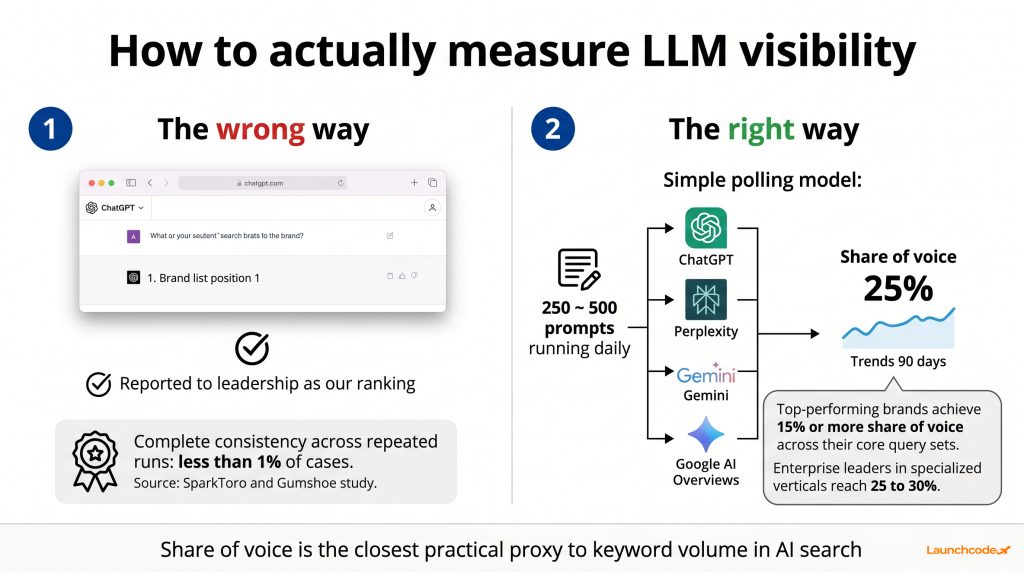

The practical replacement for keyword search volume in AI search is share of voice: the percentage of AI-generated answers that mention your brand across a defined set of category queries. You define a representative prompt set, run it repeatedly across multiple platforms, and record when your brand appears versus competitors. Over time, this gives you a statistically stable visibility score that works like market share in AI search.
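
Following the definition above, share of voice is the count of answers that mention a brand divided by the total number of answers recorded. The sketch below assumes you already have one record per AI answer, listing the brands detected in it. `PromptRun`, the brand names, and the prompts are all hypothetical placeholders.

```python
from __future__ import annotations

from collections import Counter
from dataclasses import dataclass


@dataclass
class PromptRun:
    """One recorded AI answer (hypothetical record layout)."""
    platform: str                # e.g. "ChatGPT", "Perplexity", "Gemini"
    prompt: str                  # a prompt from your defined category set
    brands_mentioned: set[str]   # brands detected in that single answer


def share_of_voice(runs: list[PromptRun], brand: str) -> float:
    """Percentage of recorded answers that mention `brand`."""
    if not runs:
        return 0.0
    hits = sum(1 for run in runs if brand in run.brands_mentioned)
    return 100.0 * hits / len(runs)


def share_of_voice_by_brand(runs: list[PromptRun]) -> dict[str, float]:
    """Share of voice for every brand seen, so you can compare against competitors."""
    if not runs:
        return {}
    counts = Counter(brand for run in runs for brand in run.brands_mentioned)
    return {brand: 100.0 * n / len(runs) for brand, n in counts.items()}


runs = [
    PromptRun("ChatGPT", "best crm tools", {"Acme", "Rival"}),
    PromptRun("ChatGPT", "best crm tools", {"Rival"}),
    PromptRun("Perplexity", "best crm tools", {"Acme"}),
    PromptRun("Gemini", "best crm tools", set()),
]
print(share_of_voice(runs, "Acme"))  # 50.0
```

Because several brands can appear in the same answer, the per-brand percentages can add up to more than 100%.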

This is the most actionable proxy available, and it is the metric the leading tracking tools are built to measure.

Rand Fishkin of SparkToro ran large-scale tests of LLM brand recommendations using automated scripts and found that individual AI responses are highly inconsistent. When the exact same brand recommendation prompt was run repeatedly across ChatGPT, Claude, and Gemini, complete consistency appeared in fewer than 1% of cases. His conclusion, covered in this analysis of AI visibility and GEO strategy, is that measurement must be statistical and done at scale, not based on individual responses.

A screenshot of one ChatGPT response is not your position. It is one data point in a probability distribution. What matters is how often your brand appears across hundreds of repeated runs of the same prompt.
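
One way to see why repeated runs matter is to put error bars on the mention rate. The sketch below uses the Wilson score interval, a standard confidence interval for a proportion. That choice of method is ours and is not something the cited research prescribes.

```python
import math


def wilson_interval(hits: int, runs: int, z: float = 1.96) -> tuple[float, float]:
    """Approximate 95% Wilson score interval for how often a brand appears.

    hits: runs in which the brand appeared; runs: total runs of the prompt.
    """
    if runs <= 0:
        raise ValueError("runs must be positive")
    p = hits / runs
    denom = 1 + z**2 / runs
    center = (p + z**2 / (2 * runs)) / denom
    half_width = z * math.sqrt(p * (1 - p) / runs + z**2 / (4 * runs**2)) / denom
    return max(0.0, center - half_width), min(1.0, center + half_width)


print(wilson_interval(1, 1))     # one screenshot: a very wide range
print(wilson_interval(60, 200))  # 200 runs: a much narrower range
```

A single run in which your brand appears is consistent with a true mention rate anywhere from roughly 21% to 100%. Sixty appearances in 200 runs narrows that to roughly 24% to 37%.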

Leading tracking tools use a methodology borrowed from election forecasting. You define a set of 250 to 500 high-intent queries for your brand or category. These run daily or weekly across target platforms. The tool records when your brand appears as a citation (a linked source) or a mention (a brand reference without a link). Over time, aggregate sampling produces statistically stable estimates of share of voice.
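
Tools differ in how they separate citations from mentions. The sketch below is a crude text-based heuristic. It assumes you have the raw answer text, and it treats a "citation" as a link to the brand's own domain. The function name and the Acme/acme.com example are hypothetical. A real tool may read the platform's own source list instead of scanning text.

```python
import re


def classify_response(text: str, brand: str, brand_domain: str) -> str:
    """Label one AI answer as 'citation', 'mention', or 'absent' for a brand.

    Heuristic assumption: a citation is an http(s) link to the brand's domain
    (or a subdomain); a mention is the brand name appearing without such a link.
    """
    lowered = text.lower()
    domain = re.escape(brand_domain.lower())
    # Host must be the brand domain or a subdomain, followed by "/" or a word boundary.
    link_pattern = rf"https?://([a-z0-9-]+\.)*{domain}(/|\b)"
    if re.search(link_pattern, lowered):
        return "citation"
    if re.search(rf"\b{re.escape(brand.lower())}\b", lowered):
        return "mention"
    return "absent"


print(classify_response("Try Acme (https://www.acme.com/pricing).", "Acme", "acme.com"))
print(classify_response("Acme is a popular option.", "Acme", "acme.com"))
```

Running this over every sampled answer gives you separate citation and mention counts. You can then feed those counts into the share-of-voice calculation.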

Top-performing brands capture 15% or more share of voice across their core AI query sets. Enterprise leaders in specialized verticals reach 25 to 30%, according to this LLM tracking tool guide from Nick Lafferty. If you are just starting, your initial benchmark number matters less than the direction of change month over month.

"The first thing we establish with any new GEO client is a share of voice baseline on their ten most important category prompts. That number is your starting point. Everything else, content, citations, third-party coverage, is measured against it." Tanner Medina, Co-Founder and CGO, Launchcodex

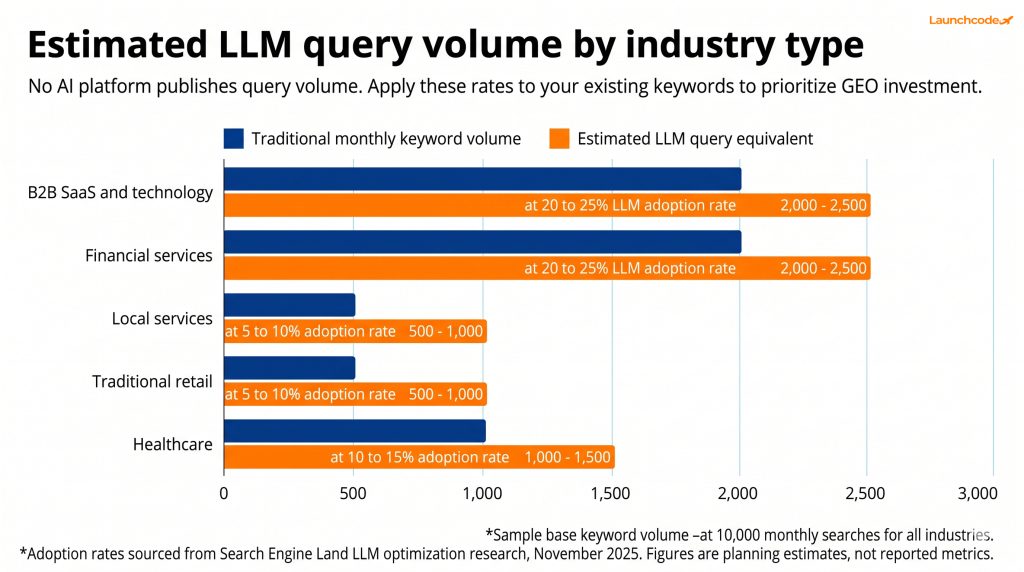

You can estimate relative LLM query volume for any topic using existing organic keyword data. Apply an AI adoption rate to your current keyword volume to get a directional sense of how many queries in your category are likely flowing through AI platforms. This does not produce an exact number. Its value is in showing which topic areas deserve GEO content investment before you commit budget to dedicated tracking tools.

The LLM optimization research from Search Engine Land recommends applying a 20 to 25% adoption rate to keyword volume in high-AI-adoption industries such as SaaS, B2B technology, and financial services. For slower-moving sectors such as local services or traditional retail, start with 5 to 10%.

A practical example: a keyword with 10,000 monthly searches in a B2B SaaS category likely corresponds to 2,000 to 2,500 LLM-based queries in that topic area per month. This is a planning estimate, not a reported metric. It helps teams identify where to focus content investment before spending on software.
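
The adoption-rate estimate is simple multiplication. The sketch below hard-codes the ranges cited above, so treat its output as a planning heuristic, not as measured platform data. The tier names are our own labels.

```python
# Planning heuristic only: these are the adoption-rate ranges cited above,
# not data reported by any AI platform. Tier names are hypothetical labels.
ADOPTION_RATES = {
    "high": (0.20, 0.25),  # e.g. SaaS, B2B technology, financial services
    "low": (0.05, 0.10),   # e.g. local services, traditional retail
}


def estimate_llm_queries(monthly_searches: int, adoption: str) -> tuple[int, int]:
    """Return a (low, high) range of estimated monthly LLM queries for a topic."""
    low_rate, high_rate = ADOPTION_RATES[adoption]
    return round(monthly_searches * low_rate), round(monthly_searches * high_rate)


print(estimate_llm_queries(10_000, "high"))  # (2000, 2500)
```

Run this across your keyword list and sort by the upper bound to get a rough priority order for GEO content.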

Before investing in any tool, run a manual baseline. Pick five to ten priority queries for your brand category, for example "best [your category] tools," "alternatives to [competitor]," or "top platforms for [specific use case]." Ask those prompts across ChatGPT, Perplexity, and Gemini once per month. Record whether your brand appears, in what context, and with what sentiment. Store results in a shared spreadsheet with date, platform, and citation status.

This process takes about two hours per month and produces trend data after three to four cycles. It is not scalable, but it tells you whether AI visibility should be a priority investment before you commit budget to tools.
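
Once a few monthly cycles are logged, turning the spreadsheet into a trend line takes only a few lines of code. The sketch below assumes you export the sheet as CSV with the columns date, platform, query, brand_appears, context, and sentiment. That column layout and the sample rows are hypothetical.

```python
import csv
import io
from collections import defaultdict

# Hypothetical two-month log exported from the shared spreadsheet.
SAMPLE_LOG = """date,platform,query,brand_appears,context,sentiment
2025-01-15,ChatGPT,best crm tools,yes,listed third,neutral
2025-01-15,Perplexity,best crm tools,no,,
2025-02-14,ChatGPT,best crm tools,yes,listed first,positive
2025-02-14,Perplexity,best crm tools,yes,cited as a source,neutral
"""


def monthly_appearance_rate(rows):
    """Fraction of logged checks in each month (YYYY-MM) where the brand appeared."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for row in rows:
        month = row["date"][:7]  # assumes ISO dates such as 2025-01-15
        totals[month] += 1
        if row["brand_appears"].strip().lower() == "yes":
            hits[month] += 1
    return {month: hits[month] / totals[month] for month in sorted(totals)}


def month_over_month_change(rates):
    """Change in appearance rate compared with the previous logged month."""
    months = list(rates)
    return {
        months[i]: rates[months[i]] - rates[months[i - 1]]
        for i in range(1, len(months))
    }


rates = monthly_appearance_rate(csv.DictReader(io.StringIO(SAMPLE_LOG)))
print(rates)                           # {'2025-01': 0.5, '2025-02': 1.0}
print(month_over_month_change(rates))  # {'2025-02': 0.5}
```

With only five to ten prompts, a single check can swing the monthly rate a lot. Read the direction over several months, not any one month's value.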

If you want to understand which of your pages AI platforms are already citing, our guide to Bing Webmaster Tools and AI citation reporting walks through a free method for identifying citation patterns using Bing's own data.

The LLM tracking tool market grew substantially through 2024 and 2025, with over $77 million in collective funding during a single four-month window. Most tools use the polling model: fixed prompt sets run repeatedly across platforms to produce share of voice scores. The right tool depends on team size, budget, and whether you need agency-level multi-client management or a single-brand dashboard.

This category is still maturing. Platform coverage, pricing, and accuracy vary across tools. Verify current features before committing to any subscription.

| Tool | Best for | Platforms covered | Starting price (approx.) |
|---|---|---|---|
| Profound | Enterprise brands | ChatGPT, Claude, Gemini, Perplexity | ~$99/month and up |
| OtterlyAI | Marketing teams | ChatGPT, Gemini, Copilot, Perplexity, AI Overviews | Tiered, free trial |
| Peec AI | Content strategy and gap analysis | ChatGPT, Perplexity, Gemini, Claude | Tiered plans |
| LLMRefs | SEO teams moving into GEO | ChatGPT, AI Overviews, Perplexity, Gemini, Grok | Free tier available |
| Semrush AI Visibility Toolkit | Existing Semrush users | ChatGPT, AI Overviews, Gemini, Perplexity | $99/month add-on |
| SE Ranking AI Search Toolkit | SEO professionals | AI Overviews, AI Mode, ChatGPT, Gemini, Perplexity | Included in plans |
| Mangools AI Search Watcher | Freelancers and agencies | ChatGPT, Gemini | Tiered, affordable |

Multi-platform coverage matters most. A tool that tracks only ChatGPT gives an incomplete picture of AI search visibility. Look for tools that run each prompt multiple times per measurement period rather than once, since single-run results are not statistically reliable.

Profound integrates with GA4 for revenue attribution, which is valuable for executive reporting. For agencies managing multiple clients, LLMRefs and OtterlyAI both support multi-brand project structures. For teams already using Semrush, the AI Visibility Toolkit integrates directly into an existing workflow and monitors over 100 million LLM prompts globally, including more than 90 million US prompts and a ChatGPT database of 29 million prompts.

Yes, with conditions. LLM traffic volume is still small in absolute terms. But the conversion quality is significantly higher than organic search, the growth rate is accelerating, and teams building measurement infrastructure now will be ahead of the market when scale arrives. The question is not whether to track it, but at what investment level, based on your current SEO maturity and industry.

The traffic-to-value ratio for LLM referrals is unusual. ChatGPT referrals convert at 15.9% compared to Google organic at 1.76%, based on an AI SEO statistics roundup from Position Digital drawing on Seer Interactive research. That is roughly a 9x difference. LLM visitors overall convert 4.4 times better than organic visitors, per Semrush data from July 2025.
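
The "roughly 9x" figure is just the ratio of the two cited conversion rates. The helper name below is our own. Keep in mind that the two rates come from aggregated research, not from your own funnel.

```python
def relative_lift(rate_a: float, rate_b: float) -> float:
    """How many times higher conversion rate A is than baseline rate B."""
    if rate_b <= 0:
        raise ValueError("baseline rate must be positive")
    return rate_a / rate_b


# Rates cited above: ChatGPT referrals 15.9%, Google organic 1.76%.
print(f"{relative_lift(15.9, 1.76):.1f}x")  # 9.0x
```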

Alisa Scharf, VP of SEO and AI at Seer Interactive, explains the reason: "This isn't a user who searched a random keyword and accidentally clicked on your site. This is somebody who engaged in a conversation with an AI assistant about your product or services. These are high-value users."

The volume is low. The intent is high. And a user who discovers your brand through an AI conversation, researches it, and then converts often appears in your analytics as a direct visit. The business impact is already larger than most reporting suggests.

Research across 75,000 brands by Ahrefs, detailed in a Virayo analysis of LLM SEO factors, found that branded search volume is the strongest predictor of LLM citation likelihood, with a correlation of 0.334, outweighing traditional backlinks. Separately, 88% of URLs cited by ChatGPT come directly from search results. Strong organic rankings feed AI visibility. LLM optimization is not a replacement for SEO. It is an extension of it.

Kevin Indig, growth advisor and former head of SEO at Shopify and G2, frames the measurement shift clearly in his work with G2 on AI brand visibility: "Most board decks show AI citation volume, but that's not enough. The boards asking the right questions are tracking sentiment drift and message pull-through: is AI saying what we want it to say about us, and is that narrative moving deals forward?"

Teams that invest in brand authority, earn third-party coverage, and rank well organically are already building the signals LLMs use to decide who to cite. Tracking LLM visibility adds a measurement layer on top of work you may already be doing.

LLM search volume is not a metric you pull from a dashboard. It is a behavior you approximate through proxies, and the sooner you build those proxies into your reporting, the more useful the data becomes over time.

Three actions your team can take this week without any new budget:

If you want to go further, our GEO services are built to improve brand visibility across AI search platforms, from content structure to citation strategy to ongoing share of voice tracking. The same structured approach we apply to traditional SEO extends directly to AI search. The metrics are different. The discipline is the same.

LLM search volume refers to the estimated frequency of queries users submit to large language models such as ChatGPT, Perplexity, and Google AI Overviews. No AI platform publishes this data directly. Marketers approximate it through share of voice tracking, prompt polling tools, and keyword adoption rate estimates applied to existing SEO data.

Yes. The manual baseline method costs nothing. Define five to ten category prompts, run them monthly across ChatGPT, Perplexity, and Gemini, and record results in a spreadsheet. You can also set up a GA4 segment using a regex filter to track LLM referral sessions from known platforms. Paid tools add statistical reliability and scale, but the manual approach gives a usable starting point.

Traditional keyword volume is a logged, published count of how often a specific phrase is searched on a search engine. LLM search volume has no direct equivalent because AI platforms do not publish query frequency data. LLM responses are also non-deterministic, meaning the same prompt can return different results on repeated runs, which makes stable pattern recognition much harder than with traditional search.

AI share of voice measures the percentage of AI-generated answers that mention your brand across a defined set of category queries. It is the closest practical proxy to keyword volume in an LLM context. Top-performing brands achieve 15% or more share of voice across their core query sets, with enterprise leaders in specialized verticals reaching 25 to 30%.

For small businesses with limited SEO resources, the manual prompt baseline and GA4 segment provide enough signal without paid tools. Focus first on organic rankings and brand presence. Invest in dedicated LLM tracking tools once organic performance is stable and you have a clear content strategy. The conversion quality of LLM traffic is high, but current volume is small enough that most small businesses can afford to delay paid tooling.

The main options include Profound (enterprise), OtterlyAI (marketing teams), Peec AI (content strategy), LLMRefs (SEO teams transitioning to GEO), Semrush AI Visibility Toolkit (existing Semrush users), SE Ranking AI Search Toolkit, and Mangools AI Search Watcher. Each tracks brand mentions and citations across platforms including ChatGPT, Perplexity, Gemini, and Google AI Overviews using a repeated-prompt polling model.
