Traffic is expensive, and attention is short. If your site does not convert, you pay more for the same pipeline.

This guide explains what conversion rate optimization (CRO) is, how to measure it, and how to run a repeatable program that improves outcomes like purchases, demo bookings, and qualified leads. You will also get a practical workflow, common pitfalls, and a modern tool stack.

Conversion rate optimization (CRO) is the disciplined practice of improving a website or funnel so more users complete a defined action, like buying, requesting a demo, or submitting a lead form. It combines measurement, user research, and controlled changes to increase outcomes without increasing traffic. CRO is not guesswork or random testing. It is a structured operating cadence for growth.

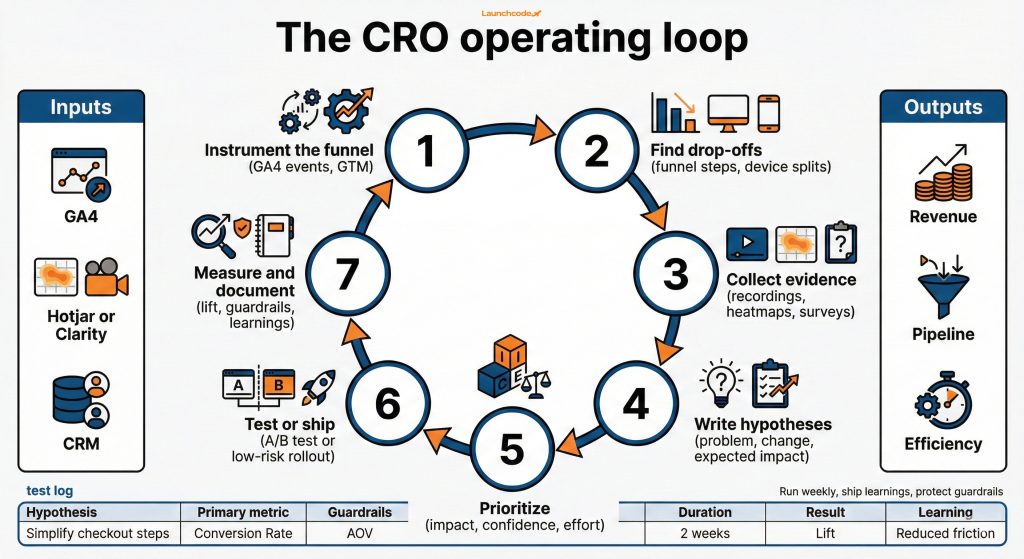

CRO works best when you treat it as an ongoing program, not a one-time redesign. That program should produce two outputs every cycle:

Conversions depend on your business model.

Use one macro conversion that maps to revenue, plus a small set of micro conversions that predict it.

Many teams frame CRO as a customer experience improvement effort, not just testing software. Contentsquare defines CRO as increasing the percentage of users who perform a desired action, and frames the work around analyzing journeys like landing page to checkout and iterating on experience.

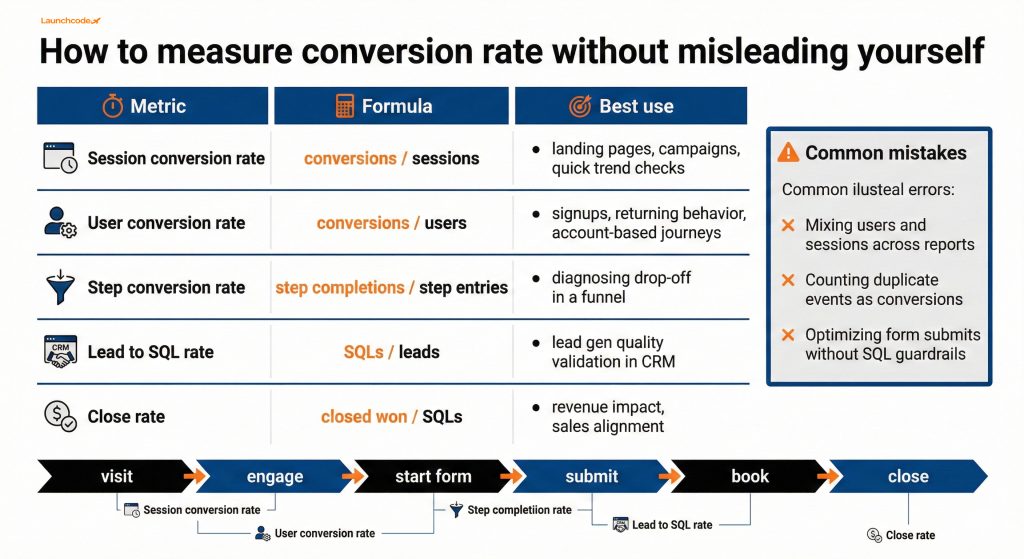

Conversion rate is simple math, but the denominator you choose changes decisions. Track conversion at the level you can control, like a landing page, a funnel step, or a campaign. Then use guardrail metrics to protect lead quality and downstream revenue. Your goal is more qualified outcomes per dollar and per hour, not a higher number on a dashboard.
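To see how the denominator changes the number, here is a minimal sketch. The figures and the `conversion_rate` helper are hypothetical, chosen only to show that the same orders produce different rates per session and per user.

```python
def conversion_rate(conversions: int, denominator: int) -> float:
    """Return conversions / denominator as a fraction (0.0 if the denominator is not positive)."""
    if denominator <= 0:
        return 0.0
    return conversions / denominator


# Hypothetical one-week data for a single landing page.
orders = 120
sessions = 6000   # every visit counts, including repeat visits
users = 4000      # each person counts once

session_cr = conversion_rate(orders, sessions)  # per-session view
user_cr = conversion_rate(orders, users)        # per-user view

print(f"Session conversion rate: {session_cr:.2%}")
print(f"User conversion rate:    {user_cr:.2%}")
```

Neither view is wrong. The point is to pick one denominator, label it on every report, and avoid comparing a per-session rate with a per-user benchmark.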

Start with three layers of measurement:

Use one consistent set, then stick to it.

Pick one as your primary conversion rate metric and keep the others as diagnostic views.

Benchmarks help set expectations, but they are not targets.

For ecommerce, Shopify notes that average conversion rates often sit around 2.5% to 3%, with wide variation by device, channel, and price point. That is why your trend line matters more than a single benchmark.

For market movement, IRP Commerce reports that average ecommerce conversion rates can change year to year and month to month. One example it cites is an 8.57% relative decline, from 2.18% in December 2024 to 1.99% in December 2025. Use this as a reminder to avoid overreacting to short windows.

Use guardrails so you do not “win” the test and lose the business.

Jakob Nielsen makes a useful point in Nielsen Norman Group’s conversion rate guidance: conversion rate is a relative metric. You track it over time and interpret it with context.

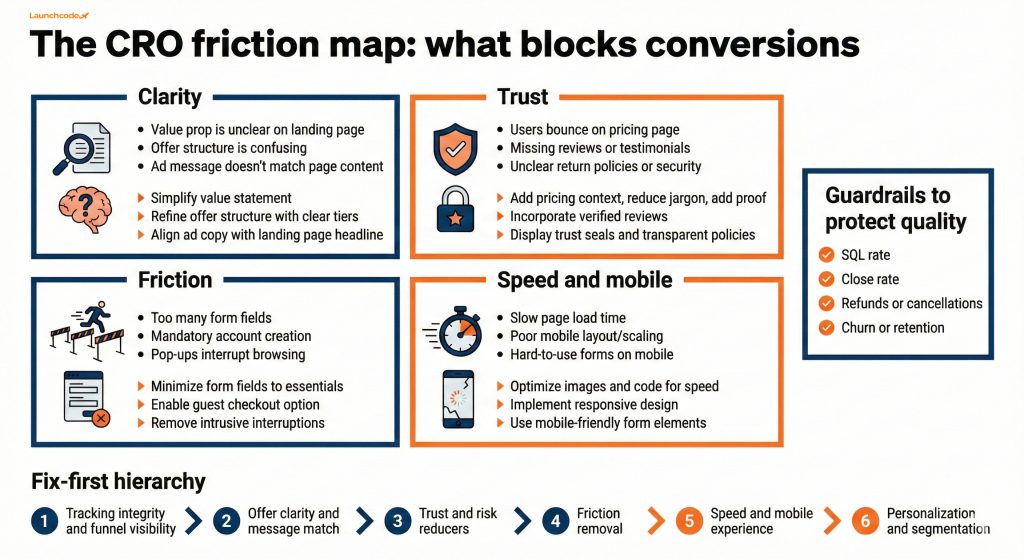

Most conversion lift comes from fundamentals: clarity, trust, friction removal, and speed. Teams often waste cycles chasing novel ideas when users simply do not understand the offer, do not trust the page, or hit unnecessary steps. Start with the highest leverage moments in your funnel, then fix obvious blockers before advanced segmentation or personalization.

If you run ecommerce, cart and checkout are often the highest ROI areas. Baymard Institute’s research summary reports an average documented cart abandonment rate of 70.22%, which shows how much revenue can sit in last-mile friction.

Treat these as a diagnostic menu.

For ecommerce specifically, Baymard’s cart abandonment research compilation lists common abandonment reasons such as extra costs being too high, delivery being too slow, low trust with credit card info, and forced account creation.

Start here when the backlog is huge.

A CRO program works when you run a tight loop: measure, investigate, prioritize, ship, and document learnings. Do not start with test ideas. Start with evidence, then build hypotheses that connect a specific user problem to a specific change and a measurable outcome. This improves your win rate and makes wins repeatable.

Use a lightweight operating cadence that a small team can run.

“Most CRO wins come from boring work: clean tracking, clear offers, and removing steps. If you cannot explain the friction in one sentence, do not test yet.”

Launchcodex Editorial Team, Author

Combine these sources:

Peep Laja argues for starting with conversion research that blends qualitative and quantitative inputs before you test, in Unbounce’s roundup of lessons from conversion experts.

Use a scoring model so you stop debating opinions.

Then rank the backlog and run the tests with the biggest uplift potential first. This aligns with Peep Laja’s point, made in the same Unbounce expert roundup, that traffic is limited and prioritization is critical.
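A scoring model can be as small as the sketch below. It assumes an ICE-style score (the average of impact, confidence, and ease, each rated 1 to 10). The article does not name a specific model, so the fields, the ratings, and the example ideas are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Idea:
    name: str
    impact: int      # expected effect on the primary conversion, 1-10
    confidence: int  # strength of the supporting evidence, 1-10
    ease: int        # how cheap it is to ship, 1-10

    @property
    def score(self) -> float:
        # Simple average; a real program may prefer weights.
        return (self.impact + self.confidence + self.ease) / 3


# Hypothetical backlog entries.
backlog = [
    Idea("Rewrite hero headline", impact=6, confidence=5, ease=9),
    Idea("Add personalization by segment", impact=7, confidence=3, ease=2),
    Idea("Remove forced account creation", impact=8, confidence=7, ease=6),
]

# Highest score first.
ranked = sorted(backlog, key=lambda idea: idea.score, reverse=True)

for idea in ranked:
    print(f"{idea.score:.1f}  {idea.name}")
```

The value is less in the exact formula than in forcing every idea through the same questions before it takes up traffic.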

A/B testing is useful when you can isolate one change, run it cleanly, and measure a real outcome. Many teams get false winners because of small samples, broken tracking, or changing multiple variables at once. Treat experiments like production releases: define success and stop rules, QA tracking, monitor guardrails, and document learnings.
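The small-sample problem can be illustrated with a two-proportion z-test. This is a minimal sketch using only the Python standard library, not the method of any particular testing platform, and the traffic numbers are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Return (z, two-sided p-value) for the difference between two conversion rates."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("No variance: both groups have 0% or 100% conversion.")
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Same observed rates (2.0% vs 2.3%), very different sample sizes.
z_small, p_small = two_proportion_z_test(20, 1_000, 23, 1_000)
z_large, p_large = two_proportion_z_test(2_000, 100_000, 2_300, 100_000)

print(f"1,000 visitors per arm:   z={z_small:.2f}, p={p_small:.3f}")
print(f"100,000 visitors per arm: z={z_large:.2f}, p={p_large:.6f}")
```

With 1,000 visitors per arm the apparent lift is indistinguishable from noise, while the same rates at 100,000 per arm are a clear signal. That is why stop rules and sample targets belong in the test plan before launch.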

Start by picking the right change type:

Choose based on traffic and risk.

| Approach | Who it fits | Key strength | Watch out for |
|---|---|---|---|
| A/B test | Most teams | Clear causality for one change | Needs clean tracking and enough traffic |
| Multivariate test | High-traffic teams | Tests combinations of elements | Requires much more traffic to trust results |
| Ship and measure | Low-traffic B2B | Moves faster on obvious fixes | Harder to attribute without controls |

Run this before you call a test “live.”

For accessibility fundamentals, reference the Web Content Accessibility Guidelines (WCAG) overview for standards that also reduce friction for real users.

The strongest CRO programs connect on-page lifts to downstream outcomes like qualified pipeline, close rate, and retention. If you only measure a form submission rate, you can inflate leads while lowering quality. Build a measurement bridge from analytics to CRM or order data, then evaluate changes by revenue impact and guardrails, not only conversion rate.

Most guides stop at “form submit.” You need a closed-loop view.

“Any test that increases leads but lowers SQL rate is a loss. Your guardrails have to live in the CRM, not just in analytics.”

Derick Do, Chief Product Officer, Reviewer

Scenario:

Net result:

The change failed because you did not protect quality.
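A guardrail check of this kind can be sketched in a few lines. The figures below are hypothetical and are not the scenario's original numbers. The `evaluate_variant` helper and its `tolerance` parameter are assumptions for illustration.

```python
def evaluate_variant(control: dict, variant: dict, tolerance: float = 0.0) -> str:
    """Judge a variant on lead lift, but only if the SQL rate guardrail holds.

    `tolerance` is the drop in SQL rate (as a fraction) you are willing to accept.
    """
    control_sql_rate = control["sqls"] / control["leads"]
    variant_sql_rate = variant["sqls"] / variant["leads"]
    lead_lift = variant["leads"] / control["leads"] - 1

    # Guardrail first: a lead lift cannot rescue a quality drop.
    if variant_sql_rate < control_sql_rate - tolerance:
        return "loss: SQL rate guardrail breached"
    if lead_lift > 0:
        return "win"
    return "no lift"


# Hypothetical CRM counts for equal traffic in each arm.
control = {"leads": 100, "sqls": 30}   # 30% SQL rate
variant = {"leads": 130, "sqls": 26}   # 20% SQL rate, despite 30% more leads

result = evaluate_variant(control, variant)
print(result)
```

Here the variant produces more leads but fewer sales-qualified leads in absolute terms, so a dashboard that stops at form submits would have reported a win.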

If you want a clear event-based analytics foundation, GA4 is a common starting point. If you want to unify analytics with CRM at scale, BigQuery becomes useful for joining events to revenue.
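The join itself is conceptually simple. The sketch below uses plain Python rather than GA4 or BigQuery APIs, and assumes a shared `lead_id` key between analytics events and CRM deals. The field names and records are hypothetical.

```python
from collections import defaultdict

# Hypothetical analytics export: one row per form submit, tagged with the test variant.
form_submits = [
    {"lead_id": "L1", "variant": "control"},
    {"lead_id": "L2", "variant": "control"},
    {"lead_id": "L3", "variant": "treatment"},
    {"lead_id": "L4", "variant": "treatment"},
    {"lead_id": "L5", "variant": "treatment"},
]

# Hypothetical CRM export: closed-won revenue keyed by lead_id.
crm_revenue = {"L1": 5000.0, "L4": 3000.0}


def revenue_by_variant(events: list, revenue: dict) -> dict:
    """Join events to CRM revenue on lead_id and total leads and revenue per variant."""
    summary = defaultdict(lambda: {"leads": 0, "revenue": 0.0})
    for event in events:
        row = summary[event["variant"]]
        row["leads"] += 1
        # Leads with no closed deal contribute zero revenue.
        row["revenue"] += revenue.get(event["lead_id"], 0.0)
    return dict(summary)


summary = revenue_by_variant(form_submits, crm_revenue)
for variant_name, row in summary.items():
    print(variant_name, row)
```

In practice the hard part is capturing a stable identifier at form submit and carrying it into the CRM. Once that key exists, the same join can run in a warehouse at scale.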

Tools do not create conversion lifts. They create visibility and control. The right stack depends on traffic volume, privacy constraints, and how fast your team can ship. A strong baseline is analytics plus session replay plus a test log. Add experimentation platforms when you can support QA, governance, and reliable measurement.

Start simple, then expand.

Use these when you have traffic and a mature process.

Hotjar’s customer story with The Good describes how The Good uses Hotjar early in engagements, after reviewing analytics, to understand the customer journey. This supports the idea that behavior evidence should drive the backlog.

For the business case, Contentsquare’s Digital Experience Benchmark highlights that acquisition costs can rise while conversion rates drop, which makes CRO one of the fastest levers for improving ROI when media gets more expensive.

AI speeds up research synthesis, not judgment. Use AI to summarize session recordings, cluster survey responses, draft hypothesis options, and generate QA checklists. Do not use AI to declare winners, invent reasons for behavior, or replace measurement. AI should reduce cycle time while you keep human review on risk, privacy, and business context.

Use AI in three places:

Start by validating tracking, then pick one high-value funnel and run a 2-week sprint focused on evidence and shipping. You do not need a massive roadmap. You need a tight loop: measure, diagnose, prioritize, ship, learn. If you run this cadence consistently, you will improve conversion and reduce acquisition waste across SEO, paid media, and email.

A practical next step plan:

If you want a structured implementation approach that ties CRO into SEO, paid media, and automation systems, Launchcodex typically runs CRO as part of a full-funnel growth program connected to measurement and delivery, as described on its services page.

A good conversion rate depends on your industry, device mix, traffic quality, and what you count as a conversion. Use benchmarks only for context, then track your own trend over time and focus on downstream quality metrics like SQL rate and close rate.

Start with measurement and research. Run A/B tests after you identify a clear friction point and can instrument outcomes and guardrails reliably. Otherwise you risk false winners and wasted cycles.

UX is the broader discipline of making experiences usable and accessible. CRO uses UX improvements plus measurement and experimentation to increase a defined business outcome like purchases or booked meetings.

Use a research-first approach, ship obvious fixes, and measure before and after with stable windows. Focus on high-impact pages, remove friction, improve clarity, and tie results to CRM outcomes rather than relying on small-sample A/B tests.

At minimum you need analytics, session replay or qualitative feedback, and a test log. Add experimentation platforms like Optimizely or VWO when you have enough traffic and a mature QA and measurement process.
