
Most AI rollouts stall because the tool shows up, but the workflow stays the same. When people do not know what is allowed, what “good use” looks like, or how to avoid mistakes, they either stop using AI or use it quietly.

This guide shows you how to diagnose cultural resistance, build trust with practical guardrails, and run an adoption plan that improves cycle time, quality, and measurable outcomes like speed-to-lead and production efficiency.

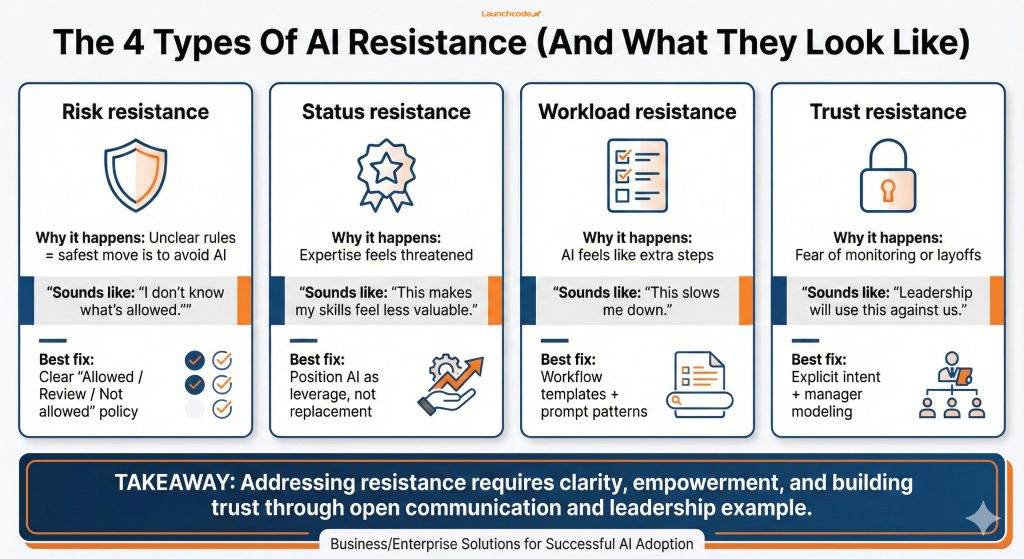

Cultural resistance shows up when AI threatens identity, increases risk, or adds effort without a clear personal win. People worry about looking inexperienced, leaking data, or getting blamed for low-quality output. Others assume AI is a leadership initiative that will be measured against them, not a tool that helps them deliver faster.

Resistance is usually a mix of:

AI adoption is already happening, but enablement and norms lag behind. Gallup reports that 47% of employees who use AI say their organization has not offered training on how to use it in their job. When training is missing and policies are unclear, “do nothing” becomes the safest choice. Read Gallup’s analysis, “Your AI strategy will fail without a culture that supports it.”

Microsoft research points to the same gap at scale. Microsoft’s 2024 Work Trend Index reports that 75% of global knowledge workers use generative AI at work, while only 39% of users say they have received AI training from their company (as summarized in Microsoft’s Work Trend Index announcement).

Treat resistance like a system problem, not a personality problem:

That framing removes blame and makes action possible.

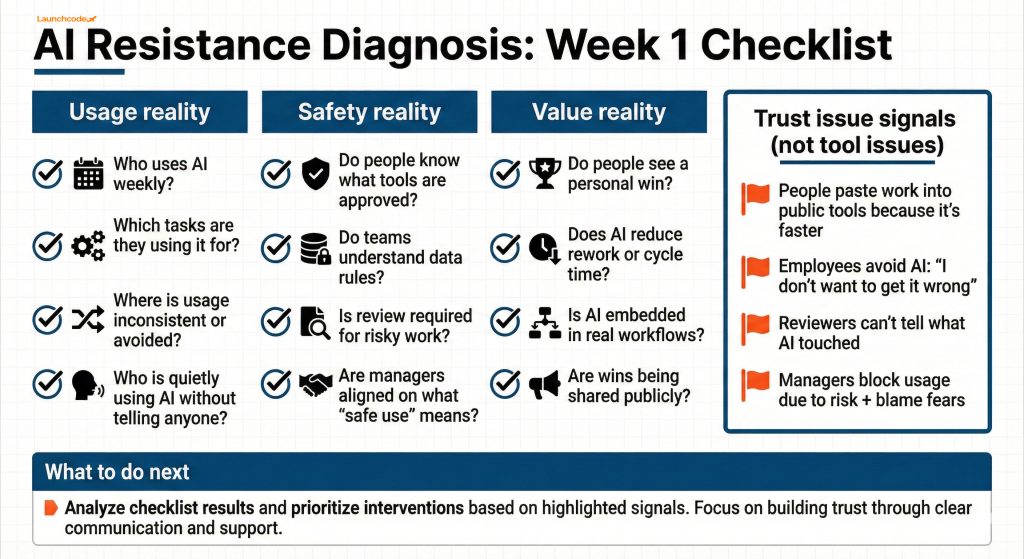

You diagnose resistance by watching behavior, not by listening for complaints. Look for leading indicators like hidden usage, low repeat usage, manager pushback, and quality issues tied to untrained use. Change efforts tend to fail in the “people layer”: Gartner notes that only 32% of leaders globally get employees to adopt changes in a healthy way, so early diagnosis is a real advantage. See Gartner’s article on adopting change in the workplace.

Start with three checks:

Trust friction has patterns:

A global study from KPMG and the University of Melbourne found that 48% of employees have uploaded company information into public AI tools, and 57% admit to using AI in non-transparent ways. That points to fear and governance gaps, not a lack of interest. See the PDF report: Trust, attitudes and use of AI: A global study 2025.

Build a one-page “resistance map” by function:

Use the map to select pilots that feel helpful and safe, without triggering fears in high-risk areas.

Trust grows when AI use feels safe, visible, and supported, with simple guardrails that reduce risk. Psychological safety matters because adoption requires people to try new behaviors in public. Amy Edmondson’s research links psychological safety to learning behavior in teams, which is exactly what AI adoption needs. See Edmondson’s paper: Psychological safety and learning behavior in work teams (PDF).

Here is what trust looks like in practice:

“Culture shifts when you remove fear and replace it with clear permissions and repeatable habits.”

Elijah Moore, Director of People & Culture

Use one consistent message across leadership and managers:

Tie this to outcomes people care about:

Keep guardrails short and concrete:

When policies are unclear, people either avoid AI or use it secretly. Both outcomes increase risk.

Role-based training works when you teach three workflows per role, not “how AI works.” Employees adopt when training matches daily tasks. When training is generic, people test AI once, get inconsistent results, then stop. Microsoft’s Work Trend Index also highlights the enablement gap, which is one reason adoption becomes uneven even in AI-forward teams.

Build role training around real workflows:

Teach three workflows per role end-to-end:

Run training as practice, not lecture:

Tool choice affects adoption because it affects safety. Common enterprise options include:

The goal is not “best model.” The goal is safe access, clear policies, and workflows that reduce mistakes. If people trust the setup, they use AI openly.

If you want a practical framework for evaluating tool fit by workflow and risk, see “The best LLM for business owners: which AI chat should you use?”

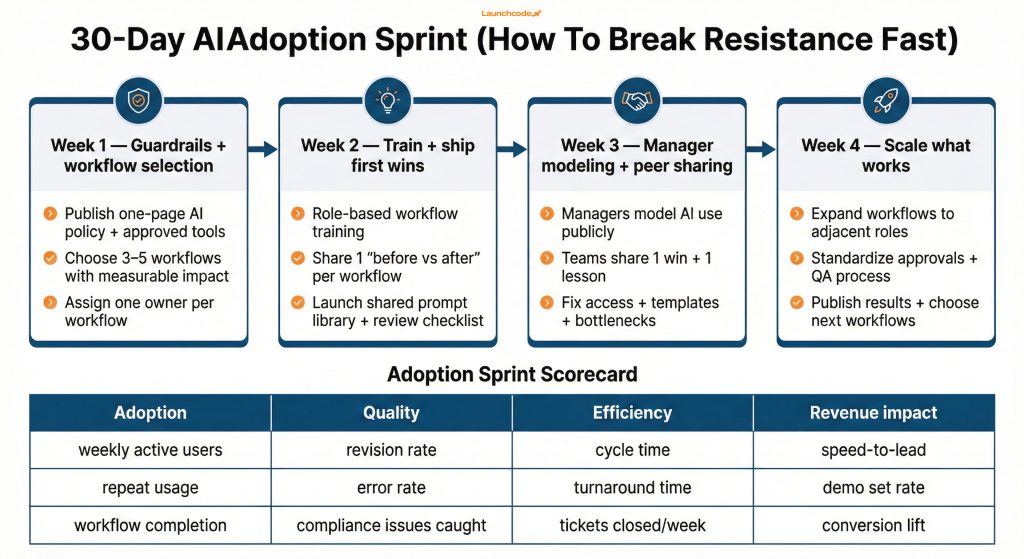

A 30-day adoption sprint works because it creates social proof and measurable value, while keeping risk controlled. You reduce resistance faster by shipping 3 to 5 workflow wins than by running one large “AI transformation” announcement. McKinsey reports that 88% of respondents say their organizations use AI in at least one business function, which means the difference now is not access. The difference is workflow change and operating discipline. See McKinsey’s State of AI.

Track adoption and business impact together:

| Metric type | What to measure | Why it matters |
|---|---|---|
| Adoption | weekly active users, repeat usage rate, workflow completion rate | shows if AI use is becoming normal |
| Quality | revision rate, error rate, compliance issues caught in review | prevents “fast but sloppy” output |
| Efficiency | cycle time, turnaround time, tickets closed per week | shows real workflow value |
| Revenue impact | speed-to-lead, demo set rate, content-to-lead conversion | ties adoption to business outcomes |

This prevents “AI activity” from becoming a vanity metric.
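To keep the quality, efficiency, and revenue rows from drifting apart, it helps to compute them from the same data. Below is a minimal Python sketch, assuming a hypothetical export of completed work items, each labeled either “baseline” (before the sprint) or “sprint.” It reports median cycle time and revision rate side by side, plus speed-to-lead for a single lead. The `WorkItem` fields, the function names, and the sample values are illustrative assumptions, not a prescribed schema.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import median


@dataclass
class WorkItem:
    # Hypothetical record for one finished piece of work (a draft, ticket, or brief).
    period: str          # "baseline" (before the sprint) or "sprint"
    cycle_hours: float   # elapsed hours from start to done
    revised: bool        # True if review sent it back for rework


def summarize(items, period):
    """Return efficiency (median cycle time) and quality (revision rate) together."""
    subset = [item for item in items if item.period == period]
    if not subset:
        return None
    return {
        "median_cycle_hours": median(item.cycle_hours for item in subset),
        "revision_rate": sum(item.revised for item in subset) / len(subset),
    }


def speed_to_lead_minutes(lead_created: datetime, first_response: datetime) -> float:
    """Minutes between a lead arriving and the first reply to it."""
    return (first_response - lead_created).total_seconds() / 60


# Illustrative sample data, not real results.
items = [
    WorkItem("baseline", 10, True),
    WorkItem("baseline", 8, False),
    WorkItem("baseline", 12, True),
    WorkItem("sprint", 6, False),
    WorkItem("sprint", 7, True),
    WorkItem("sprint", 5, False),
]
print(summarize(items, "baseline"))
print(summarize(items, "sprint"))
print(speed_to_lead_minutes(datetime(2025, 3, 3, 9, 0), datetime(2025, 3, 3, 9, 45)))
```

With this sample, median cycle time drops from 10 to 6 hours while the revision rate falls from two in three to one in three. Reporting both numbers together is what catches “fast but sloppy” output: a shorter cycle time paired with a rising revision rate is not a win.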

You stop shadow AI by removing the reasons people hide usage: unclear rules, unsafe tools, and fear of judgment. If you punish behavior without fixing the system, you drive AI usage underground and increase privacy and quality risk.

The KPMG and University of Melbourne study reports that 66% of employees rely on AI output without critically evaluating it, which makes hidden usage especially risky without verification habits and review standards.

“Adoption scales when AI sits inside the workflow, with guardrails and measurement that managers can actually run.”

Derick Do, Co-Founder & Chief Product Officer

Replace bans with controlled use:

You measure culture by tracking leading indicators that show whether AI is safe, normal, and useful. “Number of users” does not tell you if AI is changing work. It only tells you who clicked a button.
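To see why “number of users” can mislead, here is a minimal Python sketch, assuming a hypothetical usage log with one `(user_id, day)` row per AI-assisted workflow run. It counts everyone who used AI at least once, then the smaller group active in two or more distinct ISO weeks. That threshold is one reasonable definition of repeat usage, not a standard one, and the function name `adoption_summary` is illustrative.

```python
from collections import defaultdict
from datetime import date


def adoption_summary(events, min_active_weeks=2):
    """Compare raw headcount with repeat usage.

    events: iterable of (user_id, day) pairs, one per AI-assisted workflow run
    (hypothetical log format). A user counts as a repeat user if they were
    active in at least `min_active_weeks` distinct ISO weeks.
    """
    weeks_by_user = defaultdict(set)
    for user_id, day in events:
        iso = day.isocalendar()
        weeks_by_user[user_id].add((iso[0], iso[1]))  # (ISO year, ISO week number)

    total_users = len(weeks_by_user)
    repeat_users = sum(1 for weeks in weeks_by_user.values() if len(weeks) >= min_active_weeks)
    return {
        "total_users": total_users,
        "repeat_users": repeat_users,
        "repeat_usage_rate": repeat_users / total_users if total_users else 0.0,
    }


# Sample log: three people tried AI, but only "ana" came back in a second week.
sample = [
    ("ana", date(2025, 3, 3)),
    ("ana", date(2025, 3, 10)),
    ("ben", date(2025, 3, 3)),
    ("ben", date(2025, 3, 4)),   # same ISO week as the row above
    ("cai", date(2025, 3, 12)),
]
print(adoption_summary(sample))
```

In the sample, the headcount is three, but only one person returned in a second week, so the repeat usage rate is one third. Tracking that rate week over week shows whether AI is becoming part of the work or just being tried once.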

Use a measurement ladder:

Look for these each week:

If you want a structured way to link AI initiatives to measurable value, see “The ROI of AI in 2026, where leaders capture value and how to start.”

If you want resistance to drop, stop treating AI as a tool rollout and treat it as a workflow change program with trust built in. Start with guardrails, train role-based workflows, run a 30-day sprint, and measure repeat usage and business impact together.

Use this next step checklist:

If you need help operationalizing this in marketing, Launchcodex builds adoption sprints alongside workflow automation so AI becomes part of daily execution. Explore AI-powered marketing automation if your goal is measurable workflow lift, not just tool access.

Cultural resistance is when norms, fear, incentives, and trust issues block AI adoption. It shows up as avoidance, hidden usage, manager pushback, or inconsistent quality, even when tools are available.

Give managers a short playbook with workflows to model, safe language to use, and a clear definition of “good use.” Tie AI to team outcomes like cycle time and quality, then allocate time for coaching and sharing wins.

Shadow AI is when employees use AI tools without disclosure, often in public tools, because they fear punishment or lack approved options. It increases privacy risk and blocks learning because you cannot improve what you cannot see.

Role-based training works best. Teach three workflows per role, provide prompt templates, and require review checklists for outputs that carry brand, legal, or customer risk.

Track repeat usage and workflow-level adoption, then tie it to cycle time, rework, QA outcomes, and business metrics like speed-to-lead and pipeline velocity.
