9 ChatGPT ads creative formats

A format-by-format breakdown of all nine ChatGPT ad formats, from confirmed live placements to emerging types. Includes crea...

Boards and founders are done funding AI experiments that never leave the pilot stage. Surveys show that nearly nine out of ten organizations use AI in at least one function, yet only a minority report clear profit impact or enterprise scale.

This article focuses on where leaders are actually capturing AI ROI in 2026 and how to start in a disciplined way. You will see which functions show returns, why programs fail, how to build an AI ROI scorecard, and how to plan a first-year roadmap that your CFO and front line both support.

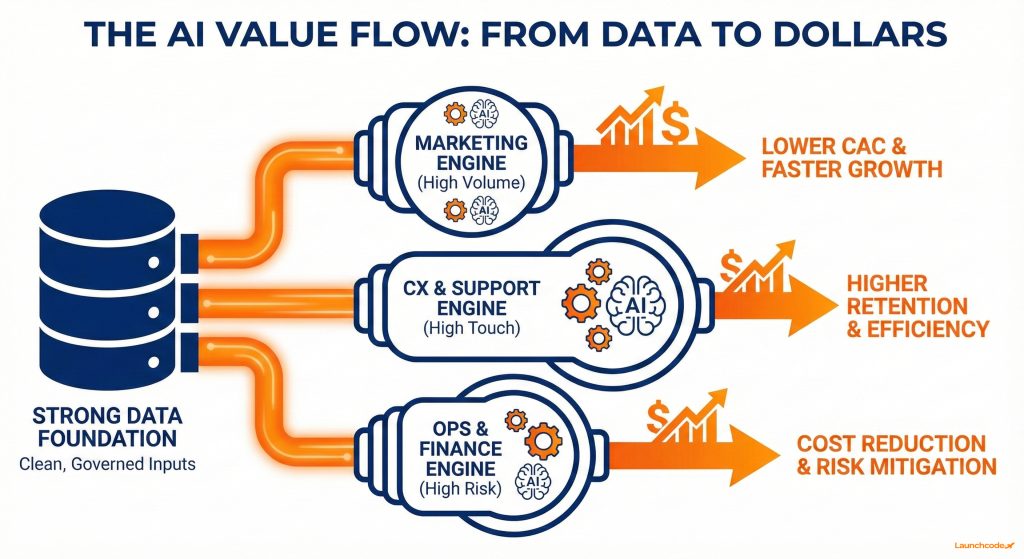

AI is producing the clearest ROI where workflows are high volume and data-rich, including marketing, customer support, sales development, operations, and finance. Leaders who target these zones report returns in the 30 to 40 percent range, with top performers reaching roughly 8 to 1 returns on AI spend.


McKinsey reports that 88 percent of organizations now use AI in at least one function, yet only 39 percent see impact at the profit level. The difference is where they apply it and whether the work connects to measurable outcomes.

Marketing teams see ROI fastest when AI runs inside structured workflows instead of ad hoc prompting. Examples include:

Google Cloud reports that 74 percent of companies using AI see a positive return within the first year, with early generative AI adopters averaging about 41 percent ROI. For marketing leaders, results often show up as lower cost per lead, faster launch cycles, and stronger long tail coverage.

“AI does its best work when it plugs into real demand signals and existing data, not when it sits off to the side.”

Derick Do, Co-Founder and Chief Product Officer

Support teams benefit from AI agents that triage tickets, handle simple issues, and coach human agents with suggestions and knowledge lookups.

Common ROI drivers:

Surveys from Google Cloud highlight support automation as one of the most measurable ROI use cases. Value grows when AI connects to CRM, knowledge bases, and escalation rules instead of operating as a standalone chatbot.

Operations and finance leaders measure ROI through cost and risk outcomes. AI improves demand forecasting, workforce planning, invoice processing, and anomaly detection.

Citizens reports that middle market companies see an average realized ROI of 35 percent from AI and automation. Many CFOs set hurdle rates of 41 percent or more, which pushes teams to scope realistic business cases.
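A hurdle-rate check is easy to make explicit. The sketch below is a minimal illustration, not a finance-grade model: it assumes ROI is defined as net gain divided by total cost, and the dollar amounts are hypothetical placeholders. Only the 35 percent and 41 percent figures come from the paragraph above.

```python
def roi_percent(value_generated: float, total_cost: float) -> float:
    """Net ROI as a percentage, assuming ROI = (value - cost) / cost."""
    if total_cost <= 0:
        raise ValueError("total_cost must be positive")
    return (value_generated - total_cost) * 100 / total_cost


def clears_hurdle(value_generated: float, total_cost: float,
                  hurdle_percent: float) -> bool:
    """True when the projected ROI meets or exceeds the hurdle rate."""
    return roi_percent(value_generated, total_cost) >= hurdle_percent


# Hypothetical business case: 100,000 in total cost, 135,000 in value.
projected = roi_percent(135_000, 100_000)
print(f"Projected ROI: {projected:.0f} percent")
print("Clears a 41 percent hurdle:", clears_hurdle(135_000, 100_000, 41.0))
```

A case that lands at the 35 percent average would not clear a 41 percent hurdle, which is why teams need to count every cost in the denominator before they present the number.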

Example applications for multi-location businesses include staffing plans based on appointment forecasts and automated anomaly checks instead of manual line-by-line reviews.

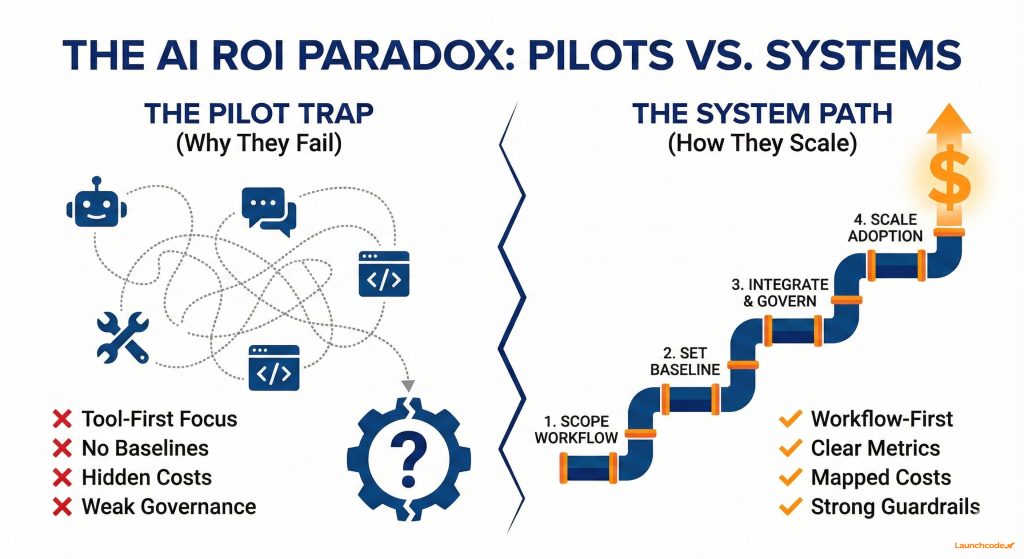

Most AI programs miss targets because they skip scoping, baselines, and scale planning. Leaders chase tools, underestimate integration cost, and ignore adoption and governance. The issue is design, not the underlying models.

Deloitte describes this as the AI ROI paradox. Around 85 percent of organizations increased AI spending in the prior year, and 91 percent plan to increase it again, yet only a minority can reliably measure returns. IBM-related surveys show that only about 25 percent of AI initiatives deliver the expected ROI.

Teams often repeat predictable mistakes:

These patterns explain why organizations report innovation and customer satisfaction gains before seeing full profit impact.

To move beyond pilots, treat AI as part of an operating system. Combine models with:

This approach reduces rework, improves adoption, and creates predictable value.

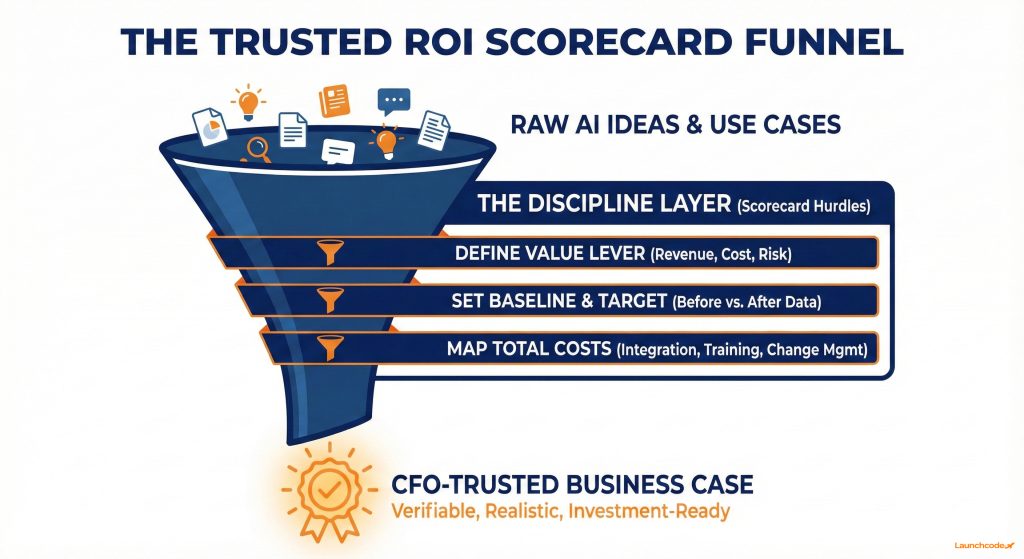

An effective AI ROI scorecard links each use case to one core value lever, a small set of metrics, and a clear payback window. It also exposes hidden costs, which prevents inflated projections and weak business cases.

IDC research supported by Microsoft shows average organizations generate about 3.5 dollars for every 1 dollar invested in AI, while top performers reach roughly 8 to 1. A disciplined scorecard is what separates the two.
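Return ratios and ROI percentages are easy to confuse, so it helps to convert between them. The sketch below assumes a ratio such as 3.5 to 1 means gross value per dollar of cost. The article's sources do not state that definition, so treat it as an assumption and confirm how each study defines its figure before comparing numbers.

```python
def ratio_to_roi_percent(return_ratio: float) -> float:
    """Convert gross value per dollar invested into net ROI percent.

    Assumes return_ratio = value / cost, so net ROI = (ratio - 1) * 100.
    """
    if return_ratio < 0:
        raise ValueError("return_ratio cannot be negative")
    return (return_ratio - 1) * 100


def roi_percent_to_ratio(roi_percent: float) -> float:
    """Convert net ROI percent back into gross value per dollar invested."""
    return 1 + roi_percent / 100


for label, ratio in [("average", 3.5), ("top performer", 8.0)]:
    print(f"{label}: {ratio} to 1 is {ratio_to_roi_percent(ratio):.0f} percent net ROI")
```

Under that assumption, a 35 percent ROI corresponds to about 1.35 dollars of value per dollar spent, so percentage figures and ratio figures from different studies should not be compared directly.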

Create one standard template that every initiative must pass.

| Use case | Value lever | Primary metric | Baseline | Target | Time horizon | Notes |
|---|---|---|---|---|---|---|
| AI-assisted content operations | Cost and revenue | Articles shipped per month | 20 | 40 | 12 months | Maintain or improve organic traffic |
| AI-powered support triage | Cost and CX | Average handle time | 8 minutes | 5 minutes | 9 months | Keep CSAT at or above current level |
| AI-enhanced local SEO and GEO | Revenue | Calls or bookings per month | 300 | 420 | 12 months | Focus on top ten locations |
| AI-driven invoice processing | Cost and risk | Cost per invoice | 5 dollars | 3 dollars | 18 months | Reduce error rate by 50 percent |
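The scorecard rows can also live in code so that progress is computed the same way for every initiative. This is a minimal sketch: the `ScorecardRow` class and its field names are hypothetical, the baselines and targets are the four rows from the template, and the current value in the usage line is made up for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ScorecardRow:
    use_case: str
    metric: str
    baseline: float
    target: float
    horizon_months: int

    def target_change_percent(self) -> float:
        """Planned change from baseline to target, as a percentage."""
        return (self.target - self.baseline) * 100 / self.baseline

    def progress(self, current: float) -> float:
        """Fraction of the baseline-to-target gap closed so far.

        Works for metrics that should rise (bookings) and metrics
        that should fall (handle time, cost per invoice).
        """
        return (current - self.baseline) / (self.target - self.baseline)


SCORECARD = [
    ScorecardRow("Content operations", "Articles shipped per month", 20, 40, 12),
    ScorecardRow("Support triage", "Average handle time (minutes)", 8, 5, 9),
    ScorecardRow("Local SEO and GEO", "Calls or bookings per month", 300, 420, 12),
    ScorecardRow("Invoice processing", "Cost per invoice (dollars)", 5, 3, 18),
]

for row in SCORECARD:
    print(f"{row.use_case}: {row.target_change_percent():+.1f} percent planned")

# Hypothetical reading: handle time is at 6.5 minutes partway through.
print(f"Support triage progress: {SCORECARD[1].progress(6.5):.0%}")
```

Measuring progress as a share of the gap closed keeps cost-reduction and revenue-growth metrics on the same scale, which makes quarterly reviews easier to compare.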

You can connect this scorecard to dashboards in tools such as GA4 and Looker Studio to keep performance visible.

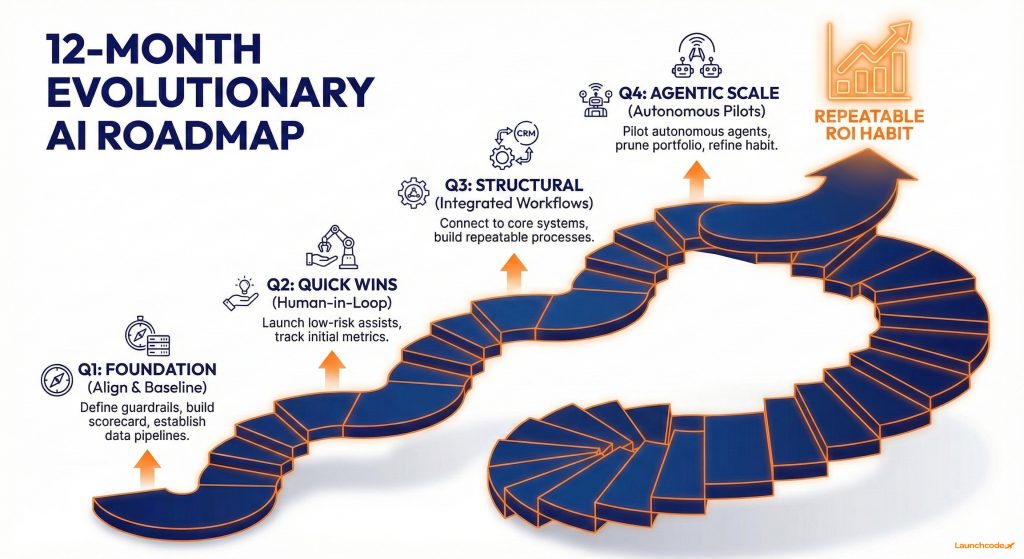

Real ROI in 2026 comes from a portfolio of AI use cases. Mix quick wins, structural bets, and agentic experiments. Review results quarterly, prune what does not work, and scale what does.

Wavestone notes that 70 percent of organizations place AI at the center of strategy, and AI now represents about 13 percent of IT budgets, yet only 46 percent have an ROI framework. A portfolio approach closes that gap.

Organize your portfolio into three buckets.

Aim for a mix where quick wins earn confidence while structural and agent-driven workflows build long-term advantage.

Score each potential use case across four factors:

Shortlist use cases that are high impact, feasible, and manageable from a risk perspective.
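The shortlisting step can be sketched as a simple filter and rank. Everything here is illustrative: the 1 to 5 scale, the thresholds, and the candidate scores are assumptions, and `time_to_value` stands in as a hypothetical fourth factor next to impact, feasibility, and risk.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UseCaseScore:
    name: str
    impact: int         # 1 (low) to 5 (high)
    feasibility: int    # 1 (hard) to 5 (easy)
    risk: int           # 1 (low risk) to 5 (high risk)
    time_to_value: int  # assumed fourth factor: 1 (slow) to 5 (fast)

    def total(self) -> int:
        # Invert risk so that a higher total is always better.
        return self.impact + self.feasibility + (6 - self.risk) + self.time_to_value


def shortlist(cases, min_impact=4, min_feasibility=3, max_risk=3):
    """Keep high-impact, feasible, manageable-risk cases, best total first."""
    eligible = [
        c for c in cases
        if c.impact >= min_impact
        and c.feasibility >= min_feasibility
        and c.risk <= max_risk
    ]
    return sorted(eligible, key=lambda c: c.total(), reverse=True)


# Hypothetical candidates with made-up scores.
candidates = [
    UseCaseScore("Support triage", impact=4, feasibility=4, risk=3, time_to_value=4),
    UseCaseScore("Agentic pricing", impact=5, feasibility=2, risk=4, time_to_value=2),
    UseCaseScore("Content operations", impact=4, feasibility=5, risk=2, time_to_value=5),
]

for case in shortlist(candidates):
    print(case.name, case.total())
```

Hard thresholds run before ranking so that a high-impact idea cannot offset poor feasibility or unmanaged risk with a large total score.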

Sustained AI ROI depends on clean data, clear governance, and AI-fluent teams. Without these foundations, even strong use cases stall or introduce new risk.

Snowflake reports that organizations with mature data practices see an average ROI of 41 percent from generative AI. The difference comes from structure, not only tools.

Focus on a small set of fundamentals.

ROI depends on how teams work, not just what tools you select. Shadow AI grows when employees lack approved workflows.

Practical steps:

A one-year roadmap should combine a short list of use cases, a shared scorecard, and a cadence of build, measure, and refine. The objective is to make AI ROI a habit across functions.

Deloitte notes that structural changes can take two to four years to reach full value. Quick wins still appear inside the first twelve months when work is scoped clearly.

“ROI improves when teams learn from every build cycle and refine both the workflow and the scorecard.”

Tanner Medina, Co-Founder and Chief Growth Officer

Leaders who treat AI ROI as an ongoing discipline build compounding advantage. They keep a live portfolio, refine scorecards, and ground decisions in measurable outcomes.

Budget pressure and regulation will tighten, which makes discipline an advantage. Many organizations talk about AI strategy, yet operate without a working ROI framework.

If you focus on high-volume workflows, build a simple scorecard, manage a portfolio, and follow a 12-month roadmap, you will be ahead of many peers. Strong governance, clean data, and frontline enablement convert AI from experiments into reliable value.

For teams that want structured help across AI automation, SEO, GEO, and measurement, Launchcodex brings tested workflows and reporting practices that make AI ROI repeatable.

For quick win use cases such as AI-assisted content, support agent assist, or email drafting, many organizations see impact in six to twelve months. Larger workflow redesign and agent-driven programs often take one to three years to reach full value.

Research suggests average teams see roughly 3.5 dollars in value for every 1 dollar invested, while leaders reach 8 to 1. A practical target is 30 to 40 percent ROI in year one for well-scoped use cases, with upside as data and governance improve.

Start with workflow enhancements inside tools you already use. Consider agentic AI once processes and data flows are stable and there is a clear multi-step workflow that benefits from automation with approvals.

Use frameworks such as NIST AI RMF during design. Classify use cases by risk, keep human oversight on high-stakes decisions, involve legal and security early, and log activity. Strong risk practices protect ROI by preventing rework, fines, and reputation damage.

Provide approved tools for common workflows, share practical playbooks, and make it easy to request new use cases. Clear policies and effective tools reduce shadow AI over time.
