How to brief performance creative: A template your agency can use

Most creative briefs waste budget. This guide gives you a complete performance creative brief template for paid social and p...

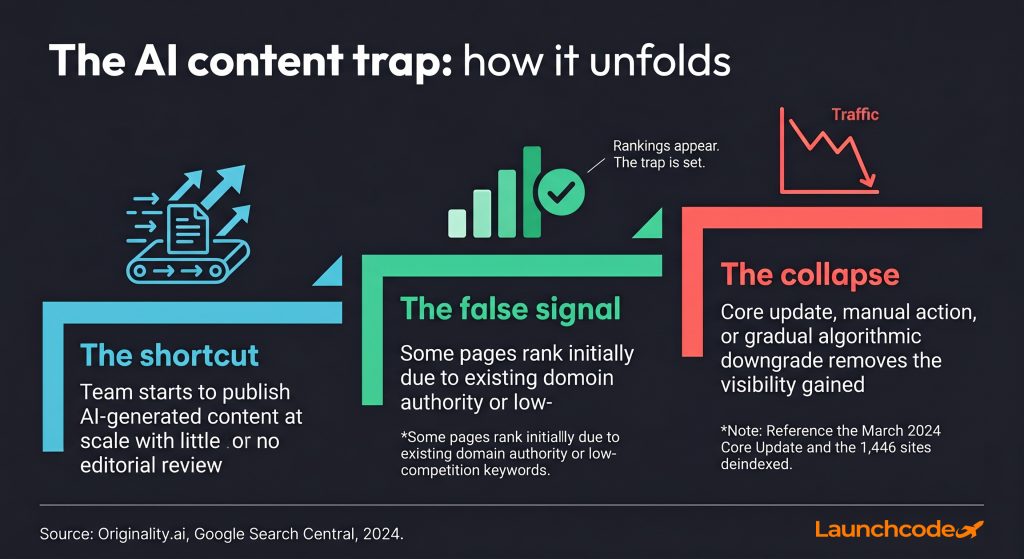

Thousands of businesses published AI content expecting faster results. Many saw early traction, then watched their rankings evaporate. Some were deindexed entirely. The pattern is consistent enough that it now has a name: the AI content trap.

This article explains why it happens, what the data says, and what a better approach looks like. If your content output has gone up but your traffic has stayed flat or fallen, you are likely caught in it.

The AI content trap is the belief that publishing more AI-generated content will compound into more traffic. In practice, it compounds into a weaker domain. AI writes fast and cheaply, which makes it tempting to scale. But Google's systems, and increasingly AI systems themselves, reward genuine expertise and penalize content that exists primarily to occupy search positions. Publishing volume without quality is not neutral. It works against you.


Most teams fall into this trap for a logical reason. AI reduces the time and cost of producing a blog post by a significant margin. When early articles rank or generate impressions, it feels like the strategy is working. The problem surfaces over time, or all at once after a core update.

The trap has three stages:

By stage three, the team has often published dozens or hundreds of pages. Recovering them is harder than building correctly from the start.

Search Engine Land identified this pattern directly: fast drafts do not equal fast publishing. Most teams still have to humanize, fact-check, and restructure AI outputs. That edit burden, combined with the risk of hallucinations and generic phrasing, kills ROI and invites quality penalties after major updates. The teams that skip the editing step are not saving time. They are deferring the cost until a much worse moment.

The economic math matters. The cost of producing content has fallen close to zero with AI. But the cost of being seen has never been higher. More content is competing for fewer clicks in a search environment where AI Overviews now absorb a growing share of informational queries. Publishing AI content into that environment without differentiation adds to the noise.

Google does not automatically penalize content because AI wrote it. Its official policy is clear: using AI to generate content with the primary purpose of manipulating rankings violates spam policies, but not all AI-assisted content is spam. The problem is not the tool. It is the intent and quality of the output. Google's systems, including SpamBrain, now detect patterns of low-value automated publishing at domain level, not just page level.

This distinction matters because many marketers read Google's policy as blanket permission. It is not. The threshold is whether the content genuinely helps users or exists to occupy search positions. Purely AI-generated content fails that test at scale almost every time.

Google updated its quality rater instructions in 2025 to direct raters to give the lowest ratings to pages where most main content is auto-generated or AI-generated with little or no effort, originality, or added value. Rater scores are not a direct ranking signal. They are human judgments that Google uses to evaluate and refine its ranking systems. The direction of travel is clear.

The clearest evidence of real consequences is documented. Google's March 2024 Core Update targeted scaled content abuse with a stated goal of reducing unhelpful content by 40%. At least 1,446 websites received manual actions and were completely deindexed. Analysis of those sites found the vast majority were likely using AI content based on automated detection scores.

The pattern across deindexed sites was consistent: high AI detection scores, no original research or data, generic structure, and aggressive publishing velocity. Sites with 90% or more unedited AI content experienced deindexing within three to six months of launch. In many cases, traffic dropped to zero overnight.

SE Ranking ran a controlled experiment along the same lines. They built 20 new websites populated entirely with AI-generated content. The sites appeared to perform initially. Then all keyword rankings were lost suddenly in February 2025. The early traction was not a sign of success. It was a false signal before the correction.

Google's spam policy, updated in March 2024 and again in March 2025, explicitly names scaled content abuse as a violation. This covers large volumes of AI or automated content created primarily to manipulate search rankings. It applies regardless of whether the content is technically well-written. Volume at the expense of value is the violation.

Three behaviors trigger the highest risk:

E-E-A-T stands for Experience, Expertise, Authoritativeness, and Trustworthiness. Google added the first "E" for Experience in 2022 specifically to weight content from people who have done the thing they are writing about. AI cannot produce first-hand experience. It can produce plausible-sounding text about first-hand experience, which is precisely what Google's quality raters are trained to distinguish.

John Mueller, Google's Search Advocate, has said directly: "You can't sprinkle some experiences on your web pages." Adding an author bio, a credentials block, or a disclosure note does not produce E-E-A-T. It has to come through the content itself, through specific details, documented processes, corrected mistakes, and real client outcomes.

AI content almost never carries those signals because it is trained on general information. It produces the average of what has been written before. A page that describes SEO strategy in accurate but generic terms is not the same as a page that documents what happened when a specific technical change was deployed to a specific site and measured over 90 days.

In high-stakes verticals, the gap is larger. Google's Quality Rater Guidelines identify YMYL topics (Your Money or Your Life) as areas where content inaccuracies could cause real harm. These include finance, health, and legal topics, as well as any content that affects major decisions. In these verticals, the E-E-A-T bar is highest and AI content faces the steepest ranking penalties.

Mueller has framed the YMYL question this way: would a careful person seek out experts or highly trusted sources before acting on this content? If yes, the content needs genuine expert input. AI cannot meet that bar alone.

Pages with strong E-E-A-T signals had a 30% higher chance of ranking in the top three positions compared to weak-signal pages, based on Semrush's 2024 study. After the December 2025 Core Update, generic content farms dropped sharply while sites demonstrating real experience and expertise saw 23% gains. That divergence is the trend, not an outlier.

Strong E-E-A-T is demonstrated, not claimed. Concrete indicators include:

Generic AI content hits none of those signals consistently. A human-led workflow where a subject matter expert reviews, adds context, and contributes first-hand perspective hits most of them.

"The moment we stopped asking 'did AI write this?' and started asking 'does this reflect what we actually do for clients?' our content started performing differently. Google rewards specificity. Specificity comes from people, not prompts."

Tanner Medina, Co-Founder and Chief Growth Officer, Launchcodex

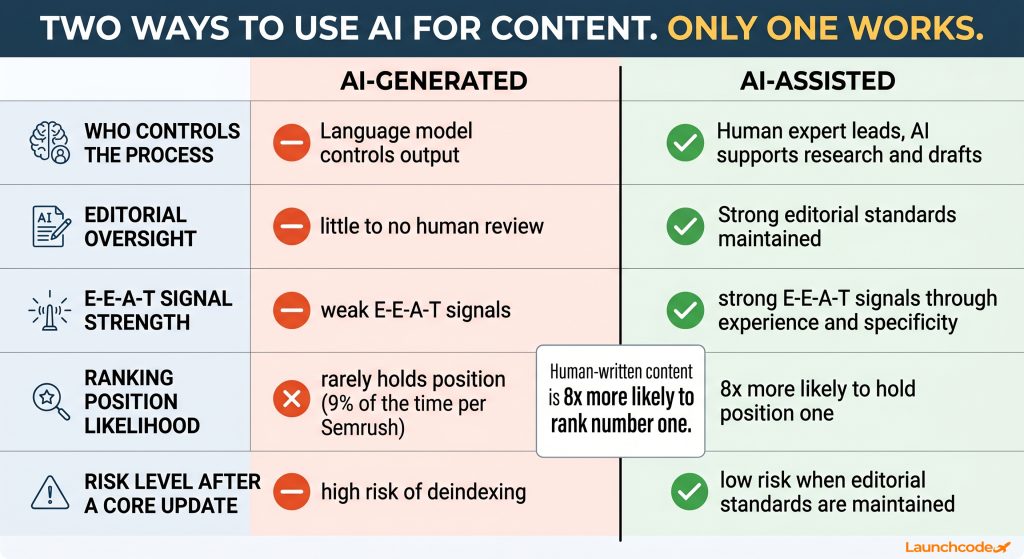

The ranking data from controlled studies is clear. Human-written content is 8 times more likely than purely AI-generated content to hold the number one position on Google. In a Semrush analysis of 42,000 blog pages from November 2025, content classified as purely AI-generated appeared in the top spot just 9% of the time. Human-written content held position one 80% of the time. The gap narrows at lower positions, but the premium for human authorship at the top is decisive.

This finding matters for one specific reason. Most teams measure AI content performance by whether pages land on page one. That threshold hides the problem. AI content does reach page one at a reasonable rate. The separation happens at position one versus position three versus position eight. At the exact positions where click-through rates are highest, human content dominates.

A site optimizing for top-three positions needs human-led content. A site filling lower positions with AI content is building a floor, not a ceiling.

AI content often ranks at launch because the parent domain has existing authority. That authority acts as a temporary buffer. Over time, as the domain accumulates more AI-generated pages with weak engagement signals and low dwell time, the authority buffer erodes. The domain becomes associated with low-value content across topical clusters, not just on individual pages.

By November 2024, AI-generated articles had surpassed human-written articles as a share of all new web content published, reaching 50.3% of new articles according to Graphite's Common Crawl analysis. Before ChatGPT launched in November 2022, that share was just 5%. Despite that volume, Graphite confirmed that AI-generated pages largely do not appear in Google search results. The content is being produced. It is not being seen.

Publishing AI content is already risky. Publishing it into a declining click environment compounds the problem. Organic CTR for queries where an AI Overview is present dropped 61% year over year, from 1.76% down to 0.61%, based on Seer Interactive's 15-month study tracking 25.1 million impressions across 42 organizations. Even queries without AI Overviews saw CTR drop 41% over the same period.
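To make the size of that decline concrete, here is a minimal Python sketch that works out the relative change from the two CTR endpoints quoted above. The `relative_change` helper and the 100,000-impression figure are illustrative assumptions, not part of Seer Interactive's methodology. Note that the two endpoints on their own imply a drop of roughly 65%, slightly steeper than the 61% headline figure, which presumably reflects rounding or a different comparison window in the study.

```python
def relative_change(before: float, after: float) -> float:
    """Return the relative change from `before` to `after` as a fraction."""
    if before == 0:
        raise ValueError("before must be non-zero")
    return (after - before) / before


# CTR endpoints quoted in the text for queries with an AI Overview (percent values).
ctr_before = 1.76
ctr_after = 0.61

drop = relative_change(ctr_before, ctr_after)
print(f"Relative CTR change: {drop:.1%}")

# Hypothetical illustration: clicks earned per 100,000 impressions at each CTR.
impressions = 100_000
clicks_before = impressions * ctr_before / 100
clicks_after = impressions * ctr_after / 100
print(f"Clicks per {impressions:,} impressions: {clicks_before:.0f} -> {clicks_after:.0f}")
```

At the same impression volume, the page that used to earn about 1,760 clicks now earns about 610. That is the environment undifferentiated informational content is being published into.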

Low-quality AI content competing for informational queries in a zero-click environment is a losing position. The traffic opportunity for undifferentiated informational content is shrinking. The requirement to stand out is rising. For a broader look at how the March 2026 core update is accelerating this shift, see our analysis of what changed and how to adapt.

AI-generated content is output that comes primarily from a language model, with a human doing light editing or none. AI-assisted content uses AI for research, outlining, drafting, and optimization, with a human expert owning the final structure, insight, and quality. That distinction is the difference between the trap and the solution. The same tools produce very different results depending on who controls the process.

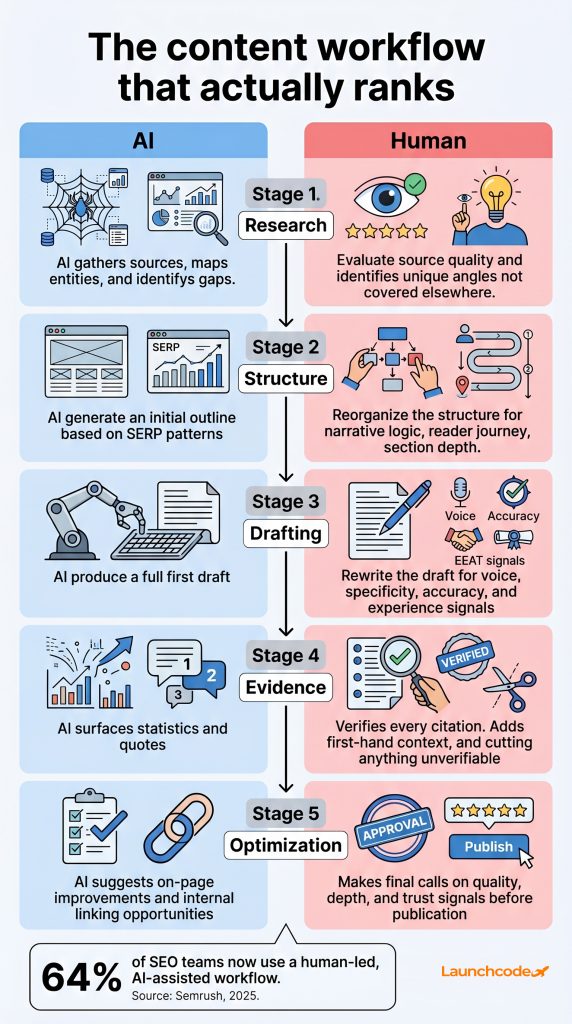

Most professional SEO teams have already arrived at this conclusion. Semrush's 2025 survey of 224 SEO professionals found that 64% use a human-led, AI-assisted workflow. Only 23% create content without AI entirely. And 87% of teams report that humans remain directly involved in production and editing. The industry standard is human in the lead role, AI as a production tool.

The human-led, AI-assisted model works best when roles are clearly separated:

| Role | AI handles | Human handles |
|---|---|---|
| Research | Source gathering, entity identification, gap analysis | Evaluating source quality, identifying unique angles |
| Structure | Initial outline based on SERP patterns | Reorganizing for narrative logic and reader journey |
| Drafting | First draft with full sections | Rewriting for voice, specificity, and accuracy |
| Evidence | Surfacing statistics and quotes | Verifying citations and adding first-hand context |
| Optimization | On-page suggestions, internal linking | Final judgment on quality, depth, and trust signals |

This model produces content that ranks because it carries the speed advantages of AI on research and structure while preserving the experience signals that Google rewards.

Risk tracks editorial oversight more closely than raw AI percentage. Based on observed data from the March 2024 update, sites that kept AI-authored content below roughly 30% of total output and applied consistent editorial review saw the fewest penalties. Sites with 80% or more unedited AI content faced the highest deindexing rates.
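As a rough way to apply those two reference points to your own site, the sketch below sorts a domain into a risk band. The function name, band labels, and the "unclear" middle band are assumptions for illustration. The source data only describes the two ends of the range (below roughly 30% with editorial review, and 80% or more unedited), so treat this as a conversation starter, not a scoring model Google uses.

```python
# Reference points taken from the observed data described above.
LOW_SHARE = 0.30   # below roughly 30% AI-authored output
HIGH_SHARE = 0.80  # 80% or more unedited AI output


def risk_band(ai_share: float, has_editorial_review: bool) -> str:
    """Classify a site by AI share of output and editorial oversight.

    `ai_share` is the fraction of published pages that are AI-authored (0.0 to 1.0).
    Band names are hypothetical labels, not an industry standard.
    """
    if not 0.0 <= ai_share <= 1.0:
        raise ValueError("ai_share must be between 0.0 and 1.0")
    if ai_share >= HIGH_SHARE and not has_editorial_review:
        return "high"
    if ai_share < LOW_SHARE and has_editorial_review:
        return "lower"
    # Everything in between is not described by the source data.
    return "unclear"


# Example: 45 AI-authored pages out of 60, published with no review step.
print(risk_band(45 / 60, has_editorial_review=False))
```

Oversight is a separate input from percentage here on purpose: a low AI share published with no review does not land in the lower band.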

The specific risks that accumulate with unedited AI output are:

"AI is the fastest way to build scaffolding. But scaffolding is not the building. The teams that publish the scaffold and call it done are the ones we see rebuilding from scratch six months later."

Derick Do, Co-Founder and Chief Product Officer, Launchcodex

The same quality signals that protect rankings in traditional search also determine whether AI Overviews and systems like ChatGPT and Perplexity cite your content. Being cited in Google's AI Overviews produces a 35% CTR lift compared to uncited brands, based on Seer Interactive's data. Avoiding the AI content trap is only half the goal. The other half is building content that earns citations from the AI systems now absorbing clicks.

Generative engine optimization (GEO) is the practice of structuring content so it is selected as a source by AI-powered answer systems. The requirements for GEO overlap almost exactly with the requirements for strong E-E-A-T: clarity, authority, original insight, and verifiable claims.

AI systems extract value from content structured for synthesis. Several content characteristics increase citation likelihood:

Domains with strong referring domain profiles and established topical authority are consistently favored for AI Overview citations. SE Ranking's data found that sites with over 32,000 referring domains are 3.5 times more likely to be cited by ChatGPT than sites with minimal link profiles. The authority signals that matter for traditional SEO are the same ones that determine AI citation rates.

A domain that builds content with genuine expertise, earns backlinks from credible sources, gets cited in AI Overviews, and maintains strong engagement metrics becomes harder to displace over time. That compounding dynamic does not exist for sites built on AI content volume. The traffic gains from AI publishing are borrowed against future algorithm corrections, not built on durable signals.

At Launchcodex, our SEO and GEO engagements start from the same foundation: content that serves a specific reader, cites specific data, and is reviewed by practitioners with direct experience in the subject. That is the approach the data supports.

If your site has published significant AI content, the path forward is an audit before more publishing. The goal of the audit is to identify which pages are worth recovering with editorial investment, which need to be redirected, and which should be removed. Publishing more AI content on top of existing AI content does not dilute the problem. It deepens it.
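The three audit outcomes can be sketched as a simple triage function. This is a minimal illustration under stated assumptions: the `Page` fields (`duplicates_url`, `has_viable_audience`) and the order of the checks are hypothetical, and in practice both inputs come from human review of your analytics and content, not from an automated score.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Action(Enum):
    RECOVER = "recover"    # invest expert editorial time
    REDIRECT = "redirect"  # fold into a stronger page
    REMOVE = "remove"      # no audience worth serving


@dataclass
class Page:
    url: str
    duplicates_url: Optional[str]  # stronger page covering the same topic, if any
    has_viable_audience: bool      # reviewer's judgment that real demand exists


def triage(page: Page) -> Action:
    """Assign one audit outcome to a flagged page (assumed rule order)."""
    if page.duplicates_url is not None:
        return Action.REDIRECT
    if not page.has_viable_audience:
        return Action.REMOVE
    return Action.RECOVER


# Hypothetical flagged pages from an audit.
flagged = [
    Page("https://example.com/seo-basics-2", "https://example.com/seo-basics", True),
    Page("https://example.com/misc-ai-post", None, False),
    Page("https://example.com/technical-audit-guide", None, True),
]

for page in flagged:
    print(f"{triage(page).value:9} {page.url}")
```

Checking for duplicates first is a design choice: a redirect preserves whatever link equity the page has, so it is preferred over removal when a stronger target exists.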

A structured recovery approach works in three phases.

If pages are failing to appear in search entirely, the problem may extend beyond content quality to indexing. Our guide to common Google indexing issues covers how to diagnose and fix them.

Categorize each flagged page into one of three buckets:

After triage, do not return to the same workflow that created the problem. Establish clear production standards:

Publishing AI content without a human in the loop is not a content strategy. It is a liability that compounds quietly until a core update makes it visible all at once. The data is consistent: purely AI-generated content rarely holds top positions, sites that rely on it heavily face deindexing, and the same search systems that penalize AI farms actively reward content that demonstrates real expertise.

The practical shift comes down to five decisions:

AI belongs in your content workflow. It does not belong in the driver's seat. Research, outlining, and first drafts are legitimate uses. Final judgment, expert perspective, and quality accountability belong to a person.

No. Google's official stance is that AI-generated content is not penalized just because AI produced it. What triggers penalties is content that exists primarily to manipulate rankings rather than help users. The problem with most AI content is not its origin. It is that it lacks the specificity, experience signals, and original value that Google rewards.

At least 1,446 sites received manual actions and were completely deindexed. Analysis found the majority were relying heavily on AI-generated content. Google's SpamBrain system was updated to detect patterns of scaled automated publishing at domain level, not just on individual pages.

AI-generated content is primarily produced by a language model with minimal human editing. AI-assisted content uses AI for research, outlining, and first drafts, with a human expert owning structure, voice, insight, and quality review. The second approach produces content that can carry genuine E-E-A-T signals. The first generally cannot.

E-E-A-T stands for Experience, Expertise, Authoritativeness, and Trustworthiness. Google added Experience to the framework in 2022 to weight content from people with first-hand knowledge of the subject. AI cannot produce real first-hand experience. It can produce text that sounds like it comes from experience, and quality raters are trained to tell that apart from the real thing.

GEO, or generative engine optimization, is the practice of optimizing content to be cited by AI-powered search systems like Google's AI Overviews, ChatGPT, and Perplexity. The content qualities that earn citations, including authority, original data, clear structure, and credible sourcing, are the same qualities that protect against AI content penalties. Solving the AI content trap and building for GEO are the same objective. For a deeper comparison of how SEO, GEO, AEO, and AIO differ, see our full breakdown.

Start with an audit. Identify which pages are losing impressions or clicks. Run AI detection to find your highest-risk pages. Then triage: recover high-potential pages with expert editorial investment, redirect duplicates, and remove content with no viable audience. Do not publish more AI content before establishing a new workflow with human review at every stage.
