9 ChatGPT ads creative formats

A format-by-format breakdown of all nine ChatGPT ad formats, from confirmed live placements to emerging types. Includes crea...

GEO services are easy to pitch and hard to verify. Many agencies promise AI visibility but cannot explain how they measure citations or what they will change on your site.

This guide gives you a practical scorecard, proof standards, and the exact questions to ask so you can choose a GEO partner that can measure results and ship work that moves revenue.

A strong GEO agency runs controlled visibility tests across AI surfaces, improves your entity and content coverage, and ships technical changes that make your site easier to cite. A weak GEO agency sells “AI optimization” but cannot define success, show prior evidence, or explain what it will implement on your site. Treat this as a vendor validation exercise with clear pass/fail criteria.


GEO focuses on inclusion in AI-generated answers such as Google AI Overviews, ChatGPT responses, and Perplexity citations. Your progress shows up as measurable mentions and citations tied to specific queries.

Your success often appears as:

If you need a shared definition, use the academic framing from the paper that introduced Generative Engine Optimization and reported measurable visibility gains in controlled tests, up to 40 percent in their experiments (arXiv GEO paper).

Avoid agencies that define GEO in these ways:

Platforms change frequently. Query results vary by location, device, and prompt wording. A credible agency will say that clearly.

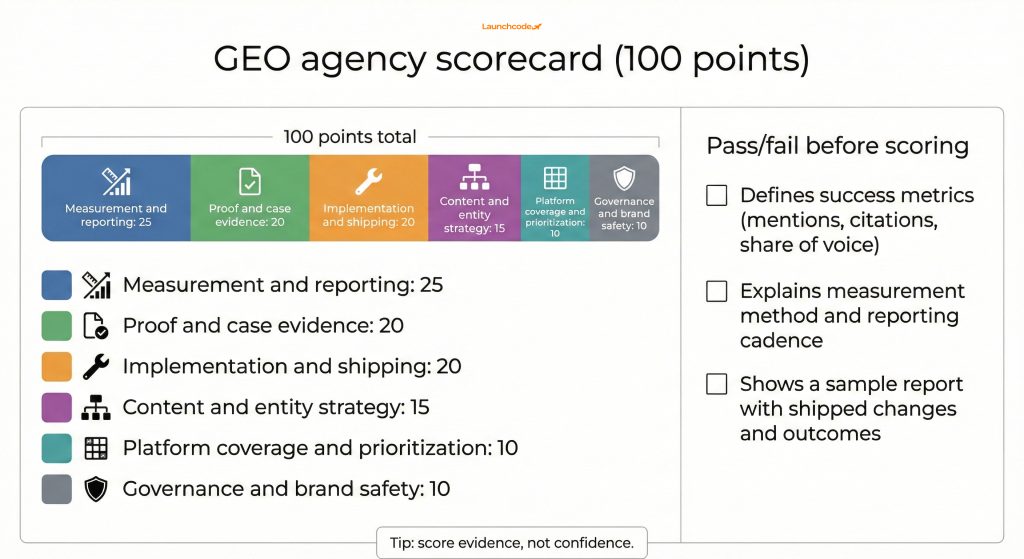

The most reliable way to compare GEO agencies is a weighted scorecard that forces specific evidence. Weight measurement and proof higher than claims. Weight shipping capability higher than strategy decks. A scorecard also helps your CFO or leadership team understand why you selected a vendor.

Use a 100-point model so you can rank agencies quickly.

| Category | Weight | What good looks like | What to watch out for |
|---|---|---|---|
| Measurement and reporting | 25 | Fixed query sets, citation tracking, monthly share of voice, clear definitions | “We will report AI visibility” with no method |
| Proof and case evidence | 20 | Before and after examples tied to a query set, dates, screenshots, change log | Case studies with no evidence or only rankings |
| Implementation and shipping | 20 | Technical plus content plus data capability, clear backlog, weekly ship cadence | Strategy only or advisory deliverables |
| Content and entity strategy | 15 | Entity map, topic coverage plan, page brief system, QA process | Generic content production packages |
| Platform coverage and prioritization | 10 | Clear focus on the surfaces that matter for your category | “We do everything” with no priorities |
| Governance and brand safety | 10 | Accuracy controls, review workflow, escalation process | No process for brand misrepresentation |
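As a rough illustration of how the weights in the table combine into a single number, here is a minimal Python sketch. The category weights come from the table above. The 0 to 5 rating scale, the function names, and the dictionary keys are assumptions made for this example, not part of any standard.

```python
# Category weights copied from the scorecard table; they sum to 100.
WEIGHTS = {
    "measurement_and_reporting": 25,
    "proof_and_case_evidence": 20,
    "implementation_and_shipping": 20,
    "content_and_entity_strategy": 15,
    "platform_coverage_and_prioritization": 10,
    "governance_and_brand_safety": 10,
}


def weighted_score(ratings: dict[str, float]) -> float:
    """Convert per-category ratings into a 0-100 weighted total.

    Assumption: each category is rated from 0 (no evidence) to 5 (strong evidence).
    """
    missing = set(WEIGHTS) - set(ratings)
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    total = 0.0
    for category, weight in WEIGHTS.items():
        rating = ratings[category]
        if not 0 <= rating <= 5:
            raise ValueError(f"{category} rating must be between 0 and 5")
        # A rating of 5 earns the full weight; lower ratings earn a proportional share.
        total += weight * rating / 5
    return total


def rank_agencies(all_ratings: dict[str, dict[str, float]]) -> list[tuple[str, float]]:
    """Return (agency, score) pairs sorted from highest to lowest score."""
    scored = [(name, weighted_score(ratings)) for name, ratings in all_ratings.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)
```

Because measurement and proof together carry 45 of the 100 points, an agency with polished strategy but weak evidence cannot outrank one that can show its method.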

Fail the agency early if they cannot do these three things:

“Most failed GEO engagements trace back to weak measurement definitions. If you cannot define the metric, you cannot manage the outcome.”

Tanner Medina, Co-Founder and Chief Growth Officer

If they pass these checks, then score them.

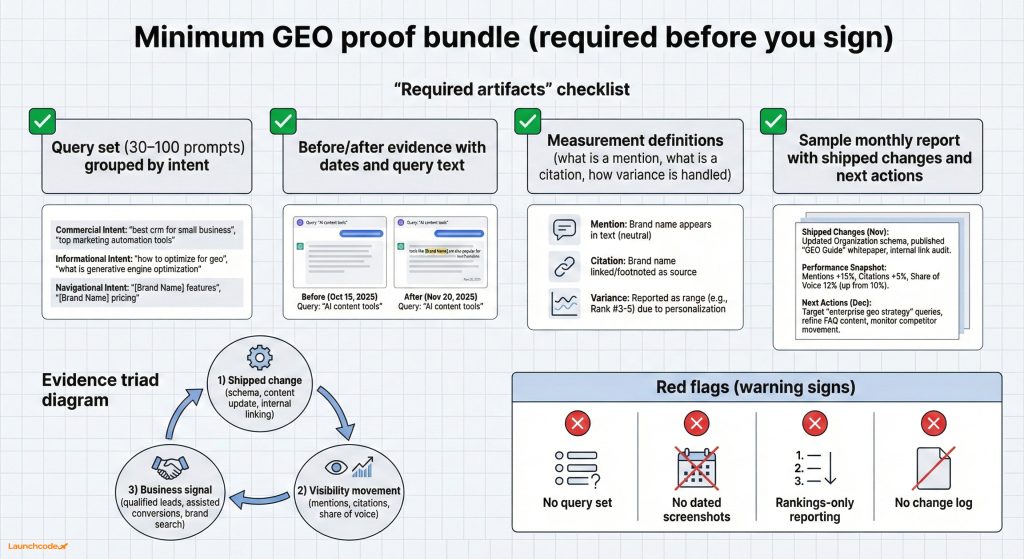

You should not select a GEO agency without a minimum proof bundle. The bundle proves they can measure, execute, and explain results. Without it, you are buying a narrative. A proof bundle also reduces risk because you can audit their work if performance stalls.

Request these items during selection, not after onboarding.

Evidence should connect three elements:

This connection matters because top rankings do not always translate to citations. Ahrefs analyzed AI Overviews and found that even pages ranking first appear among the top cited links only about half the time in their sample (Ahrefs citation research).

GEO success is difficult to evaluate if reporting is weak. Choose an agency that measures how AI answers change over time using a controlled query set and consistent sampling. Reporting should also connect visibility to funnel metrics because AI answers often reduce clicks while awareness rises.
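To make "controlled query set and consistent sampling" concrete, the sketch below computes a mention rate and a citation rate from captured AI answers and refuses to compare two periods unless the query set is identical. The `Capture` structure, its field names, and the rate definitions are assumptions for illustration. A real program would use whatever definitions the agency documents.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Capture:
    """One sampled AI answer for one query on one surface (hypothetical structure)."""
    query: str
    surface: str                       # e.g. "ai_overviews", "chatgpt", "perplexity"
    captured_on: str                   # ISO date of the capture
    brands_mentioned: frozenset[str] = frozenset()
    domains_cited: frozenset[str] = frozenset()


def visibility_rates(captures: list[Capture], brand: str, domain: str) -> tuple[float, float]:
    """Return (mention_rate, citation_rate) as fractions of all captures."""
    if not captures:
        raise ValueError("no captures to evaluate")
    mentions = sum(brand in c.brands_mentioned for c in captures)
    citations = sum(domain in c.domains_cited for c in captures)
    return mentions / len(captures), citations / len(captures)


def compare_periods(baseline: list[Capture], followup: list[Capture],
                    brand: str, domain: str) -> dict[str, float]:
    """Compare two capture periods, but only if they sampled the same query set."""
    base_cells = {(c.query, c.surface) for c in baseline}
    follow_cells = {(c.query, c.surface) for c in followup}
    if base_cells != follow_cells:
        raise ValueError("query set changed between periods; results are not comparable")
    base_mention, base_citation = visibility_rates(baseline, brand, domain)
    new_mention, new_citation = visibility_rates(followup, brand, domain)
    return {
        "mention_rate_change": new_mention - base_mention,
        "citation_rate_change": new_citation - base_citation,
    }
```

The guard in `compare_periods` is the important design choice: if the query set shifts between reports, a rising rate may reflect easier queries rather than better visibility.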

At minimum, expect:

When AI summaries appear, users click less often. Pew Research analyzed tens of thousands of searches and documented reduced click behavior when AI summaries appear on results pages (Pew Research AI summaries and clicks).

Your agency should reflect this reality. If they only promise more organic traffic without AI visibility metrics, they are not running a full GEO program.

Ask which tools they use for each layer:

The goal is not the tool brand. The goal is whether they can explain how each tool supports measurement and execution.

Many GEO engagements fail because the agency cannot execute across content, development, and data. GEO requires implementation, not advice. Choose agencies that can produce a prioritized backlog, ship weekly improvements, and document changes so results are attributable.
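One way to keep results attributable is a dated change log that can be queried against capture dates. The following is a minimal sketch under that assumption. The `Change` record and `changes_between` helper are hypothetical names, and a real log would likely live in a ticketing or version control system.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Change:
    """One shipped change (hypothetical record format)."""
    shipped_on: date
    page: str
    summary: str


def changes_between(log: list[Change], baseline: date, followup: date) -> list[Change]:
    """Return changes shipped after the baseline capture and on or before the follow-up.

    These are the only changes that could explain a difference between the two captures.
    """
    if followup < baseline:
        raise ValueError("follow-up capture must not precede the baseline")
    candidates = [c for c in log if baseline < c.shipped_on <= followup]
    return sorted(candidates, key=lambda c: c.shipped_on)
```

If a visibility shift appears between two captures and this function returns nothing, the shift probably came from the platform rather than from the agency's work.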

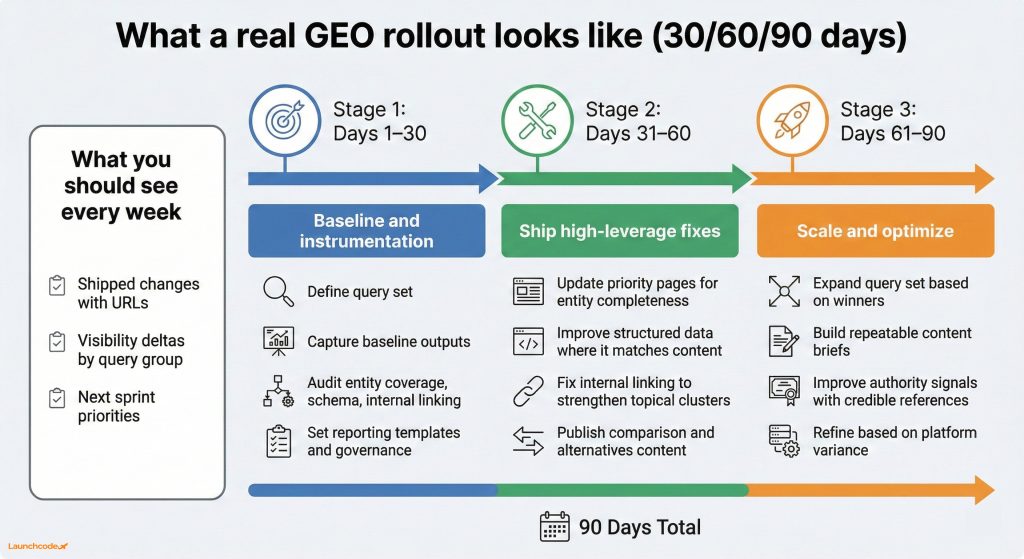

A practical 30/60/90-day plan should include:

If they cannot answer with specifics, expect slow progress.

If your internal team cannot ship development work quickly, choose an agency that can implement or coordinate implementation. Recommendation-only engagements often stall. For a structured vendor evaluation model, adapt a classic SEO RFP process and apply GEO-specific proof requirements.

GEO performance improves when your site answers the full set of related questions a model expects, using clear entities and supporting evidence. Agencies that focus on content volume often miss entity coverage, source quality, and internal linking. Choose an agency that can build a coverage map and a briefing system.

For a B2B SaaS example, strong coverage includes:

This structure helps both human readers and retrieval systems. It also reduces hallucination risk because your pages contain clear, citable facts.

If the agency cannot describe a QA workflow, expect inconsistent outputs.

A credible GEO agency does not optimize every platform equally. They select the surfaces that matter for your buyers and map your query set to those surfaces. Platform prioritization should be explicit and tied to demand patterns.

Use your buyer journey as the filter.

Semrush reported AI Overviews appearing across a meaningful share of queries in its large-scale study, which supports treating this as an active channel (Semrush AI Overviews study).

If the agency cannot describe a testing protocol, their results will be difficult to trust.
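A testing protocol can be as simple as an explicit plan of what gets sampled and how often. The sketch below expands a fixed query set across surfaces, locations, and devices, with repeated runs per combination because outputs vary. All names and the default of three runs are assumptions for illustration, not a recommended standard.

```python
from itertools import product


def build_test_plan(queries: list[str], surfaces: list[str], locations: list[str],
                    devices: list[str], runs_per_cell: int = 3) -> list[dict]:
    """Expand a fixed query set into every combination that should be sampled.

    Each combination is repeated `runs_per_cell` times so that run-to-run
    variation can be observed instead of mistaken for a real change.
    """
    if runs_per_cell < 1:
        raise ValueError("runs_per_cell must be at least 1")
    return [
        {"query": q, "surface": s, "location": loc, "device": d, "run": run}
        for q, s, loc, d in product(queries, surfaces, locations, devices)
        for run in range(1, runs_per_cell + 1)
    ]
```

An agency that can hand over a plan like this, with its own values filled in, has a protocol you can audit. The plan size also makes cost visible: two queries on two surfaces, in two locations, on two devices, with three runs each already means 48 captures per reporting period.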

GEO influences how your brand appears inside answers you do not control. A serious agency uses governance, review workflows, and accuracy standards to reduce misrepresentation risk. This is critical for regulated industries and any brand that depends on trust.

“Delivery quality depends on process discipline. Clear review steps and change logs prevent small errors from becoming client issues.”

Brittany Charles, SVP, Client Services

Do not let agencies oversell emerging standards. For example, llms.txt is a proposed convention to help LLMs interpret site content at inference time, but adoption varies and it is not a guaranteed lever (llms.txt proposal). Treat it as a due diligence question.

You can shortlist GEO agencies quickly with a structured process: align goals, collect comparable artifacts, score with weights, then run a proof review call. This removes demo bias and forces real evidence. Most teams can complete this in about one week with two stakeholders.

A practical GEO engagement combines measurement, shipping, and a repeatable content system. You need clear visibility baselines, a prioritized backlog, and reporting that connects AI presence to pipeline. Controlled query sets and disciplined implementation cycles create the most reliable progress.

Launchcodex treats GEO as part of a full funnel search system, combining technical execution, content development, and data instrumentation so teams can attribute outcomes and iterate. For related context, review:

Select three agencies, request the same proof bundle, and score them with weights that favor measurement and execution. Your next step is a proof review call where the agency must walk through a real before-and-after example tied to a fixed query set. An agency that can show clear evidence on that call is a strong pilot candidate.

If you want alignment with your existing search program, review your current stack and confirm the agency can operate within it. For related support, see:

Use a weighted scorecard and require a proof bundle from every agency. If the bundle is missing, disqualify them.

Expect a query set, baseline captures, an entity and schema focused audit, a prioritized backlog, and a reporting template with metric definitions.

No. Platform outputs vary by query and user context. Agencies should focus on controllable actions, measurement, and iteration.

They should define mention and citation, explain how they sample prompts, and provide a report example using a consistent query set.

GEO builds on SEO foundations but shifts the target outcome. You still need crawlability, content quality, and authority. GEO adds AI surface measurement, citation optimization, and entity coverage.
