9 ChatGPT ads creative formats

A format-by-format breakdown of all nine ChatGPT ad formats, from confirmed live placements to emerging types. Includes crea...

Most paid social campaigns that fail to scale share the same problem. The team increases the budget before the foundation is ready. They run into creative fatigue, algorithm disruptions, and rising CPMs with no system to fight back. The result is wasted spend and a performance plateau that feels impossible to break.

This article walks through the full scaling process: knowing when you are ready, choosing the right scaling method, managing creative at volume, using AI automation effectively, and measuring what actually drives results. Every section is grounded in current data and platform behavior so you can apply it directly to your campaigns.

Before increasing your budget, three signals need to be stable: your cost per acquisition has reached a consistent baseline at or below your target, at least one creative is clearly outperforming others, and your conversion tracking is capturing clean data. Scaling before these signals are in place does not accelerate growth. It accelerates waste.
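The three signals can be written down as an explicit pre-scaling check. The sketch below is illustrative only: the `CampaignSnapshot` fields, the 15% CPA swing tolerance, and the 1.2x winner margin are assumptions made for the example, not platform rules. The 14-day window reflects the two-to-three-week stability period discussed later in this article.

```python
from dataclasses import dataclass


@dataclass
class CampaignSnapshot:
    """Hypothetical summary of a campaign's recent performance."""
    daily_cpa: list[float]            # CPA per day, oldest first
    target_cpa: float
    creative_roas: dict[str, float]   # ROAS per creative
    tracking_verified: bool           # e.g. tracked conversions reconciled against orders


def readiness_report(snap, min_days=14, max_cpa_swing=0.15, winner_margin=1.2):
    """Return each readiness signal as a boolean. All thresholds are assumptions."""
    recent = snap.daily_cpa[-min_days:]
    avg = sum(recent) / len(recent) if recent else float("inf")
    # Signal 1: enough history, average at or below target, no day far from the average.
    stable_cpa = (
        len(recent) >= min_days
        and 0 < avg <= snap.target_cpa
        and all(abs(cpa - avg) / avg <= max_cpa_swing for cpa in recent)
    )
    # Signal 2: the best creative beats the runner-up by a clear margin.
    ranked = sorted(snap.creative_roas.values(), reverse=True)
    clear_winner = len(ranked) >= 2 and ranked[0] >= winner_margin * ranked[1]
    # Signal 3: conversion tracking has been checked and trusted.
    return {
        "stable_cpa": stable_cpa,
        "clear_winner": clear_winner,
        "clean_tracking": snap.tracking_verified,
    }


def ready_to_scale(snap):
    """Scale only when all three signals hold."""
    return all(readiness_report(snap).values())
```

Returning the individual signals, rather than a single yes or no, shows which part of the foundation still needs work.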

Steven Dang, VP of Growth and Strategy at HawkSEM, puts it plainly: scale when campaigns plateau on existing spend, or when there is a clear opportunity to expand into new audiences, geographies, or verticals. The worst time to scale is when performance is still unstable.

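Look for all three before committing to higher spend: a CPA that has held at or below target, at least one creative that clearly outperforms the rest, and conversion tracking that captures clean data.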

Skipping the readiness check is the most common and most expensive mistake in paid social. Teams see a few strong days, increase spend, and watch performance collapse as the platform's learning phase resets. Increasing the budget by more than 20 to 30% at one time risks triggering this reset, and performance can take days or weeks to recover.

A second failure point is scaling based on misleading data. If your attribution model is not capturing the full picture, you may be scaling campaigns that look profitable but are not, or cutting spend on channels that are actually driving conversions upstream.

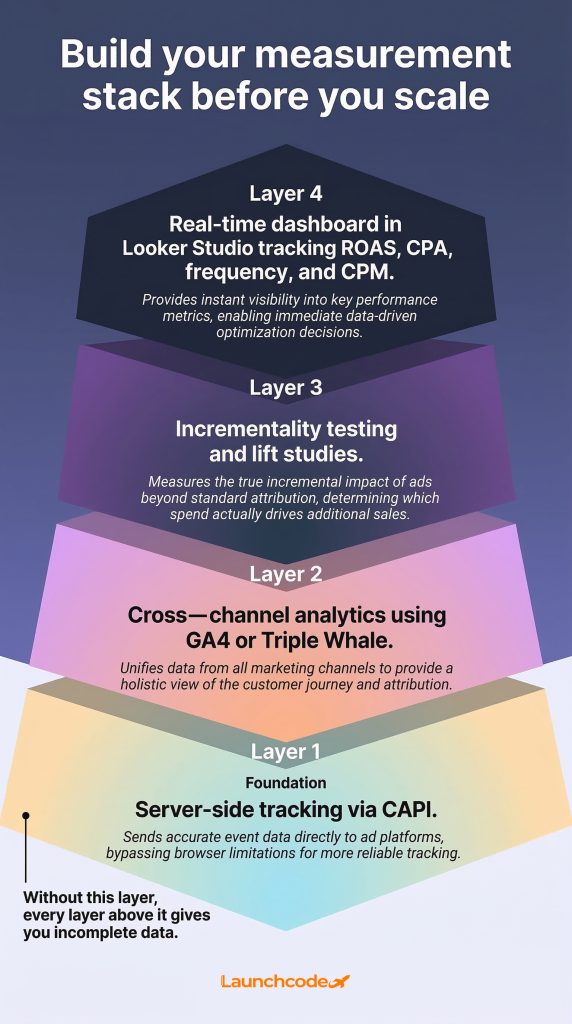

Clean conversion data is the most important prerequisite for scaling paid social. Without it, platform algorithms optimize for the wrong signals, AI-powered tools underperform, and budget decisions are based on incomplete information. Getting your tracking right is a continuous operational priority, not a one-time setup.

The iOS 14.5 update in 2021 permanently weakened browser-based pixel tracking across Meta and other platforms. Brands that still rely entirely on pixel data are feeding their campaigns degraded signals. The consequences compound at scale.

Meta's Conversions API (CAPI) sends conversion data directly from your server to Meta, bypassing browser limitations and ad blockers. It captures events the pixel misses and provides a cleaner signal for Meta's AI to optimize against.
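As a rough illustration of what a server-side event looks like, the sketch below builds a purchase payload in the general shape Meta documents for server events: an event name, a Unix timestamp, an action source, hashed customer identifiers, and purchase details. The helper names, the order ID, and the sample values are hypothetical. The block only constructs the JSON body and does not send it. Check Meta's current documentation for the endpoint, API version, and full field list before relying on any of this.

```python
import hashlib
import json
import time


def hash_email(email: str) -> str:
    """Trim, lowercase, and SHA-256 hash an email before it leaves your server."""
    return hashlib.sha256(email.strip().lower().encode("utf-8")).hexdigest()


def build_purchase_event(email, value, currency, event_id, event_time=None):
    """Build one server event (hypothetical helper; field names as assumed above)."""
    return {
        "event_name": "Purchase",
        "event_time": int(event_time if event_time is not None else time.time()),
        "action_source": "website",
        # Reusing the browser pixel's event ID lets the platform deduplicate
        # the same purchase reported by both browser and server.
        "event_id": event_id,
        "user_data": {"em": [hash_email(email)]},
        "custom_data": {"value": value, "currency": currency},
    }


# Sample values are made up for illustration.
payload = {
    "data": [
        build_purchase_event(
            " Jane@Example.com ", 59.90, "USD", "order-1001", event_time=1700000000
        )
    ]
}
print(json.dumps(payload, indent=2))
```

Normalizing before hashing matters: the same address typed with different casing or stray whitespace would otherwise produce different hashes and fail to match.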

For teams running Advantage+ campaigns, CAPI is not optional. The algorithm learns from the conversion data it receives. If that data is incomplete or delayed, the algorithm makes worse decisions with your budget, particularly as spend increases.

"We see it consistently. Brands that fix their conversion signal quality before scaling see Advantage+ perform the way it was designed to. Brands that skip it end up with an algorithm optimizing for the wrong outcomes at a much higher cost."

Olivia Tran, AVP, Media Services, Launchcodex

Last-click attribution assigns all conversion credit to the final touchpoint before a purchase. That model works poorly in multi-platform environments. TikTok's assisted conversion tracking, launched in October 2025, revealed that 79% of TikTok-attributed conversions were absent from last-click models. Teams using last-click data were cutting TikTok budget based on figures that missed most of the actual impact.

Tools like Triple Whale give e-commerce brands a multi-touch view across platforms so scaling decisions reflect actual contribution rather than last-click credit.

| Attribution model | What it measures | Scaling risk |
|---|---|---|
| Last-click | Final touchpoint only | Undervalues upper-funnel channels |
| Multi-touch | Contribution across all channels | More complex to set up |
| Incrementality | True causal lift from ads | Most accurate, requires lift studies |
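To make the difference between the first two rows concrete, the sketch below credits the same four conversion paths under last-click and under a simple linear multi-touch model, where every touchpoint on a path gets an equal share. Linear weighting is just one of several multi-touch schemes, chosen here for simplicity. The paths are invented for illustration and are not drawn from any tool or dataset mentioned in this article.

```python
from collections import defaultdict


def last_click(paths):
    """Give each conversion's full credit to the final touchpoint."""
    credit = defaultdict(float)
    for path in paths:
        credit[path[-1]] += 1.0
    return dict(credit)


def linear_multi_touch(paths):
    """Split each conversion's credit equally across every touchpoint on the path."""
    credit = defaultdict(float)
    for path in paths:
        share = 1.0 / len(path)
        for channel in path:
            credit[channel] += share
    return dict(credit)


# Made-up conversion paths, ordered from first touch to last touch.
paths = [
    ["tiktok", "meta", "search"],
    ["tiktok", "search"],
    ["meta"],
    ["tiktok", "meta"],
]

print("last-click: ", last_click(paths))
print("multi-touch:", linear_multi_touch(paths))
```

In this toy data TikTok appears on three of the four paths but never last, so last-click gives it no credit at all, while the linear model assigns it roughly a third of the total. That is the pattern behind cutting an upper-funnel channel that is in fact contributing.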

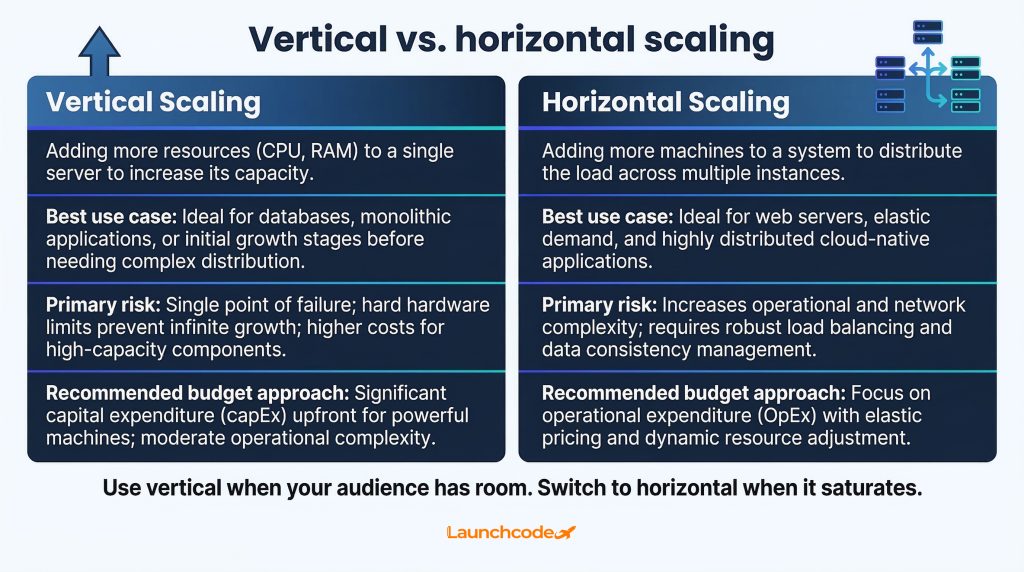

Vertical scaling means increasing the budget within an existing campaign. Horizontal scaling means expanding reach by duplicating campaigns to new audiences, placements, or platforms. Both work, but they suit different situations. Using the wrong method at the wrong time causes either audience saturation or algorithm disruption.

Understanding which method fits your current situation is the difference between growing efficiently and burning budget.

Vertical scaling works when your current audience is not yet saturated and your creative is still performing. The key rule: increase budgets in increments of no more than 20 to 30% every few days. Larger increases force the campaign back into a learning phase and temporarily spike your CPA.
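The incremental rule translates into a simple ramp schedule. The sketch below is a minimal illustration: the function name is hypothetical, and the three-day gap is an assumed reading of "every few days", not a platform requirement.

```python
def budget_ramp(start, target, step=0.20, days_between=3):
    """Return (day, daily_budget) pairs that raise a budget from start to target.

    Each increase is capped at `step` (0.20 = 20%) and increases are spaced
    `days_between` days apart. Both defaults are assumptions for illustration.
    """
    if start <= 0 or target < start or step <= 0:
        raise ValueError("need 0 < start <= target and a positive step")
    schedule = [(0, round(start, 2))]
    budget, day = start, 0
    while budget < target:
        # Never overshoot the target on the final increase.
        budget = min(budget * (1 + step), target)
        day += days_between
        schedule.append((day, round(budget, 2)))
    return schedule


for day, budget in budget_ramp(500, 1000):
    print(f"day {day:>2}: ${budget:,.2f}")
```

With these defaults, doubling a $500 daily budget takes four increases over 12 days. That is the patience the method asks for: a single jump to $1,000 would be a 100% increase, far past the 20 to 30% ceiling.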

Level Agency, a performance marketing agency, has scaled client campaigns to seven figures per month using this incremental approach. The method requires patience, but it protects existing performance while growing spend.

Horizontal scaling makes sense when you have exhausted the efficiency gains within your current audience and creative setup. You duplicate the winning ad set and target new audiences, such as lookalikes built from recent purchasers, email list segments, or interest-based groups that resemble your best customers.

Key steps for horizontal scaling:

Horizontal scaling also means testing new platforms. According to HubSpot's State of Marketing Report, paid social ranks as the channel with the second-highest ROI for both B2B and B2C brands. Diversifying across platforms with proven creative is a direct path to incremental revenue.

Start platform expansion with a small test budget, around 10 to 15% of what you spend on your primary channel. Validate performance before committing significant spend.

| Scaling method | Best use case | Key risk | Budget approach |
|---|---|---|---|
| Vertical | Audience has room, creative is working | Algorithm reset if increased too fast | Max 20 to 30% increase every few days |
| Horizontal | Primary audience saturating | Creative may not translate to new segments | Duplicate and test at low spend first |
| Platform expansion | Proven creative to repurpose | New platform learning curve | 10 to 15% of primary platform spend |

Creative fatigue is the most common reason campaigns collapse at higher spend. When your audience sees the same ad too many times, engagement drops, costs rise, and ROAS falls, often before you realize what is happening. The answer is a system that continuously tests, refreshes, and rotates creative based on performance data, not a larger production budget.

Mirella Crespi, a paid social creative strategist who works with high-growth brands, is direct about what separates winning teams: "The brands that are winning on paid social are shipping a lot of creative fast. They are testing high volume and that's what makes them successful."

Fatigue does not always announce itself. Watch for these signals:

Once fatigue sets in, continuing to spend only accelerates the damage. The fix is rotation, not more budget.

Brands that refresh ad creative every four to six weeks see engagement rise by up to 38%. At higher spend levels, the cadence shortens. A campaign running $500 per day can sustain a creative for four to six weeks. A campaign at $5,000 per day may need fresh creative every two to three weeks.

Crespi frames creative testing as a structured discipline: "Every ad creative is a hypothesis that needs to be tested. Whenever you put budget behind your creative, you know exactly what you are learning."

Follow this process to keep fresh creative flowing without burning out your team:

A DTC apparel brand that applied this system, using dynamic product ads on Meta with regular creative rotation, grew its ROAS from 2.5x to 4x. The creative discipline drove the result, not the budget size.

| Tool | Primary use | Best for |
|---|---|---|
| Smartly.io | Creative automation and multi-channel scaling | Mid-market and enterprise teams |
| TikTok Symphony Creative Studio | AI-generated TikTok-native ad content | Brands scaling on TikTok |
| Meta GenAI tools | Creative variations within Advantage+ | Meta-focused campaigns |
| Madgicx | Creative optimization and fatigue prediction for Meta | E-commerce brands |

Meta Advantage+ and TikTok Smart+ automate targeting, placement, and budget allocation across their networks. They consistently outperform manual campaigns when given clean data and a strong creative library. Understanding how to feed these systems well is now a core paid social skill.

Global social media ad spend reached $207 billion in 2025, up 15% year over year. The brands capturing the most efficient share of that spend are using automation to scale faster than manual management allows.

Meta's internal data shows that Advantage+ campaigns drive up to 37% higher incremental conversions compared to manually managed campaigns. A separate Meta figure puts the ROAS lift at up to 22% versus traditional manual setups.

Over the Thanksgiving to Cyber Monday period in 2025, Advantage+ Shopping Campaigns accounted for 33% of all Meta retail and e-commerce spend, up from 26% the previous year. For teams scaling seriously, automated campaign management has become the default, not the exception.

Advantage+ performs best when you give it:

TikTok's Smart+ launched with a significant limitation: advertisers could not adjust targeting mid-campaign without restarting from scratch. In October 2025, TikTok overhauled Smart+ with modular controls, allowing teams to automate budget and placement while keeping manual control over targeting.

David Kaufman, TikTok's Head of Product and Client Solutions for North America, described the direction: "TikTok is building automation you can customize and trust. We've been listening closely to our advertising partners and developing solutions that combine AI with controls designed for every business need."

By late 2025, 42% of US TikTok performance campaigns were running on Smart+ automated solutions, up from just 9% at the start of the year.

Automating everything at once is a common mistake. A more reliable approach is to run a manual "sandbox" campaign alongside your automated campaigns. Use the sandbox to test new creative angles and audience hypotheses. When an angle proves itself in the sandbox, move it into Advantage+ or Smart+ to scale at volume. The algorithm is effective at distributing budget efficiently. It is not designed to discover new creative directions.

At low spend, last-click attribution gives a workable picture. At scale, it actively misleads. As budgets grow across multiple platforms and funnel stages, you need a measurement framework that captures the full contribution of each channel. Getting this wrong means scaling the wrong campaigns and cutting the ones that are actually driving results.

The 79% attribution gap found in TikTok's assisted conversion data is not a TikTok-specific problem. It reflects a structural limitation in last-click measurement that becomes more damaging as spend and complexity increase.

A functional measurement stack at the scaling stage typically includes:

Businesses using professional optimization tools see a 25 to 40% improvement in campaign performance. The tooling is not the strategy, but without it, the data needed to make good scaling decisions does not exist in a usable form.

"The moment a brand starts running spend across two or more platforms, blended reporting becomes non-negotiable. We've seen teams cut budgets on channels that were generating 40% of pipeline, purely because last-click didn't show the contribution."

Tanner Medina, Co-Founder and Chief Growth Officer, Launchcodex

| Metric | Healthy range | Action signal |
|---|---|---|
| Frequency | Under 3 per week | Refresh creative above this threshold |
| CPA trend | Stable or improving | Investigate if rising more than 15% week over week |
| ROAS | At or above target | Scale back if below target for 7 or more consecutive days |
| CPM | In line with benchmarks | Rising CPM with flat creative signals audience saturation |
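The first three rows of the table can be turned into an automated check. The sketch below is one possible encoding. The function name and input shapes are assumptions. The CPM row is left out because it depends on benchmarks specific to your vertical and platform.

```python
def health_flags(frequency_per_week, cpa_this_week, cpa_last_week,
                 daily_roas, target_roas):
    """Return the action signals from the table that currently apply.

    daily_roas is a list of daily ROAS values, oldest first.
    """
    flags = []
    # Frequency: healthy under 3 impressions per user per week.
    if frequency_per_week > 3:
        flags.append("refresh creative: frequency above 3 per week")
    # CPA trend: investigate a rise of more than 15% week over week.
    if cpa_last_week > 0:
        cpa_change = (cpa_this_week - cpa_last_week) / cpa_last_week
        if cpa_change > 0.15:
            flags.append("investigate: CPA up more than 15% week over week")
    # ROAS: scale back after 7 consecutive days below target.
    last_seven = daily_roas[-7:]
    if len(last_seven) == 7 and all(r < target_roas for r in last_seven):
        flags.append("scale back: ROAS below target for 7 consecutive days")
    return flags


# Made-up numbers for illustration.
for flag in health_flags(3.4, 58.0, 48.0, [1.8] * 7, target_roas=2.0):
    print(flag)
```

Running a check like this daily catches the quiet onset of fatigue before it shows up in blended results.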

B2B and SaaS brands face a more complex measurement challenge. Sales cycles stretch over weeks or months, which means last-click attribution almost never captures the full picture. For these brands, tracking lead quality, pipeline contribution, and conversion rates from lead to closed deal matters more than raw CPA. Scaling decisions should draw from CRM data and lead-to-opportunity rates, not just platform-reported lead volume.
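A short worked example shows why raw CPA can mislead here. The sketch below computes funnel metrics from CRM-style counts for two hypothetical campaigns with the same spend. All names and figures are invented for illustration.

```python
def pipeline_metrics(spend, leads, opportunities, closed_deals):
    """Compute cost and conversion metrics across a B2B funnel."""
    def ratio(numerator, denominator):
        # Return None rather than dividing by zero when a stage is empty.
        return numerator / denominator if denominator else None

    return {
        "cost_per_lead": ratio(spend, leads),
        "lead_to_opportunity_rate": ratio(opportunities, leads),
        "cost_per_opportunity": ratio(spend, opportunities),
        "lead_to_close_rate": ratio(closed_deals, leads),
        "cost_per_closed_deal": ratio(spend, closed_deals),
    }


# Two made-up campaigns with identical spend.
campaign_a = pipeline_metrics(spend=10_000, leads=400, opportunities=20, closed_deals=4)
campaign_b = pipeline_metrics(spend=10_000, leads=200, opportunities=40, closed_deals=10)

for name, metrics in (("A", campaign_a), ("B", campaign_b)):
    print(name, metrics)
```

Campaign A wins on cost per lead ($25 against $50), yet campaign B closes deals at $1,000 each against $2,500 for A. Scaling on platform-reported lead volume alone would put the budget behind the weaker campaign.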

Scaling paid social is a sequence of decisions, each one dependent on having the right foundation in place before taking the next step. Budget is the last variable to increase, not the first.

The brands that scale profitably share a common structure. They invest in clean data before increasing spend. They build creative systems before creative fatigue catches them. They use AI automation as a multiplier for a strategy that already works, not a shortcut around one that does not.

At Launchcodex, paid social scaling follows this sequence: validate performance at current spend, fix attribution and data pipelines, build a creative rotation system, then use platform automation to accelerate what is already working. The framework applies whether a client is spending $5,000 per month or $500,000.

The practical starting point is a simple audit. Check your attribution setup, review your creative refresh cadence, and confirm that your CPA has held stable for at least two to three weeks. If all three are in order, the budget increase becomes straightforward.

Vertical scaling means increasing the budget on an existing campaign or ad set. Horizontal scaling means duplicating campaigns to reach new audiences, placements, or platforms. Vertical works when your current audience still has room. Horizontal works when that audience is saturating or you want to reduce platform risk.

Increase budgets by no more than 20 to 30% at a time. Larger increases push the campaign back into the platform's learning phase and can cause CPA to spike temporarily while the system recalibrates. Wait several days for performance to stabilize before increasing again.

Watch frequency and ROAS trends rather than a fixed calendar. A general benchmark is to refresh creative every four to six weeks, but high-spend campaigns may need new creative every two to three weeks. Pull an ad when frequency exceeds three impressions per user per week or when ROAS starts declining without other changes.

Meta's internal data shows Advantage+ campaigns deliver up to 37% higher incremental conversions compared to manually managed campaigns. Results depend on the quality and diversity of the creative library and the strength of the conversion signals fed to the algorithm via the Conversions API.

The Conversions API (CAPI) is a server-side tracking tool that sends conversion events directly from your server to Meta, bypassing browser limitations and ad blockers. At scale, clean conversion data is essential for platform algorithms to optimize correctly. Without CAPI, the algorithm works with incomplete signals and makes less accurate bidding and delivery decisions.

Look for three signals: a stable CPA held at or below target for at least two to three weeks, at least one creative that consistently outperforms others, and a tracking setup that captures accurate conversion data. Scale only when all three are in place.
